In previous posts, we reviewed two options for connecting from on-premise to Aviatrix Transit:

- Express Route to Aviatrix Transit – Option 1, where we build a BGP over IPSec overlay towards Aviatrix Transit. This solution has the following constraints:

  - Each IPSec tunnel has a 1.25 Gbps throughput limit

  - Azure only supports IPSec, not GRE, as the tunneling protocol

  - The on-premise device must support BGP over IPSec, and building and maintaining the IPSec tunnels from the on-premise device is a manual process

- Express Route to Aviatrix Transit – Option 2, where we use Azure Route Server (ARS) and some smart design to bridge BGP between Aviatrix Transit, Azure Route Server and the ExpressRoute Gateway (ERGW), then towards the on-premise device. This solution has the following constraints:

  - ARS can only exchange up to 200 routes with the ERGW

  - There is no end-to-end encryption between on-premise and Aviatrix Transit; only MACSec can be used between the on-premise devices and the Microsoft Enterprise Edge router

    - Brad Hedlund has an excellent blog about the difference: Securing your network connection to the cloud: MACSec vs. IPSec

  - It adds architectural complexity and cost and loses operational visibility. It is also an Azure-only solution, which means you will end up with a different architecture in each cloud.

Enterprises moving business-critical applications to multi-cloud need point-to-point encryption without sacrificing throughput, and look for a unified solution that provides enterprise-level visibility, control, auditability, standardization and troubleshooting toolsets. For them, neither of the two solutions above is ideal. IPSec is an industry standard used by all Cloud Service Providers, but how can we overcome its limit of 1.25 Gbps per tunnel?

Aviatrix’s winning formula solves these challenges with its patented technology called High Performance Encryption (HPE). It automatically builds multiple IPSec tunnels over either private connectivity such as ExpressRoute, or over the Internet. Aviatrix then combines these tunnels into a logical pipe to achieve line-rate encryption of up to 25 Gbps per appliance.
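As a back-of-the-envelope illustration of why combining tunnels matters, the sketch below computes how many 1.25 Gbps tunnels it would take to fill a pipe of a given size. It assumes ideal, evenly balanced load across tunnels; the function names and that ideal-scaling assumption are ours and say nothing about how HPE distributes traffic internally.

```python
import math

PER_TUNNEL_GBPS = 1.25  # per-tunnel IPSec limit quoted in this post


def tunnels_needed(target_gbps: float, per_tunnel_gbps: float = PER_TUNNEL_GBPS) -> int:
    """Minimum tunnel count to reach target_gbps, ASSUMING perfect load sharing."""
    return math.ceil(target_gbps / per_tunnel_gbps)


def aggregate_gbps(tunnel_count: int, per_tunnel_gbps: float = PER_TUNNEL_GBPS) -> float:
    """Theoretical ceiling for tunnel_count tunnels under the same assumption."""
    return tunnel_count * per_tunnel_gbps


if __name__ == "__main__":
    print(tunnels_needed(25))  # tunnels required for the 25 Gbps figure
    print(aggregate_gbps(8))   # ceiling for a bundle of 8 tunnels
```

Real-world throughput depends on instance size, packet size and flow distribution, so treat these numbers as an upper bound rather than a sizing guide.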

Aviatrix has several products that support HPE from edge locations: CloudN (physical form factor), and Edge 1.0 and Edge 2.0 (virtual and physical form factors). They can be deployed in on-premise data centers, co-location facilities, branch offices or retail locations. These edge devices give customers enterprise-grade visibility and control, monitoring, auditing and troubleshooting capabilities, and provide a unified architecture across all major Cloud Service Providers. They let us easily extend all the benefits of the Aviatrix Transit and Spoke architecture from the clouds towards on-premise.

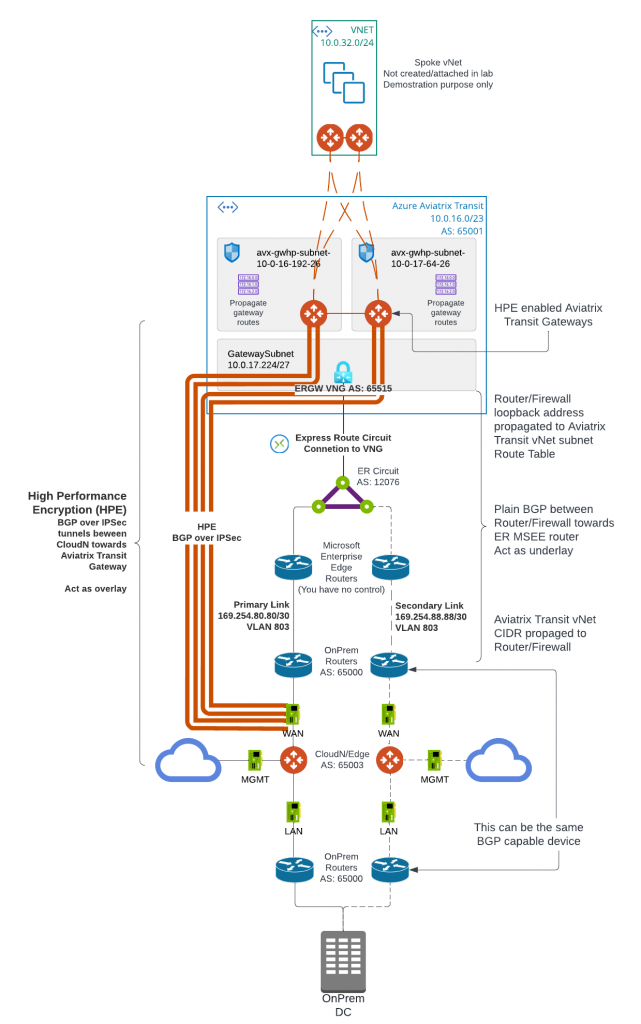

In this post, we will focus on how CloudN is deployed and connected to Aviatrix Transit. Key points of the architecture diagram:

- The Aviatrix Transit Gateway has to be deployed with Insane Mode (HPE) enabled.

- The underlay ExpressRoute connectivity has to be built from the on-premise device towards the Aviatrix Transit Gateway vNet. Follow the steps in the previous blog post: Express Route to Aviatrix Transit – Option 1

- We don’t need the loopback setup, as the HPE tunnels will be established between the CloudN WAN interface and the Aviatrix Transit Gateway eth0. The CIDR range of the CloudN WAN interface therefore needs to be reachable from the Aviatrix Transit Gateway eth0, via its subnet route table, going towards the ExpressRoute Gateway (ERGW) and through the Microsoft Enterprise Edge Router (MSEE).

- As illustrated in the diagram, the CloudN device has three interfaces:

  - eth0: WAN interface. This is where the IPSec tunnels are built towards the Aviatrix Transit Gateways. A BGP session is then established between CloudN and the Aviatrix Transit Gateways.

  - eth1: LAN interface. This is where BGP is established between CloudN and the on-premise router.

  - eth2: MGMT interface. This is where you connect to CloudN for management, and where CloudN connects to the Internet for software updates.

A review and validation of underlay setup

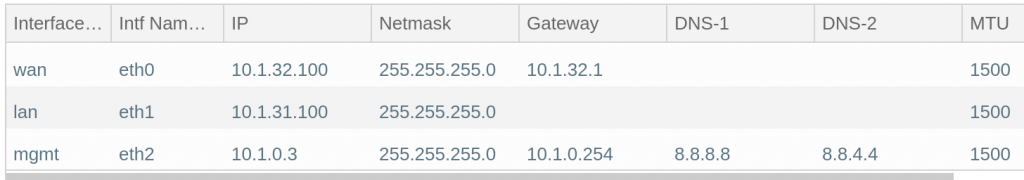

My lab CloudN interface IP address setup

My lab router already has a BGP session towards the Azure Private Peering primary connection

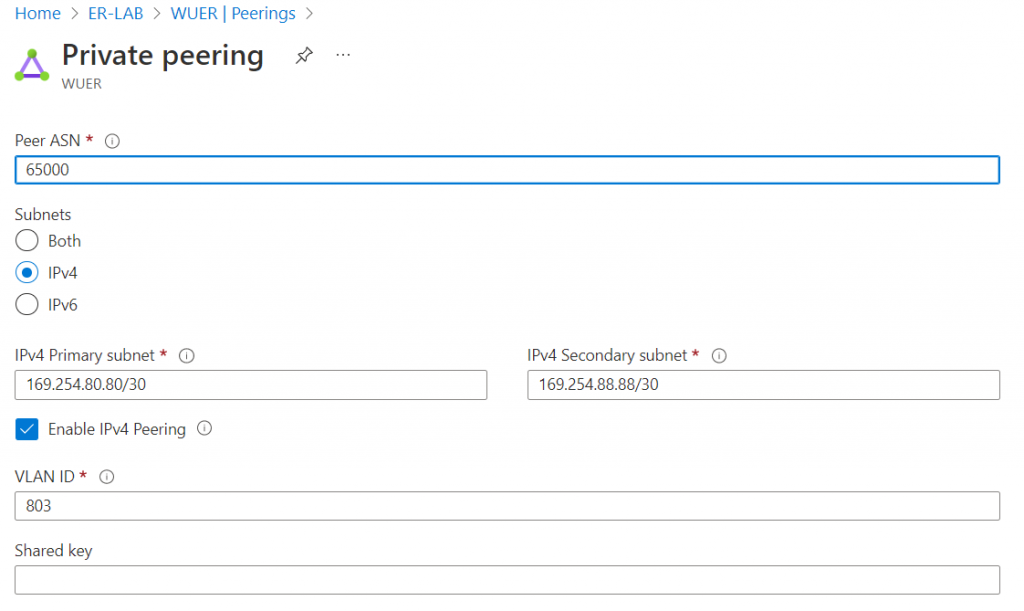

Azure Private Peering primary subnet range: 169.254.80.80/30. The on-premise router uses the first usable IP, 169.254.80.81, and the MSEE uses the second, 169.254.80.82.
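If you want to double-check the addressing for a different peering subnet, the two usable addresses of a /30 can be derived with Python's standard `ipaddress` module. This is just a quick sanity-check sketch using the subnet from this lab:

```python
import ipaddress

# Primary private-peering subnet from this lab
peering = ipaddress.ip_network("169.254.80.80/30")

# A /30 has exactly two usable host addresses
first_host, second_host = list(peering.hosts())

print(f"on-premise router: {first_host}/{peering.prefixlen}")
print(f"MSEE:              {second_host}/{peering.prefixlen}")
print(f"netmask:           {peering.netmask}")
```

The netmask it prints is the one to use in the router's `ip address` statement.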

On-premise router configuration:

- GigabitEthernet0/0/0.803 is on VLAN 803 and is assigned IP 169.254.80.81/30

- The on-premise router ASN is 65000. It neighbors with the MSEE at 169.254.80.82; the ExpressRoute circuit ASN is fixed at 12076

- The on-premise router originates multiple subnets, including the CloudN WAN range 10.1.32.0/24

- The on-premise router limits its advertisement towards the ER peering to 10.1.32.0/24 only

interface GigabitEthernet0/0/0.803

description to be connected to an Azure ER circuit

encapsulation dot1Q 803

ip address 169.254.80.81 255.255.255.252

router bgp 65000

bgp log-neighbor-changes

neighbor 169.254.80.82 remote-as 12076

neighbor 169.254.80.82 description Express Route

!

address-family ipv4

network 10.1.30.0 mask 255.255.255.0

network 10.1.30.10 mask 255.255.255.255

network 10.1.31.0 mask 255.255.255.0

network 10.1.32.0 mask 255.255.255.0

neighbor 169.254.80.82 activate

neighbor 169.254.80.82 soft-reconfiguration inbound

neighbor 169.254.80.82 prefix-list router-to-er out

maximum-paths 8

exit-address-family

ip prefix-list router-to-er description Advertise Loopback only

ip prefix-list router-to-er seq 10 permit 10.1.32.0/24
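To make the effect of the outbound prefix list concrete, here is a small Python sketch that applies the same logic to the four originated networks. It models only what the config above uses: exact-match `permit` entries without `ge`/`le`, followed by the implicit deny. The helper name `permitted` is ours.

```python
import ipaddress

# Networks originated under "address-family ipv4" in the config above
originated = ["10.1.30.0/24", "10.1.30.10/32", "10.1.31.0/24", "10.1.32.0/24"]

# ip prefix-list router-to-er seq 10 permit 10.1.32.0/24
# Without ge/le, an entry matches only that exact prefix and length.
prefix_list = [(10, "permit", "10.1.32.0/24")]


def permitted(prefix, entries):
    """Return True if the prefix is permitted by the first matching entry."""
    candidate = ipaddress.ip_network(prefix)
    for _seq, action, entry in sorted(entries):
        if candidate == ipaddress.ip_network(entry):
            return action == "permit"
    return False  # implicit deny at the end of every prefix list


advertised = [p for p in originated if permitted(p, prefix_list)]
print(advertised)
```

Only the CloudN WAN range survives the filter, which is what the ExpressRoute route table should show as learned from ASN 65000.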

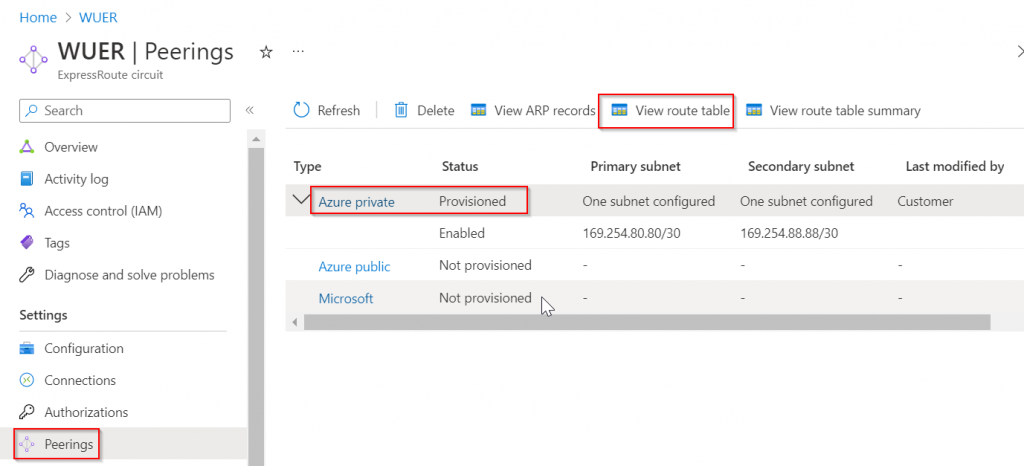

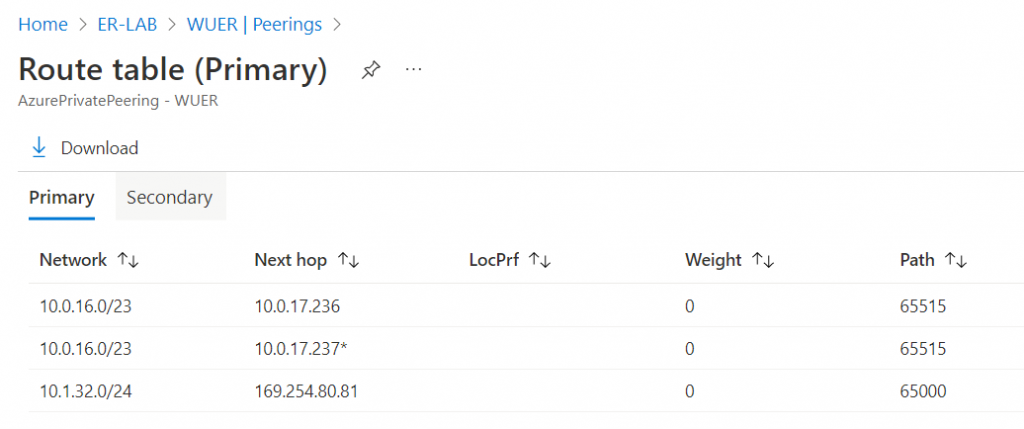

Verify under ExpressRoute Circuit -> Peerings -> Azure Private -> View route table

The route table shows the Aviatrix Transit vNet CIDR 10.0.16.0/23 received from the ERGW, which uses the fixed ASN 65515, and the CloudN WAN CIDR 10.1.32.0/24 received from the on-premise router, ASN 65000.

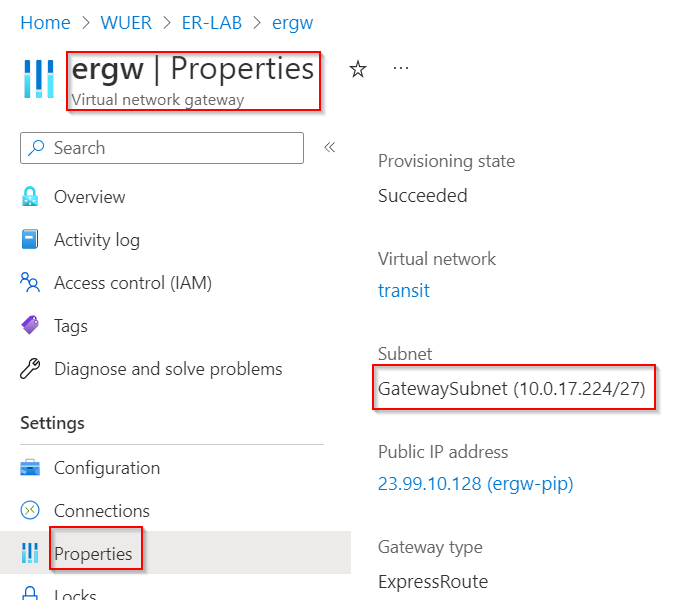

10.0.17.236 and 10.0.17.237 are the ERGW instances. As seen below, the ERGW is associated with the GatewaySubnet 10.0.17.224/27.

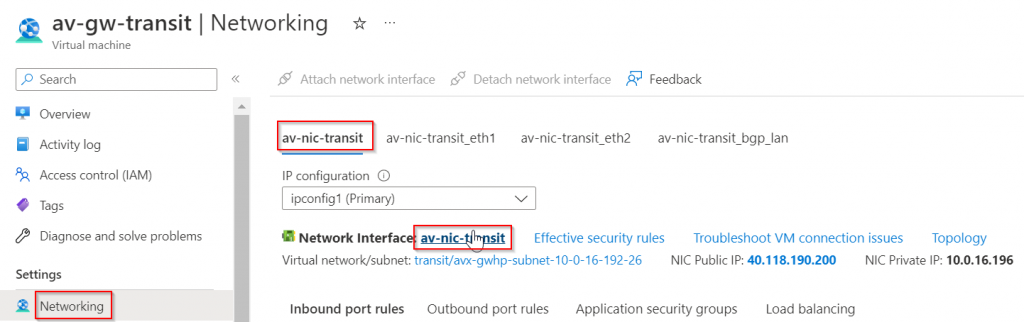

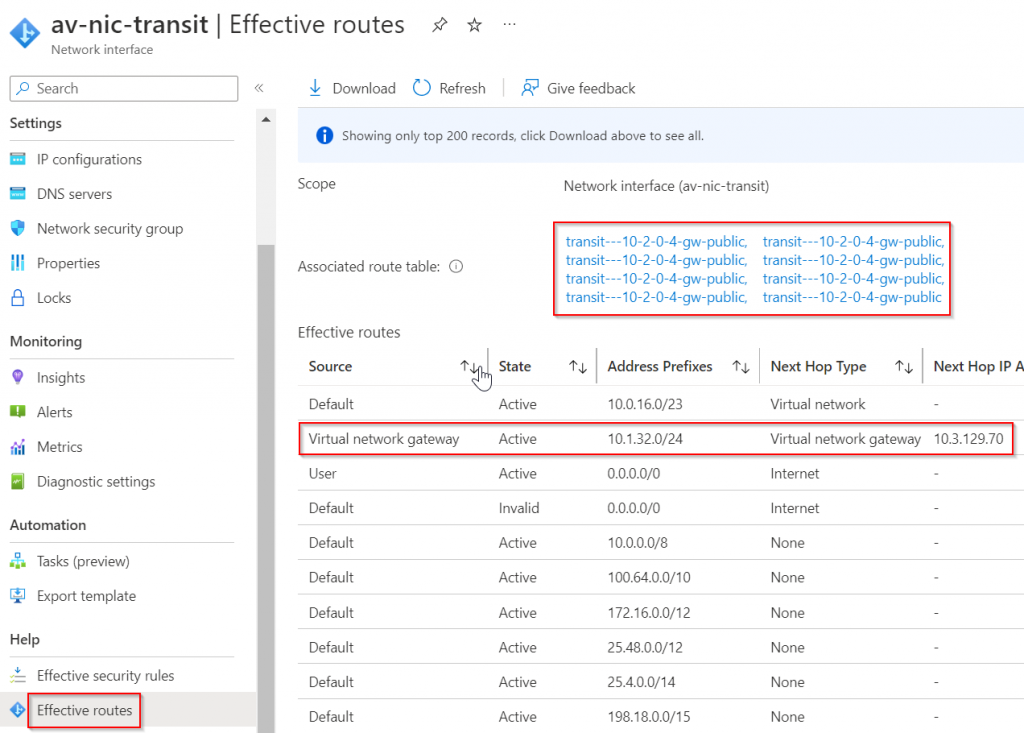
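The addressing relationships above are easy to verify by hand, but a quick check with Python's `ipaddress` module can help when adapting this to your own ranges. A minimal sketch using the values from this lab:

```python
import ipaddress

transit_vnet = ipaddress.ip_network("10.0.16.0/23")
gateway_subnet = ipaddress.ip_network("10.0.17.224/27")
ergw_next_hops = [
    ipaddress.ip_address("10.0.17.236"),
    ipaddress.ip_address("10.0.17.237"),
]

# GatewaySubnet must sit inside the Transit vNet address space
subnet_in_vnet = gateway_subnet.subnet_of(transit_vnet)

# Both next hops seen in the route table should fall inside GatewaySubnet
hops_in_subnet = all(hop in gateway_subnet for hop in ergw_next_hops)

print("GatewaySubnet inside Transit vNet:", subnet_in_vnet)
print("ERGW next hops inside GatewaySubnet:", hops_in_subnet)
```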

We can also validate on the Aviatrix Transit Gateway eth0 effective route table

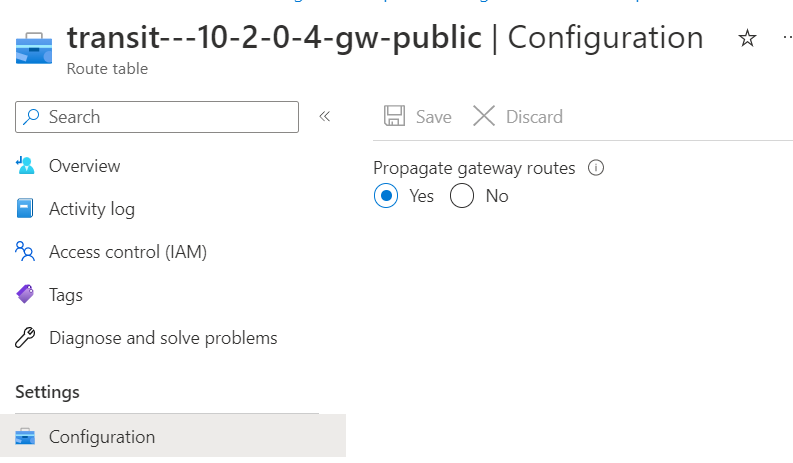

Also remember that the route table must have Propagate gateway routes checked, so that the ERGW can program the routes it receives from ER private peering.

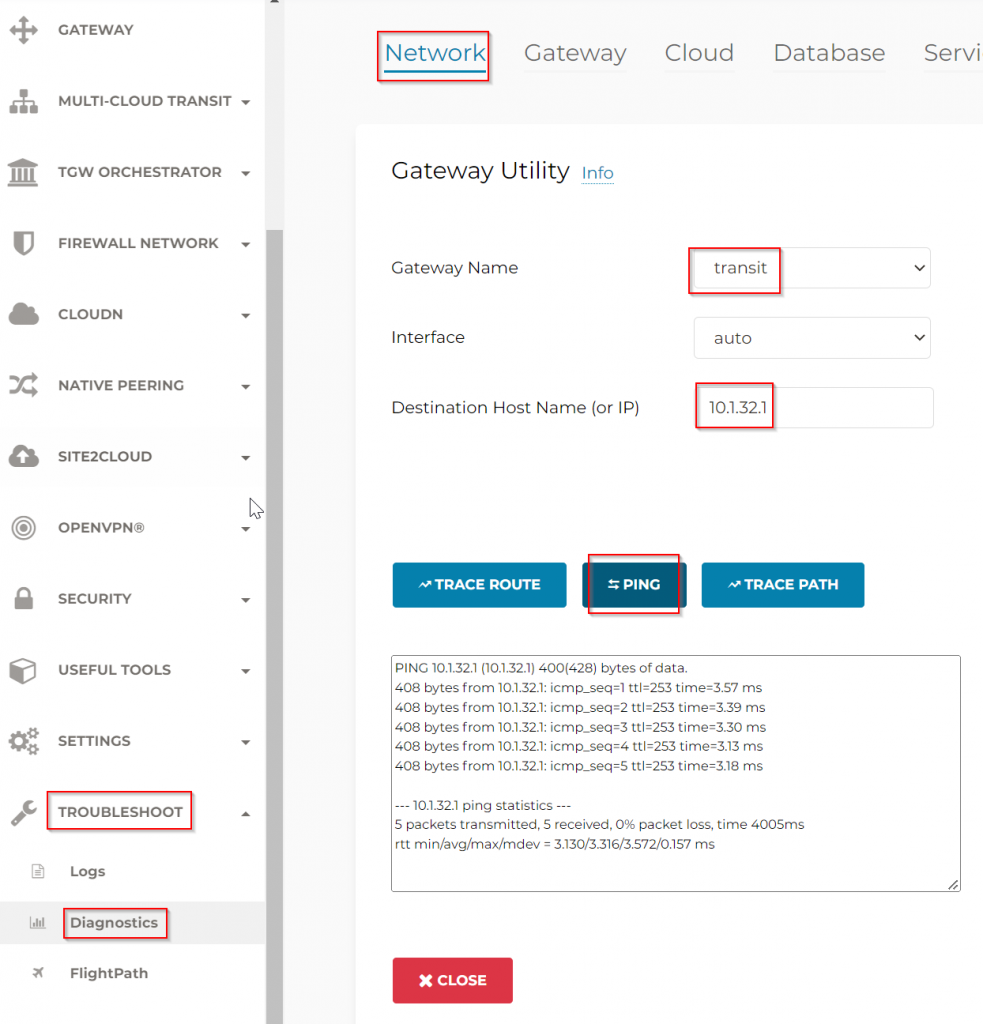

From the Aviatrix Transit Gateway, ping the default gateway of the CloudN WAN interface. This address lives on the on-premise router.

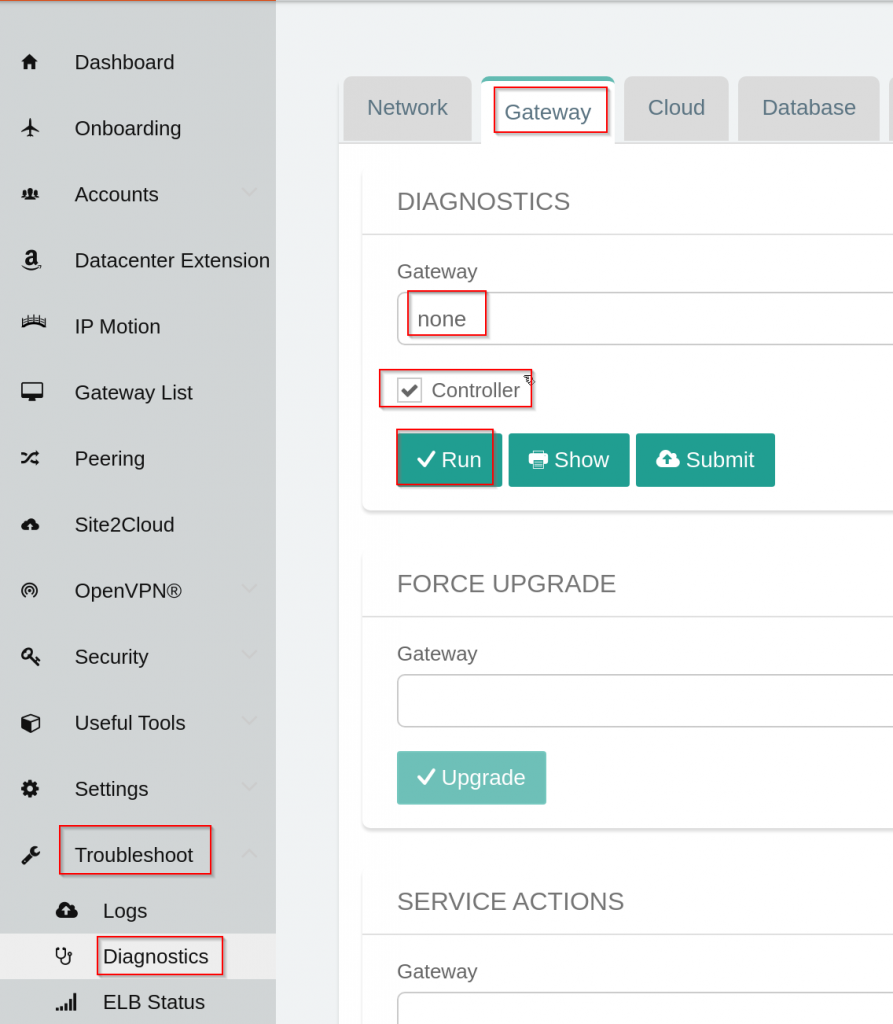

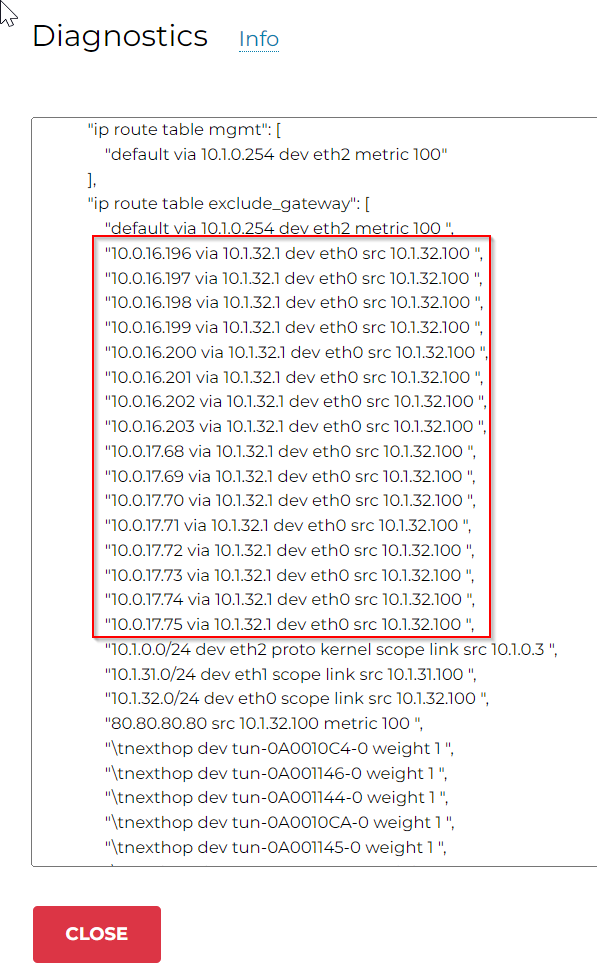

You will not be able to ping CloudN’s WAN interface 10.1.32.100 yet, even though we saw earlier that the CloudN WAN interface has a gateway pointing to the on-premise router at 10.1.32.1. To see why, log on to the CloudN device itself. When CloudN is not registered with an Aviatrix Controller, it is considered a Standalone CloudN, and diagnostics can be run on the device directly. The interface looks like an older version of the Aviatrix Controller interface. Go to Troubleshoot -> Diagnostics -> Gateway, make sure Gateway is set to none and Controller is checked, then click Run.

Look at the ip rule section:

"ip rule": [

"0:\tfrom all lookup local ",

"5:\tfrom all fwmark 0xf4240 lookup mgmt ",

"10:\tfrom all iif lo lookup exclude_gateway ",

"32766:\tfrom all lookup main ",

"32767:\tfrom all lookup default"

],

- 0: First it looks at the local route table, where it won’t find a match for the Aviatrix Transit vNet CIDR 10.0.16.0/23

- 5: Then it looks for packets marked 0xf4240, which are packets that came in from the mgmt interface eth2. This won’t match either, as the response packet originates from CloudN itself

- 10: Packets that come from loopback, meaning locally generated packets, use the exclude_gateway route table, where 10.1.0.254 is the default gateway via the mgmt interface

- It does not process further rules, as it has found a match.

exclude_gateway route table

"ip route table exclude_gateway": [

"default via 10.1.0.254 dev eth2 metric 100 ",

"10.1.0.0/24 dev eth2 proto kernel scope link src 10.1.0.3 ",

"10.1.31.0/24 dev eth1 scope link src 10.1.31.100 ",

"10.1.32.0/24 dev eth0 scope link src 10.1.32.100 ",

"throw 169.254.0.0/16"

],

So how will CloudN build IPSec tunnels with the Aviatrix Transit Gateways? Read on.
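The rule walk described above can be sketched in a few lines of Python. This is a simplified model of Linux policy routing, not what CloudN actually runs: the contents of the `local` table are an assumption (only CloudN's own interface addresses), the `mgmt`, `main` and `default` tables are left empty because the diagnostics above do not show them, and the destination 10.0.16.4 is a hypothetical Transit Gateway address inside 10.0.16.0/23.

```python
import ipaddress

# Route tables as (prefix, kind, detail). "local" is an ASSUMPTION;
# "exclude_gateway" is copied from the diagnostics output above.
TABLES = {
    "local": [
        ("10.1.0.3/32", "local", "eth2"),
        ("10.1.31.100/32", "local", "eth1"),
        ("10.1.32.100/32", "local", "eth0"),
    ],
    "exclude_gateway": [
        ("0.0.0.0/0", "unicast", "via 10.1.0.254 dev eth2"),
        ("10.1.0.0/24", "unicast", "dev eth2"),
        ("10.1.31.0/24", "unicast", "dev eth1"),
        ("10.1.32.0/24", "unicast", "dev eth0"),
        ("169.254.0.0/16", "throw", ""),
    ],
}

# (priority, selector, table) mirroring the "ip rule" output
RULES = [
    (0, lambda pkt: True, "local"),
    (5, lambda pkt: pkt.get("fwmark") == 0xF4240, "mgmt"),
    (10, lambda pkt: pkt.get("iif") == "lo", "exclude_gateway"),
    (32766, lambda pkt: True, "main"),
    (32767, lambda pkt: True, "default"),
]


def lookup(table, dst):
    """Longest-prefix match within one table; None if nothing matches."""
    dst_ip = ipaddress.ip_address(dst)
    matches = [r for r in TABLES.get(table, []) if dst_ip in ipaddress.ip_network(r[0])]
    return max(matches, key=lambda r: ipaddress.ip_network(r[0]).prefixlen, default=None)


def route(pkt):
    """Walk the rules in priority order and return the first usable route."""
    for priority, selector, table in RULES:
        if not selector(pkt):
            continue
        hit = lookup(table, pkt["dst"])
        if hit is None or hit[1] == "throw":
            continue  # no route here, or "throw": fall through to the next rule
        return priority, table, hit
    return None


# A reply generated by CloudN itself (iif lo) to a HYPOTHETICAL Transit Gateway IP
reply = {"dst": "10.0.16.4", "iif": "lo"}
print(route(reply))
```

In this model the reply is resolved by rule 10 and leaves via the mgmt default gateway instead of the WAN interface, which is consistent with the failed ping.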

CloudN registration, attachment and validation

As mentioned earlier, a CloudN that is not registered with an Aviatrix Controller is considered a Standalone CloudN, and you need to manage everything directly from the CloudN appliance itself, including building Site2Cloud IPSec tunnels.

Once you have registered CloudN with the Aviatrix Controller, it becomes a Managed CloudN appliance, and all operations should be performed on the Aviatrix Controller. This makes building HPE tunnels as easy as attaching a Spoke Gateway to a Transit Gateway.

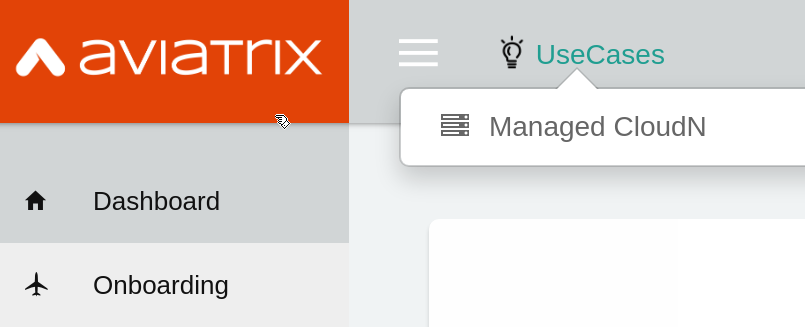

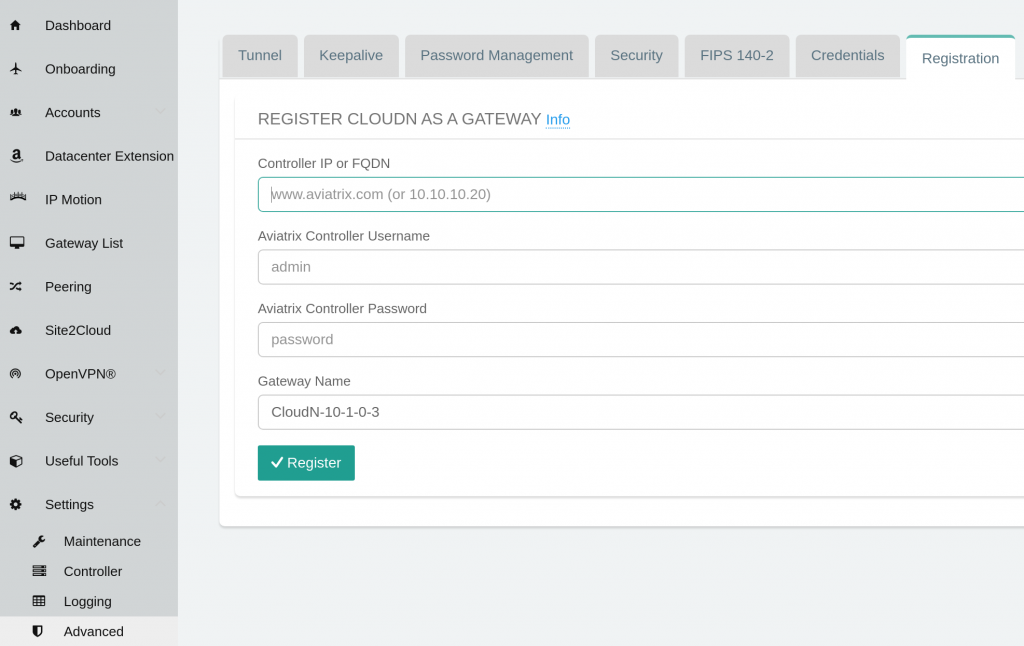

To register CloudN, log in to CloudN and click on UseCases -> Managed CloudN

This brings up the Settings -> Advanced -> Registration page, where you need to enter the Aviatrix Controller IP or DNS name, a username and password, and a name for the CloudN appliance. Note:

- The Network Security Group on the controller must allow the public IP of the mgmt interface access on TCP 443

- The user account needs to have the CloudN permission

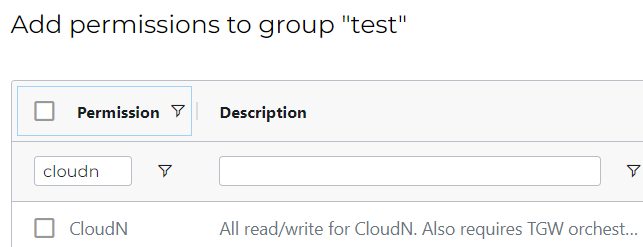

Example of adding CloudN read/write permission to “test” permission group in Controller -> Accounts -> Permission Groups -> Select permission group -> Manage permissions

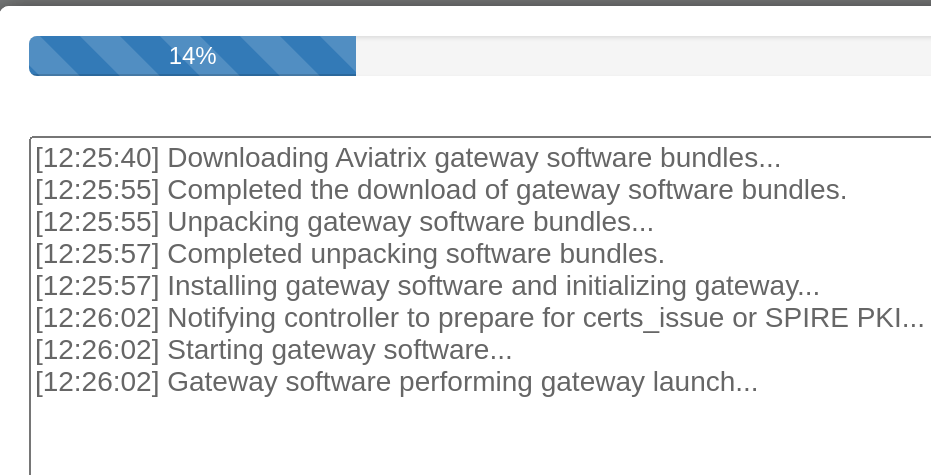

Wait for the registration to complete

The registration process requires outbound Internet connectivity. If you run into issues, refer to Required Access for External Sites

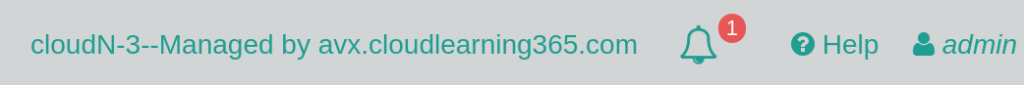

Once registered, the top right of the CloudN appliance console indicates that the appliance is now managed by the Aviatrix Controller. All operations going forward need to be performed from the Aviatrix Controller.

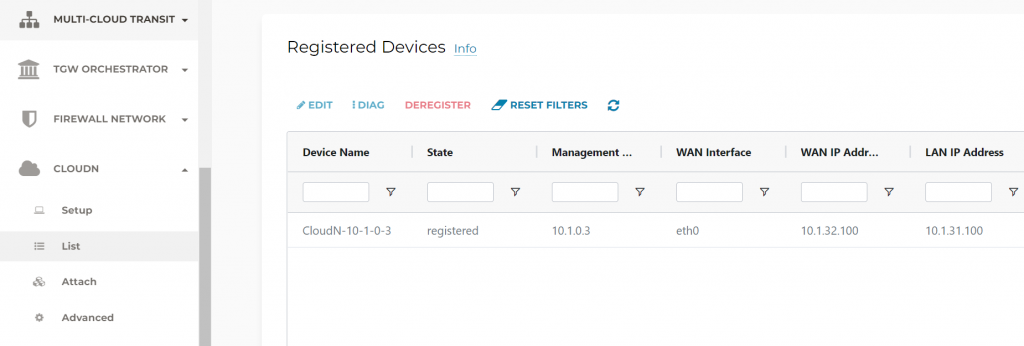

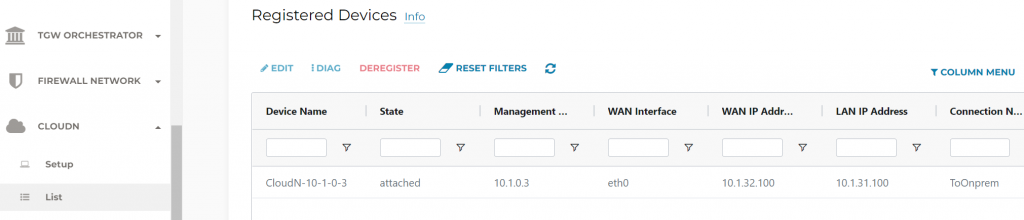

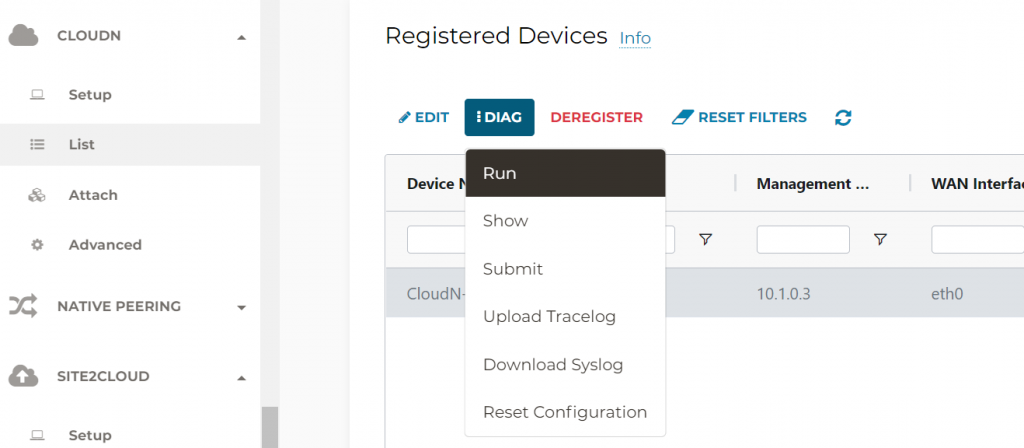

In Aviatrix Controller -> CloudN -> List, you should see the device is registered

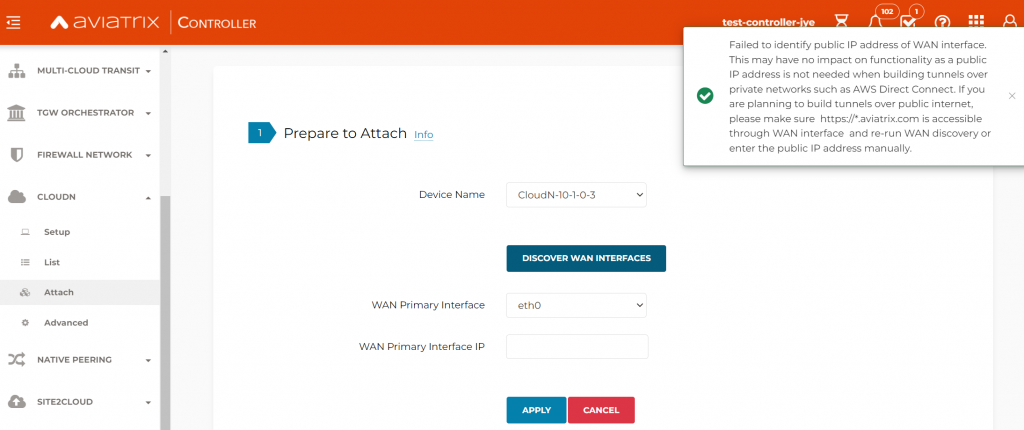

Controller -> CloudN -> Attach -> Prepare to Attach: this step is only necessary when building tunnels over Internet connectivity. We are building the tunnels over ExpressRoute, so this step can be skipped. However, if you are building tunnels over the Internet and detection fails, you can manually specify the public IP of the WAN port, then click on Apply

On-premise router LAN interface and BGP configuration

- Loopback interface 88 is created for testing purposes

- Note that the on-premise router LAN interface 10.1.31.1 peers with the CloudN LAN interface 10.1.31.100

- The ip prefix-list ensures that only loopback 88 gets propagated to CloudN

interface Loopback88

ip address 192.168.88.88 255.255.255.255

interface GigabitEthernet0/0/1.31

description "cloudN-3 LAN"

encapsulation dot1Q 31

ip address 10.1.31.1 255.255.255.0

router bgp 65000

bgp log-neighbor-changes

neighbor 10.1.31.100 remote-as 65003

neighbor 10.1.31.100 description cloudN-3

!

address-family ipv4

network 10.1.30.0 mask 255.255.255.0

network 10.1.30.10 mask 255.255.255.255

network 10.1.31.0 mask 255.255.255.0

network 10.1.32.0 mask 255.255.255.0

neighbor 10.1.31.100 activate

neighbor 10.1.31.100 soft-reconfiguration inbound

neighbor 10.1.31.100 prefix-list to-cloudN out

maximum-paths 8

exit-address-family

ip prefix-list to-cloudN seq 10 permit 192.168.88.88/32

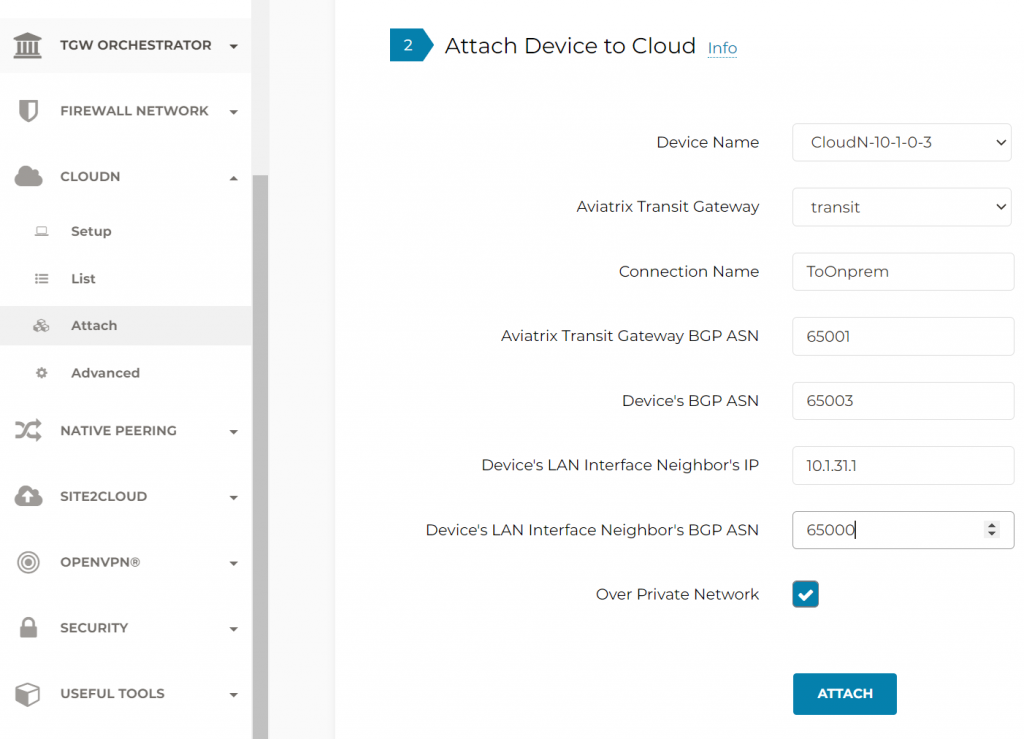

Attach CloudN to Aviatrix Transit. The same workflow also establishes BGP with the on-premise router. Over Private Network is checked because we are using ExpressRoute

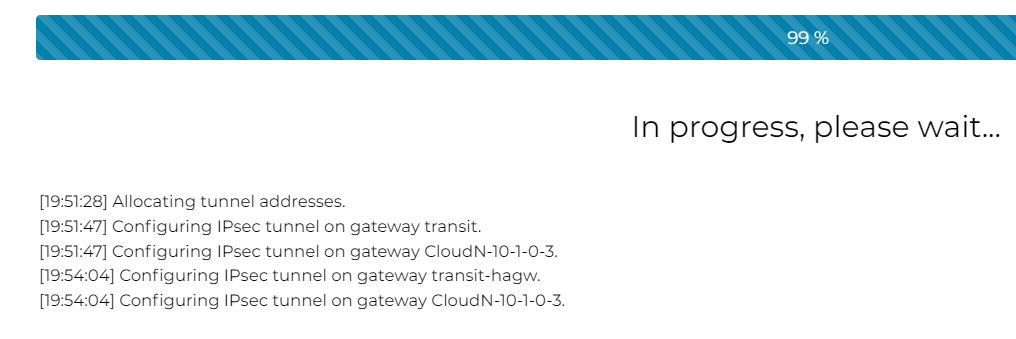

CloudN is building IPSec tunnels towards both Aviatrix Transit Gateways

Once the attach is completed, CloudN -> List -> you can see the CloudN is now attached

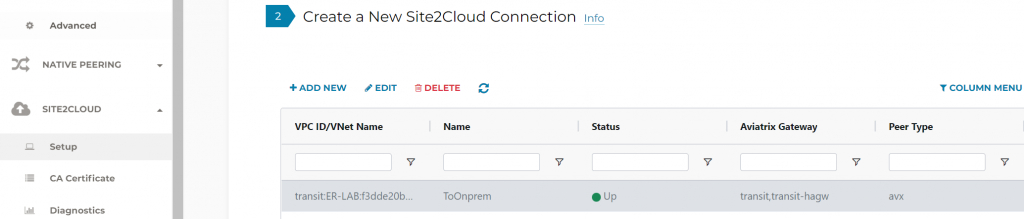

In Site2Cloud -> Setup, we should see that the connections from CloudN to the Aviatrix Transit Gateways are Up

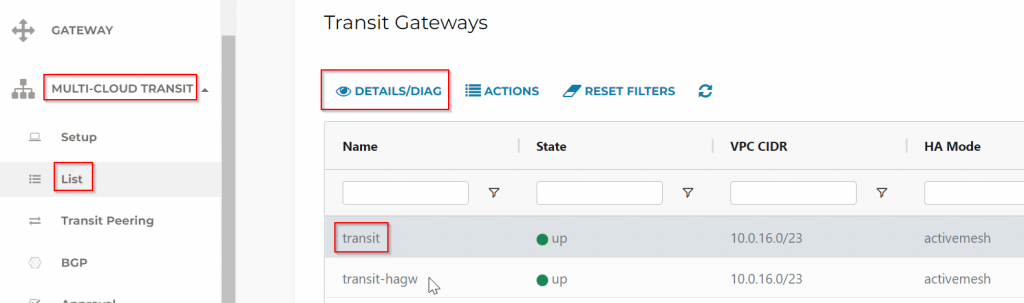

To confirm BGP, Multi-Cloud Transit -> List -> Transit Gateways -> Select a Transit Gateway, then click on Details/Diag

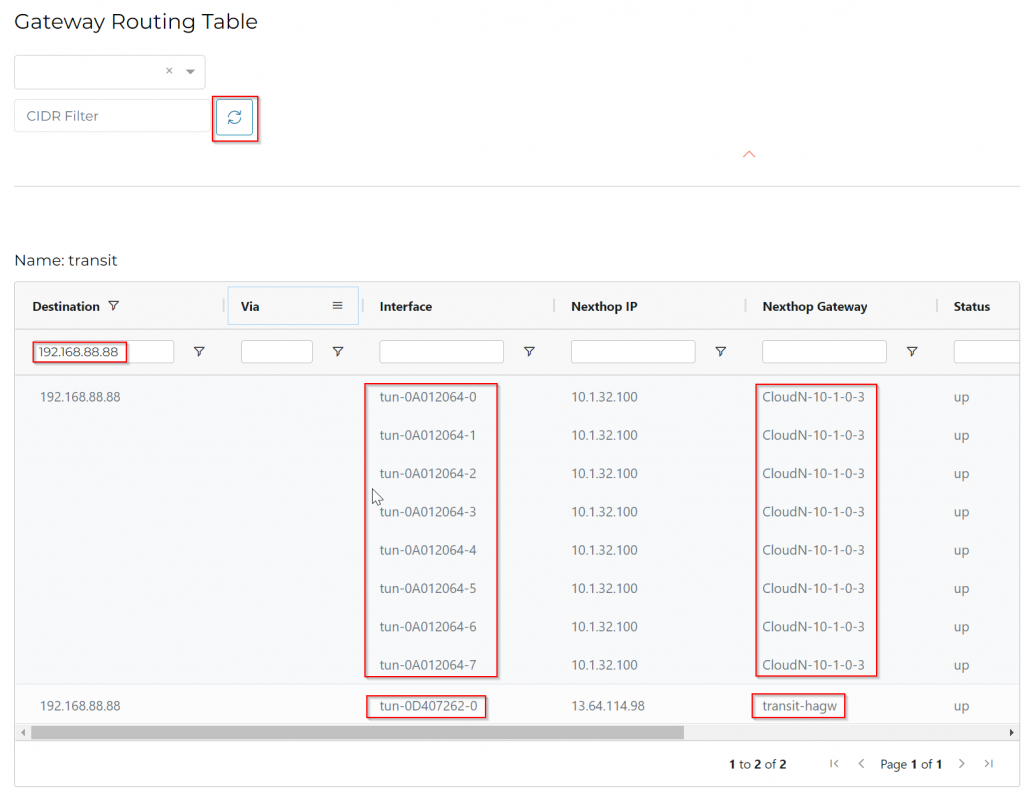

Scroll to Gateway Route Table, then click on the Refresh button.

Search for the 192.168.88.88 loopback we advertised. You can see 8 tunnels built between CloudN and the Aviatrix Transit Gateway, plus a tunnel going towards the HA gateway as a redundant route.

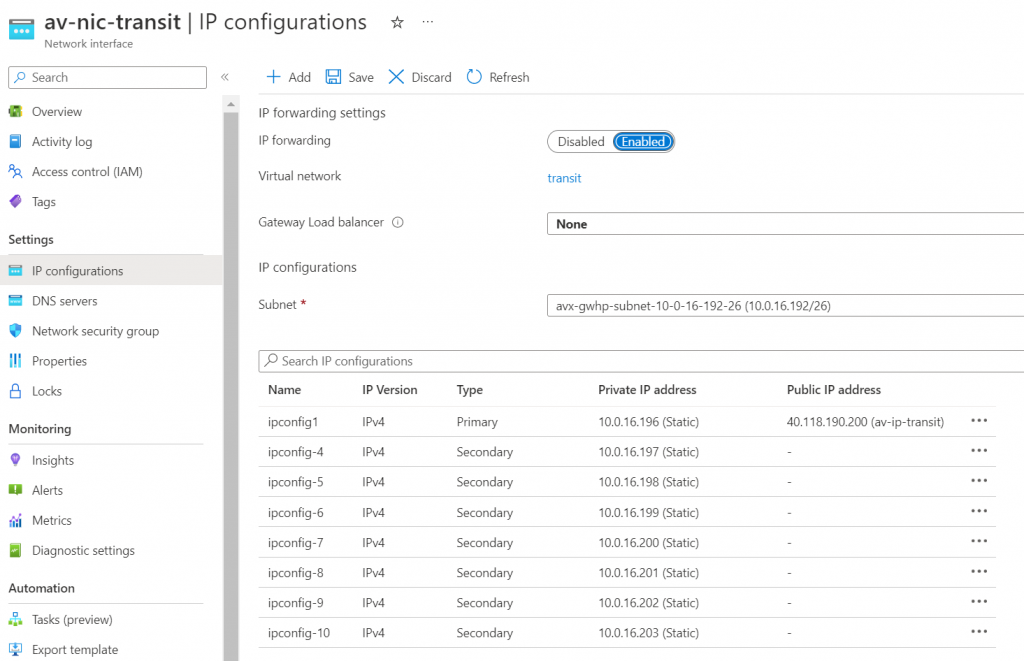

We can observe in the Azure Portal that the Transit Gateway has 8 IPs assigned to eth0

Since we have not attached any spoke to the Transit, the on-premise router won’t receive any routes from Aviatrix Transit yet

show ip bgp neighbors 10.1.31.100 received-routes

Total number of prefixes 0

As described in the previous blog, there are multiple ways to test this:

- Attach an Aviatrix Spoke

- Advertise Transit CIDR

- Gateway Manual BGP Advertised Network List

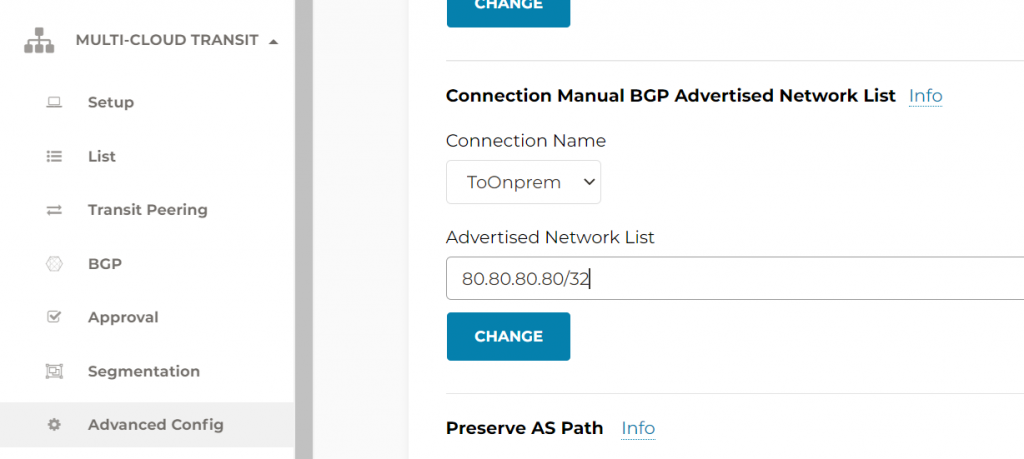

- Connection Manual BGP Advertised Network List

The following example uses the last method:

#show ip bgp neighbors 10.1.31.100 received-routes

BGP table version is 10, local router ID is 192.168.77.1

Status codes: s suppressed, d damped, h history, * valid, > best, i - internal,

r RIB-failure, S Stale, m multipath, b backup-path, f RT-Filter,

x best-external, a additional-path, c RIB-compressed,

t secondary path, L long-lived-stale,

Origin codes: i - IGP, e - EGP, ? - incomplete

RPKI validation codes: V valid, I invalid, N Not found

Network Next Hop Metric LocPrf Weight Path

*> 80.80.80.80/32 10.1.31.100 0 65003 65001 i

Total number of prefixes 1
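If you collect this output in scripts, a route line can be broken into fields with a few lines of Python. The sketch below is a hypothetical helper, not part of any Cisco or Aviatrix tooling, and it assumes the Metric and LocPrf columns are blank as they are in the output above, so the first number after the next hop is the Weight. It then checks that the first ASN in the path is 65003, the `remote-as` configured for the CloudN neighbor.

```python
def parse_route(line):
    """Split one 'show ip bgp ... received-routes' entry into fields.

    ASSUMPTION: Metric and LocPrf are blank, so the first numeric token
    after the next hop is the Weight and the rest is the AS path.
    """
    tokens = line.split()
    status, prefix, next_hop = tokens[0], tokens[1], tokens[2]
    origin = tokens[-1]
    numeric = tokens[3:-1]
    return {
        "status": status,
        "prefix": prefix,
        "next_hop": next_hop,
        "weight": int(numeric[0]),
        "as_path": [int(asn) for asn in numeric[1:]],
        "origin": origin,
    }


CLOUDN_ASN = 65003  # from "neighbor 10.1.31.100 remote-as 65003"

entry = parse_route("*> 80.80.80.80/32 10.1.31.100 0 65003 65001 i")
print(entry)
print("learned via CloudN:", entry["as_path"][0] == CLOUDN_ASN)
```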

CloudN advanced features

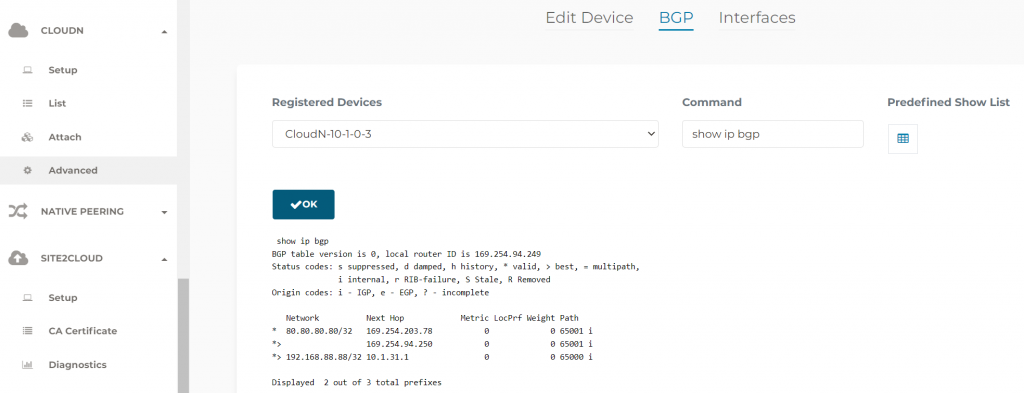

Now that the CloudN is managed by the Aviatrix Controller, the following operations are done in the Aviatrix Controller. Go to CloudN -> Advanced -> BGP and pick a command to run, for example show ip bgp, which runs on the CloudN itself

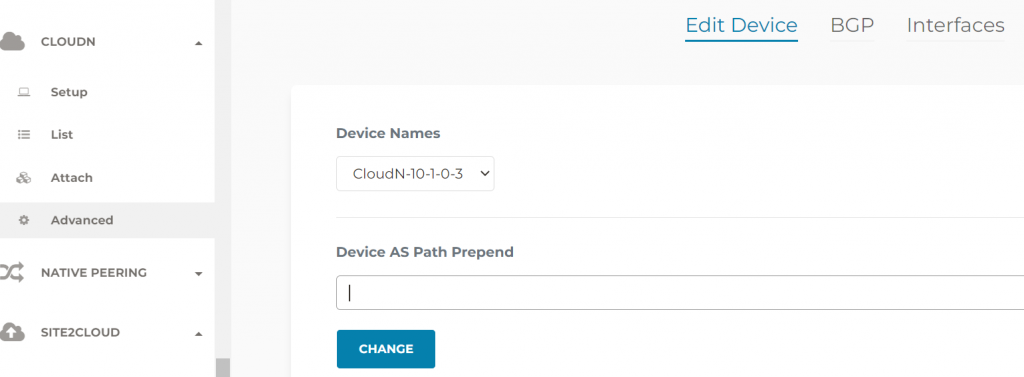

You can also specify AS Path Prepend for a particular CloudN device. This helps in a setup with multiple CloudN devices where you want one CloudN to be on the preferred active path
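To illustrate why prepending steers traffic, here is a minimal Python sketch of the AS-path-length step of BGP best-path selection. It ignores every other attribute (weight, local preference, MED and so on). The second device, `cloudN-4` with ASN 65004, is hypothetical and exists only for this example.

```python
def prepend(as_path, asn, count):
    """Return a new AS path with asn prepended count times."""
    return [asn] * count + list(as_path)


def preferred(paths):
    """Pick the path with the shortest AS path (other BGP attributes ignored)."""
    return min(paths, key=lambda name: len(paths[name]))


paths = {
    # AS path seen on the on-premise router via the CloudN in this lab
    "cloudN-3": [65003, 65001],
    # HYPOTHETICAL second CloudN, configured with two extra prepends
    "cloudN-4": prepend([65004, 65001], 65004, 2),
}

for name, path in paths.items():
    print(name, path)
print("preferred:", preferred(paths))
```

The device without prepends wins because its path is shorter, so the prepended CloudN carries traffic only if the preferred one goes away.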

Revisit the prior routing issue between CloudN and Aviatrix Transit Gateway

CloudN -> List -> Select the CloudN device -> Diag -> Run

The Aviatrix Controller has programmed the eth0 IP addresses of all Aviatrix Transit Gateways into the exclude_gateway route table, pointing to the on-premise router. This resolves the connectivity issue between the CloudN WAN interface and the Aviatrix Transit Gateways’ eth0 mentioned earlier.
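Why a more specific route fixes the problem comes down to longest-prefix matching. The sketch below compares the exclude_gateway table before and after such a route is added. The Transit Gateway address 10.0.16.4 is hypothetical, and the exact form of the route the Controller programs is an assumption; only the next hop 10.1.32.1 (the on-premise router on the WAN side) comes from this lab.

```python
import ipaddress


def best_route(table, dst):
    """Return the next hop of the longest matching prefix in the table."""
    dst_ip = ipaddress.ip_address(dst)
    candidates = [
        (ipaddress.ip_network(prefix), next_hop)
        for prefix, next_hop in table
        if dst_ip in ipaddress.ip_network(prefix)
    ]
    return max(candidates, key=lambda c: c[0].prefixlen)[1]


# exclude_gateway table of the Standalone CloudN, from the earlier diagnostics
before = [
    ("0.0.0.0/0", "via 10.1.0.254 dev eth2"),
    ("10.1.0.0/24", "dev eth2"),
    ("10.1.31.0/24", "dev eth1"),
    ("10.1.32.0/24", "dev eth0"),
]

TRANSIT_ETH0 = "10.0.16.4"  # HYPOTHETICAL Transit Gateway eth0 address

# ASSUMED shape of the route added once CloudN is attached
after = before + [(f"{TRANSIT_ETH0}/32", "via 10.1.32.1 dev eth0")]

print("before:", best_route(before, TRANSIT_ETH0))
print("after: ", best_route(after, TRANSIT_ETH0))
```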

Conclusion

By using Aviatrix CloudN to connect on-premise to the clouds:

- We can solve the challenges enterprises face when moving mission-critical applications to the clouds, by providing a unified architecture, enterprise-grade visibility and control, monitoring, auditing and advanced troubleshooting capabilities

- High Performance Encryption removes the dilemma of choosing between security, performance and complexity

- A unified architecture requires less training, lets the workforce quickly onboard secure connectivity to the clouds, and frees them to focus on what really matters to the business.