In the last two blog posts, we discussed two methods for connecting on-premises to Aviatrix Transit via Direct Connect:

- Option 1: Use a detached Virtual Private Gateway (VGW) to build BGP over IPSec tunnels with Aviatrix Transit. This solution has the following constraints: 1.25Gbps per IPSec tunnel, a maximum of 100 prefixes between on-premises and cloud, and potential exposure to man-in-the-middle attacks.

- Option 2: Use an attached VGW to build underlay connectivity between the on-premises router/firewall and the Aviatrix Transit VPC, then use GRE tunnels to build overlay connectivity between the on-premises router/firewall and Aviatrix Transit. This solution provides 5Gbps per GRE tunnel and bypasses the 100-prefix limitation. However, it only works with AWS, and it still has potential exposure to man-in-the-middle attacks.

Today, more and more enterprises are adopting multiple cloud service providers (CSPs). Some do so because of mergers and acquisitions, some because of partner or vendor preferences, and some simply because one CSP offers superior products that the others do not.

Is there a solution that can standardize the networking architecture across all CSPs, provide the necessary security and bandwidth, offer enterprise-grade features, and help enterprises achieve day 2 operational excellence?

If you have followed the last two blog posts, you have seen how a tunneling protocol can help overcome the prefix limitation, how the non-encrypted tunneling protocol GRE can provide better throughput than the encrypted tunneling protocol IPSec, and that IPSec is used by all major CSPs.

Can we have the encryption, tunneling and compatibility of IPSec, satisfy the bandwidth requirements, and still avoid building and maintaining so many IPSec tunnels by hand?

My friend, you have just cracked Aviatrix’s winning formula: Insane Mode, or High Performance Encryption (HPE), which automatically builds and maintains multiple IPSec tunnels, uses either private connectivity or the Internet as underlay, and combines these tunnels into one large logical connection.

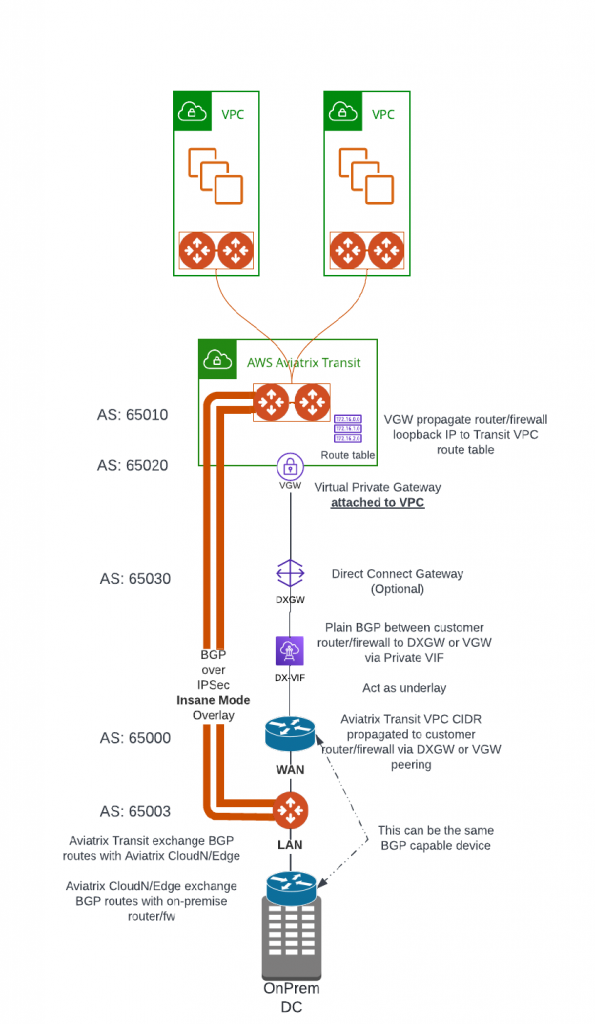

Aviatrix has several products, CloudN, Edge 1.0 and Edge 2.0, that help build HPE from on-premises to Aviatrix Transit. Their networking architectures are similar. The following architecture uses CloudN as the example; its diagram looks very similar to the one in Option 2:

- A BGP-capable router/firewall builds the underlay connectivity towards the Aviatrix Transit VPC.

- CloudN has three interfaces: WAN, LAN and Management

- The WAN interface needs to reach the Aviatrix Transit Gateways so that it can automatically build multiple IPSec tunnels with them, then run a BGP session on top to exchange routes with Aviatrix Transit

- LAN interface needs to exchange BGP with on-premise devices

- Management interface (not shown in diagram) needs to communicate with Aviatrix Controller to be part of control plane operations

- As shown in the diagram below, the CloudN WAN interface is placed behind the router that establishes underlay connectivity to the Aviatrix Transit VPC. The same router may also have BGP peering with on-premises devices, in which case the CloudN LAN interface can connect to the same router.

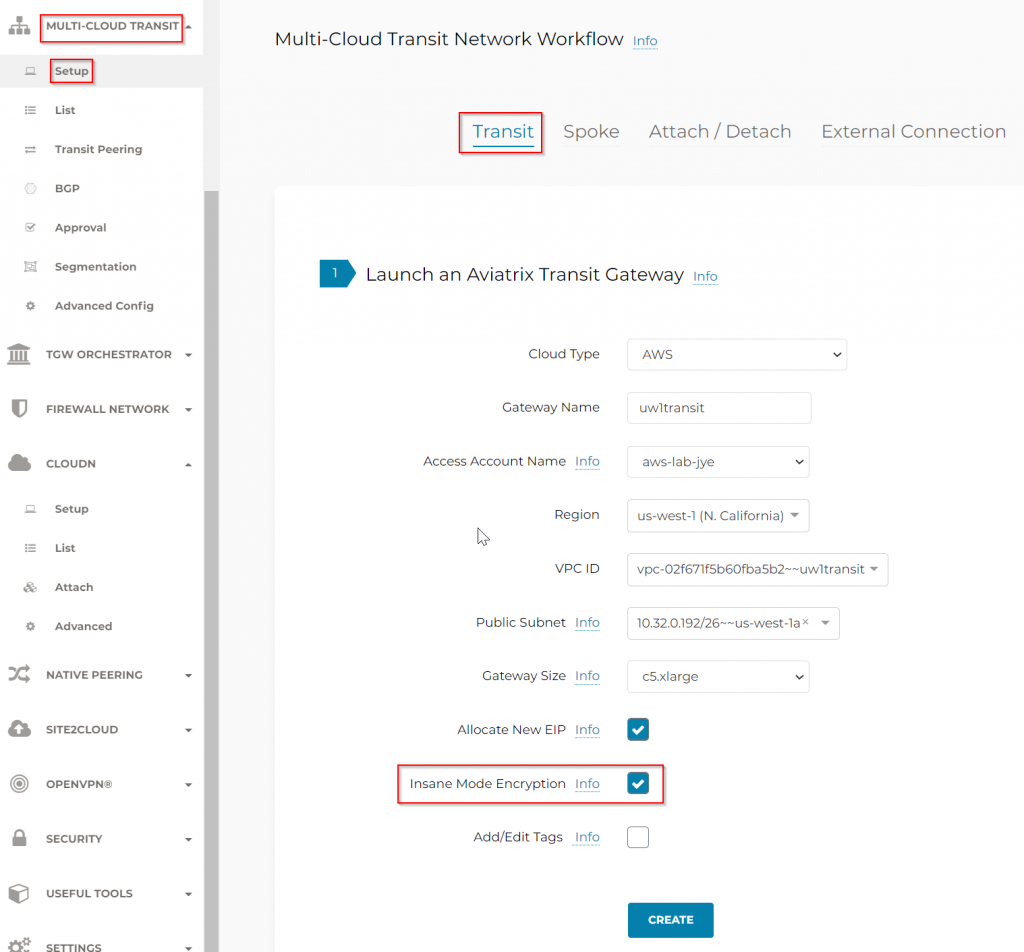

Note: To obtain the line rate of private connectivity such as Direct Connect (2.5Gbps and above), Insane Mode Encryption, aka High Performance Encryption, must be enabled when the Aviatrix Transit Gateway is created. If it wasn’t enabled, the Transit Gateway must be recreated, which will result in downtime.

Steps to create the connection

Build underlay connectivity

Follow the exact steps described in Option 2 to build underlay connectivity.

In the end, validate:

- BGP between the router/firewall and the DXGW or VGW via the private VIF is up.

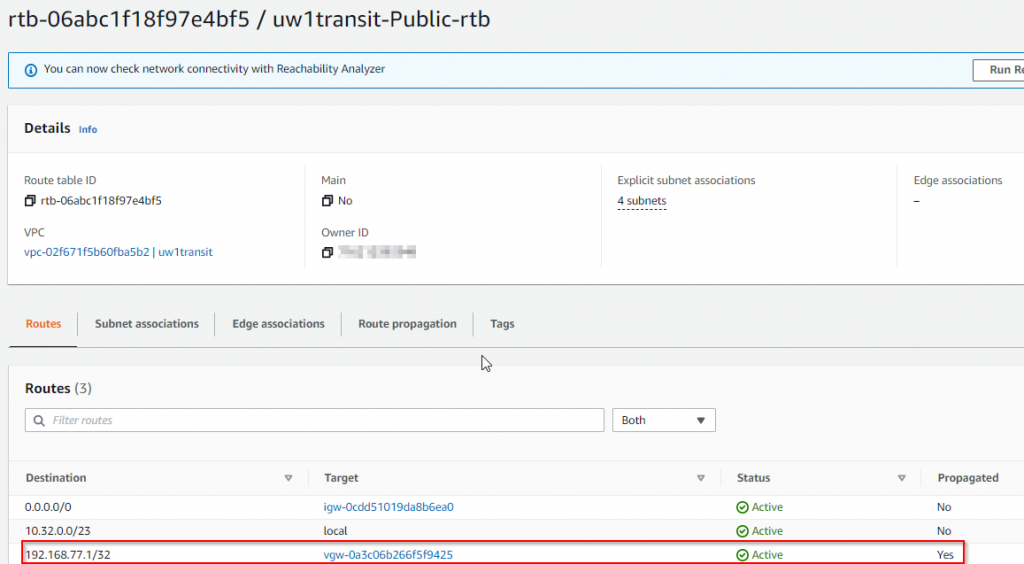

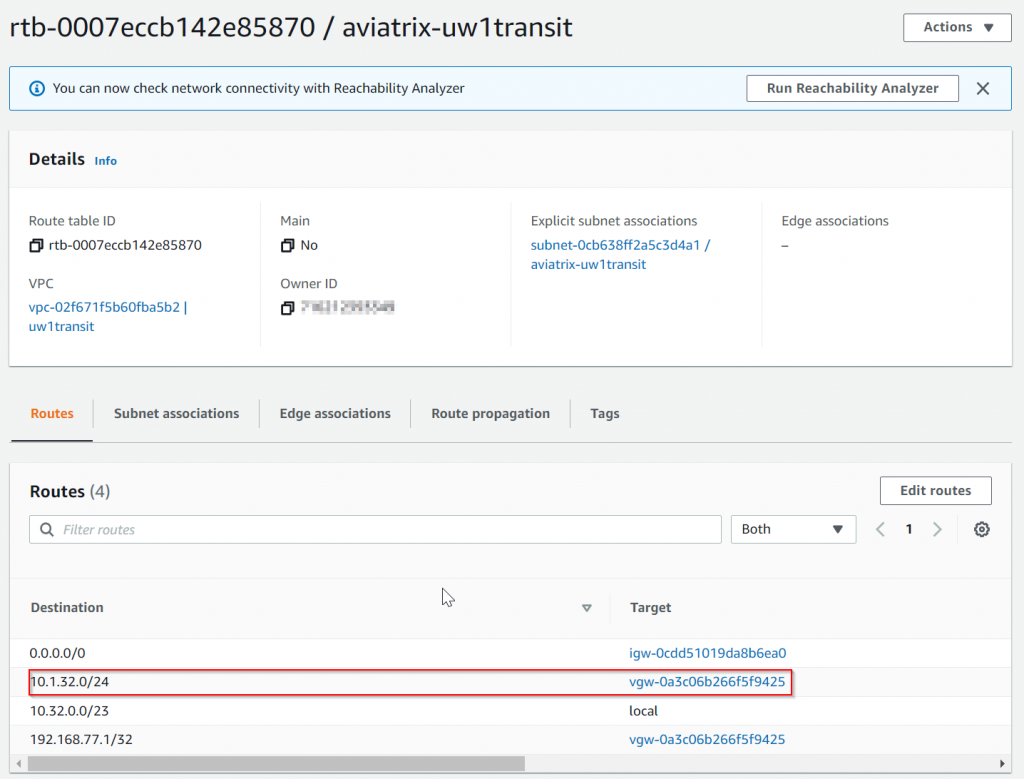

- The router/firewall loopback address (192.168.77.1 in this example) is propagated to the Aviatrix Transit VPC route table

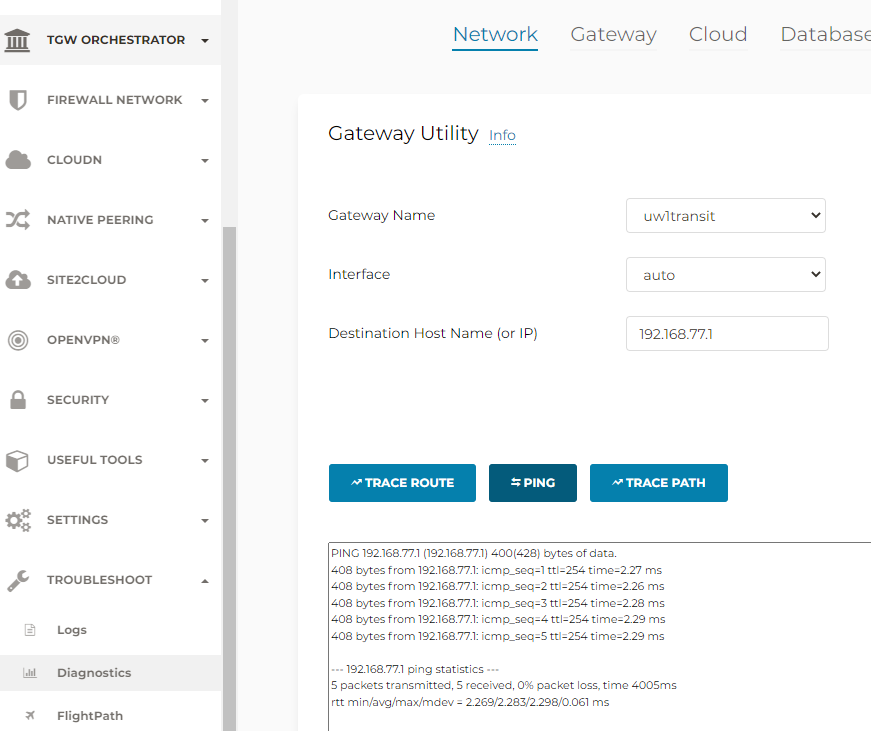

- Ping from the Aviatrix Transit Gateway to the on-premises router/firewall loopback address to confirm connectivity.

Build overlay connectivity

In this part we will follow CloudN’s workflow. CloudN is a physical server preconfigured by Aviatrix and shipped to the customer. The newer Edge 1.0 and Edge 2.0 come in a virtual form factor and will be discussed in my future blogs.

- Log in to the CloudN device itself.

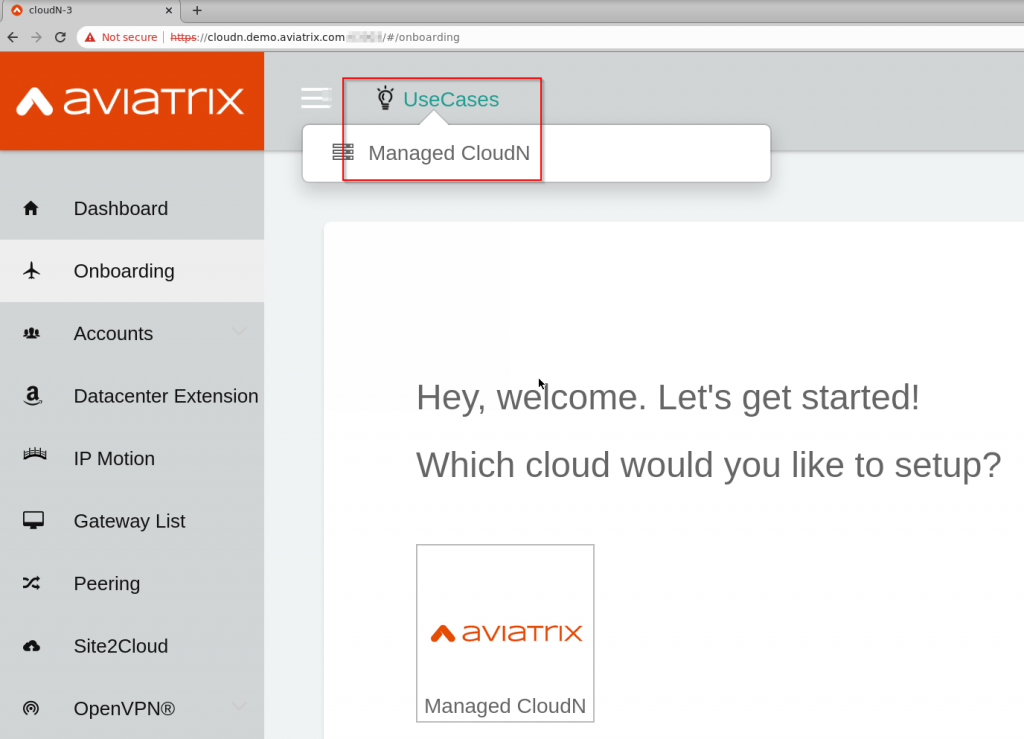

- UseCases -> Managed CloudN

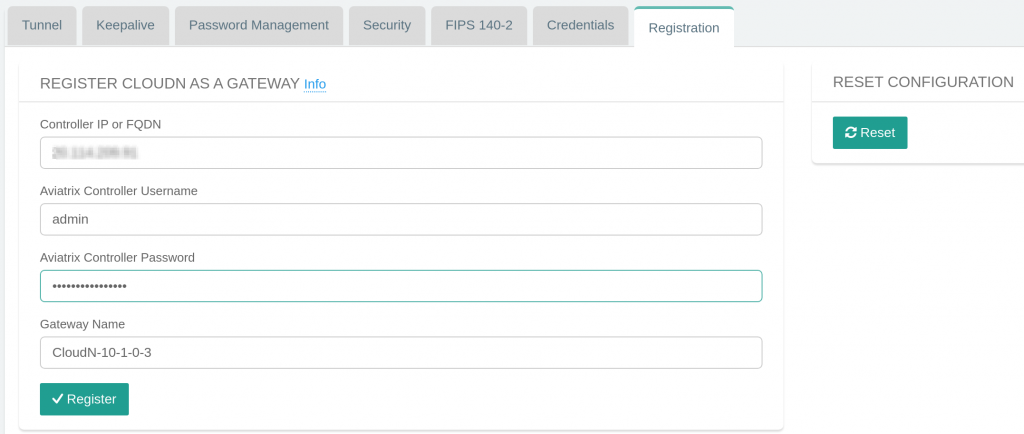

- Provide Aviatrix Controller’s information to register the CloudN

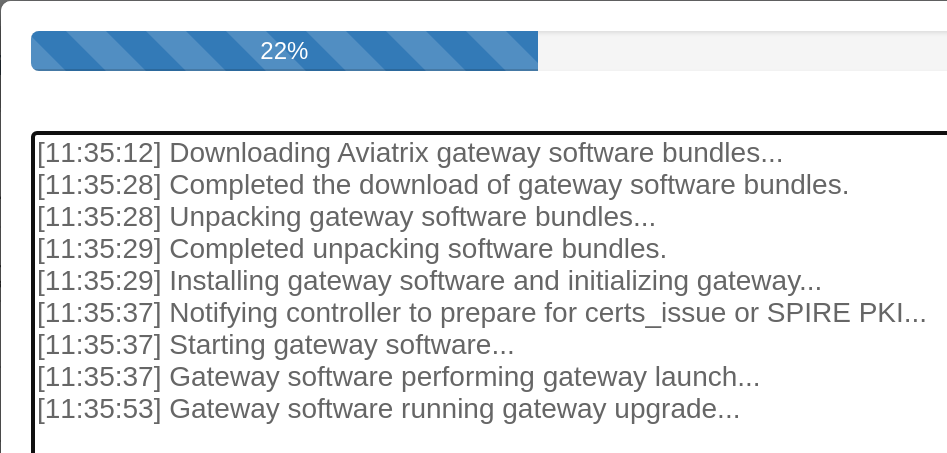

- CloudN performs the necessary preparation to register with the Aviatrix Controller

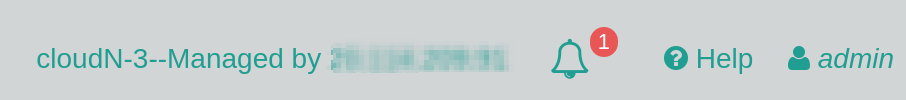

- Upon registration, the top right corner of the CloudN UI shows that this CloudN is managed by the Aviatrix Controller you specified

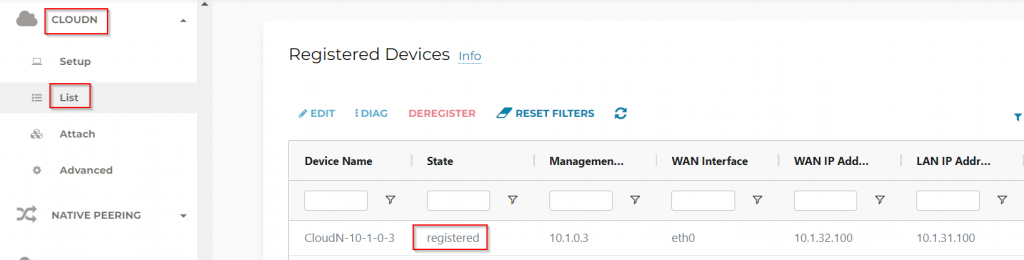

- In Aviatrix Controller -> CloudN -> List, you should see that the CloudN is registered

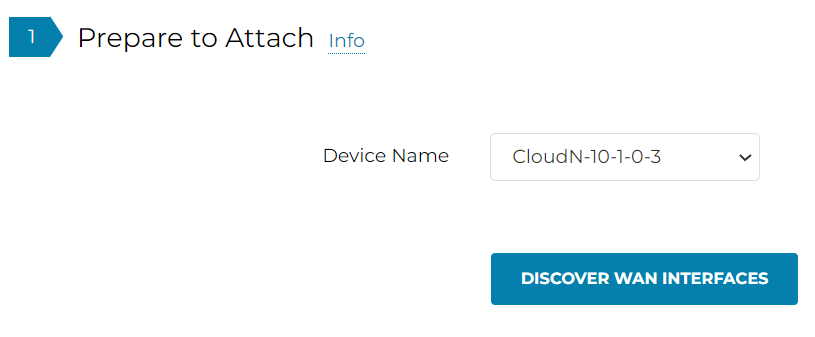

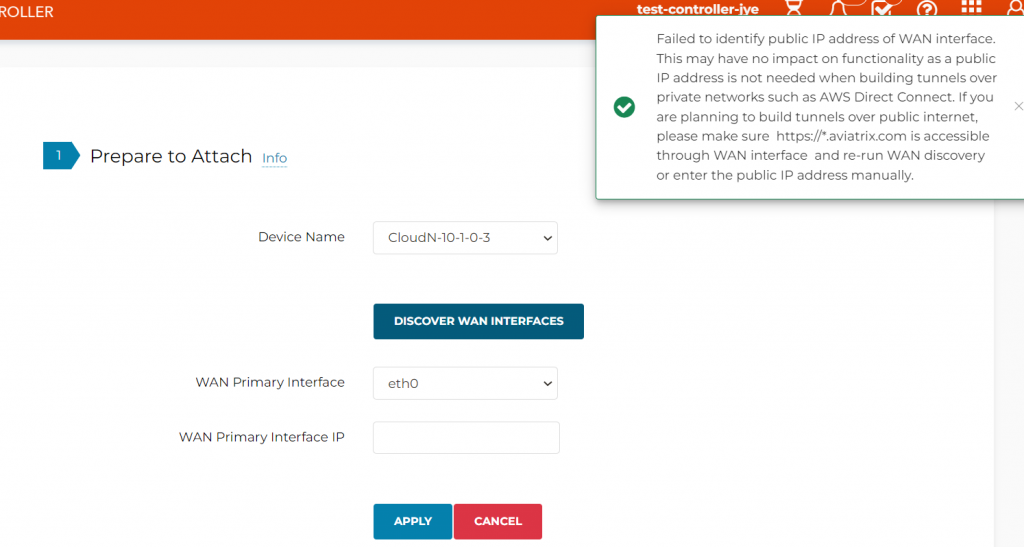

- Detect the CloudN WAN IP address if you are building tunnels over the Internet. This step is optional if you are using a private underlay.

- If you are building tunnels over the Internet and the WAN IP detection fails, you may enter it here manually. In the following example we skip this step, as we are building the tunnels over Direct Connect.

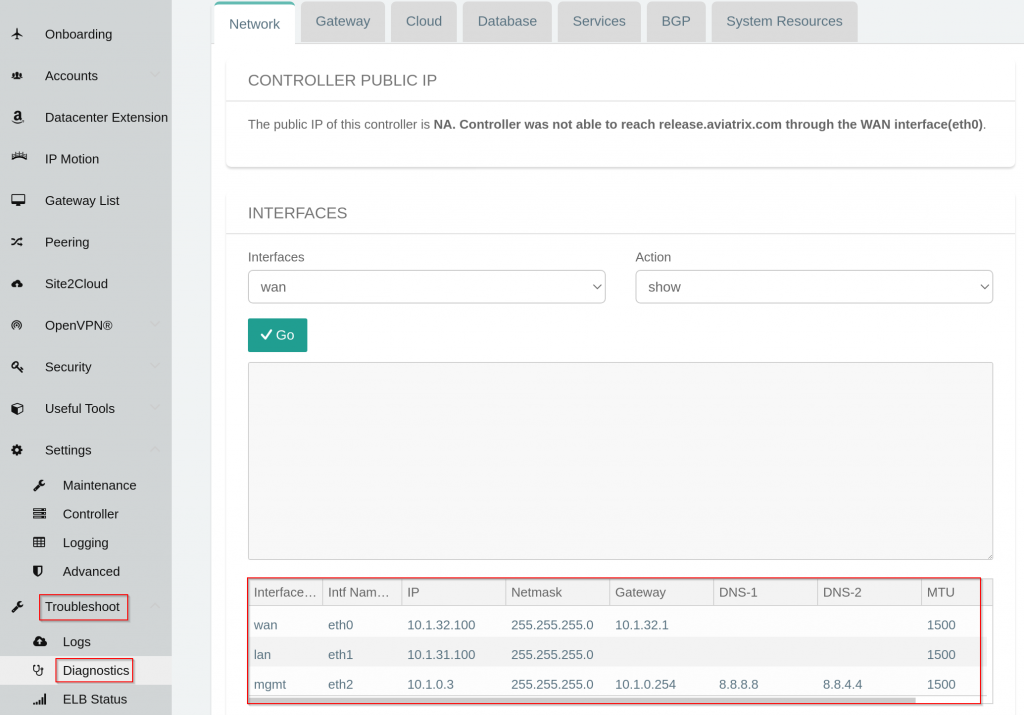

- Review CloudN’s interface configuration. Note that its LAN IP is 10.1.31.100 and its WAN IP is 10.1.32.100

- Review the LAN router configuration. Its LAN interface has the IP 10.1.31.1

interface GigabitEthernet0/0/1.31

description "cloudN LAN"

encapsulation dot1Q 31

ip address 10.1.31.1 255.255.255.0

- The router has a BGP neighbor set up with CloudN’s LAN interface. Note that CloudN will be assigned ASN 65003

router bgp 65000

neighbor 10.1.31.100 remote-as 65003

neighbor 10.1.31.100 description cloudN

- Important: CloudN uses its WAN port to establish IPSec tunnels with the Aviatrix Transit Gateways. In the previous blog (Option 2), we had an ip prefix-list filter that advertised only the loopback to the Aviatrix Transit VPC. We need to include the WAN subnet in the advertisement as well:

ip prefix-list Router-to-DXGW description Advertise Loopback to be underlay

ip prefix-list Router-to-DXGW seq 10 permit 192.168.77.1/32

ip prefix-list Router-to-DXGW seq 20 permit 10.1.32.0/24

- Confirm that the WAN route has been propagated to the Aviatrix Transit VPC route table

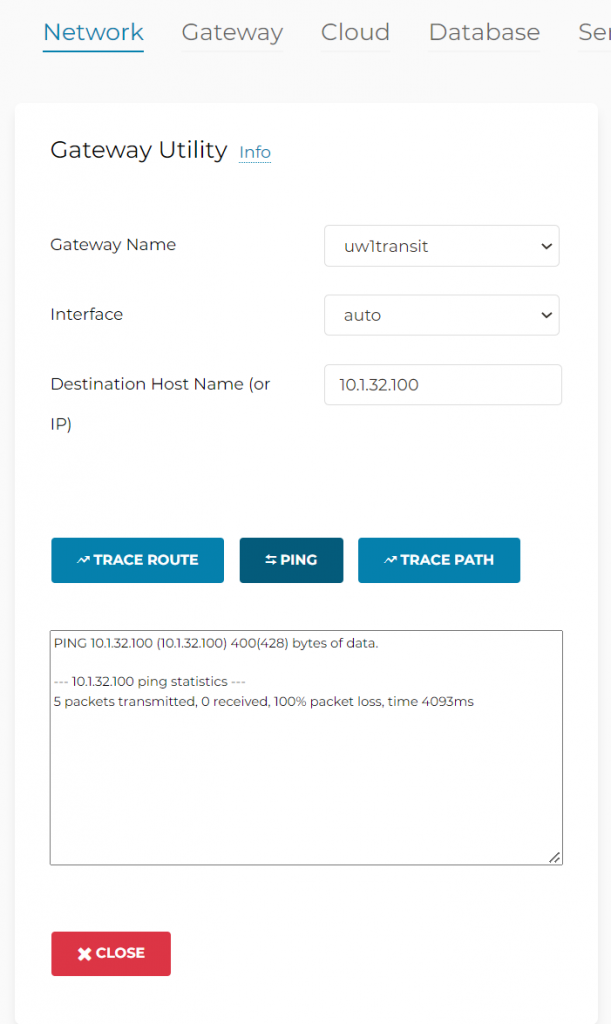
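As a quick sanity check on the prefix-list above, here is a minimal Python sketch that tests whether a given address is covered by one of the advertised prefixes. It only models address coverage, not Cisco prefix-list matching semantics, and the helper name `covered_by_advertisement` is my own, not part of any Aviatrix or Cisco tooling.

```python
import ipaddress

# Prefixes permitted by the Router-to-DXGW prefix-list in this post.
ADVERTISED = [
    ipaddress.ip_network("192.168.77.1/32"),  # router/firewall loopback
    ipaddress.ip_network("10.1.32.0/24"),     # CloudN WAN subnet
]


def covered_by_advertisement(ip: str) -> bool:
    """Return True if ip falls inside any advertised prefix (hypothetical helper)."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in ADVERTISED)


if __name__ == "__main__":
    # CloudN WAN IP: must be reachable from the Transit VPC over the underlay.
    print("WAN 10.1.32.100 covered:", covered_by_advertisement("10.1.32.100"))
    # CloudN LAN IP: intentionally not advertised over the underlay.
    print("LAN 10.1.31.100 covered:", covered_by_advertisement("10.1.31.100"))
```

If the WAN IP were not covered, the Transit Gateways would have no underlay path to it and the IPSec tunnels could not come up.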

- You may be tempted to ping from the Aviatrix Transit Gateway to the CloudN WAN port to confirm connectivity at this point, but it will not work

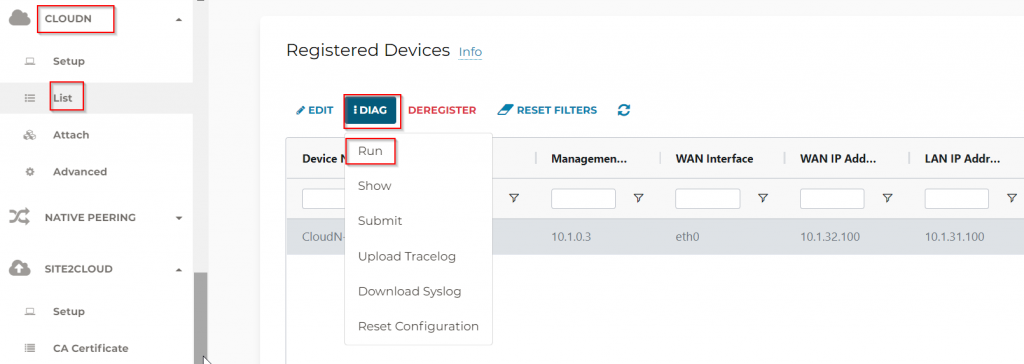

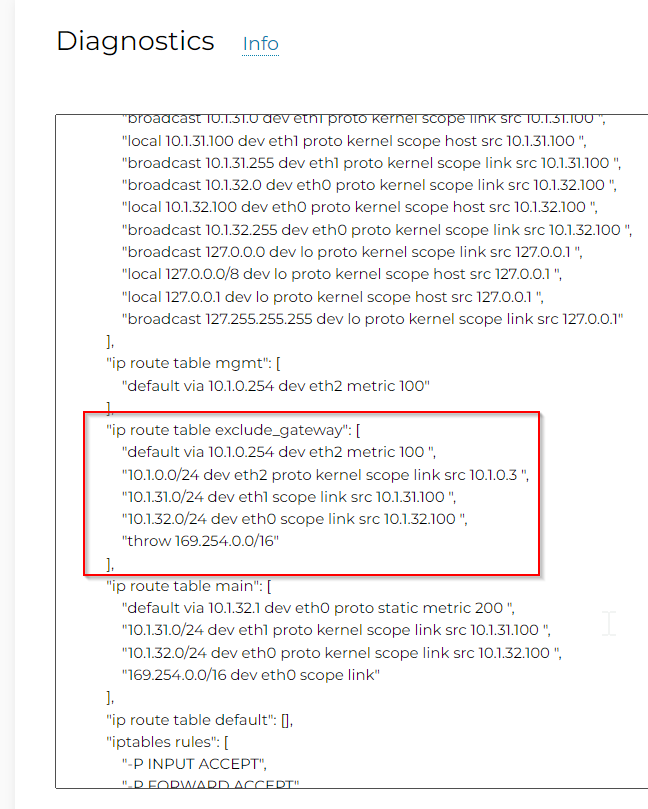

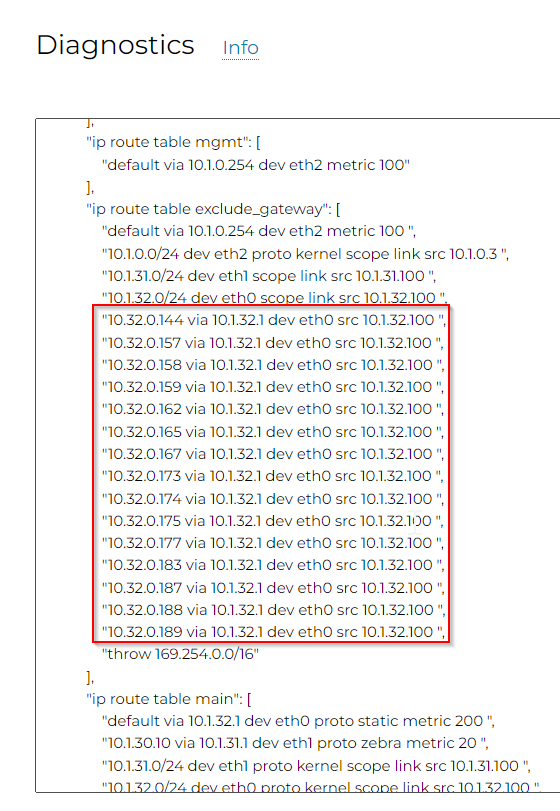

- This is because CloudN doesn’t have a return route to the Aviatrix Transit Gateway’s LAN IP. To demonstrate this, go to Controller -> CloudN -> List -> Registered Devices -> Select CloudN -> Diag -> Run. After it completes, follow the same path and click on Diag -> Show

- Any traffic initiated from the CloudN device itself is subject to the exclude_gateway route table

- Note that the Aviatrix Transit VPC CIDR in my lab is 10.32.0.0/23, and the exclude_gateway route table has no entry from this range.

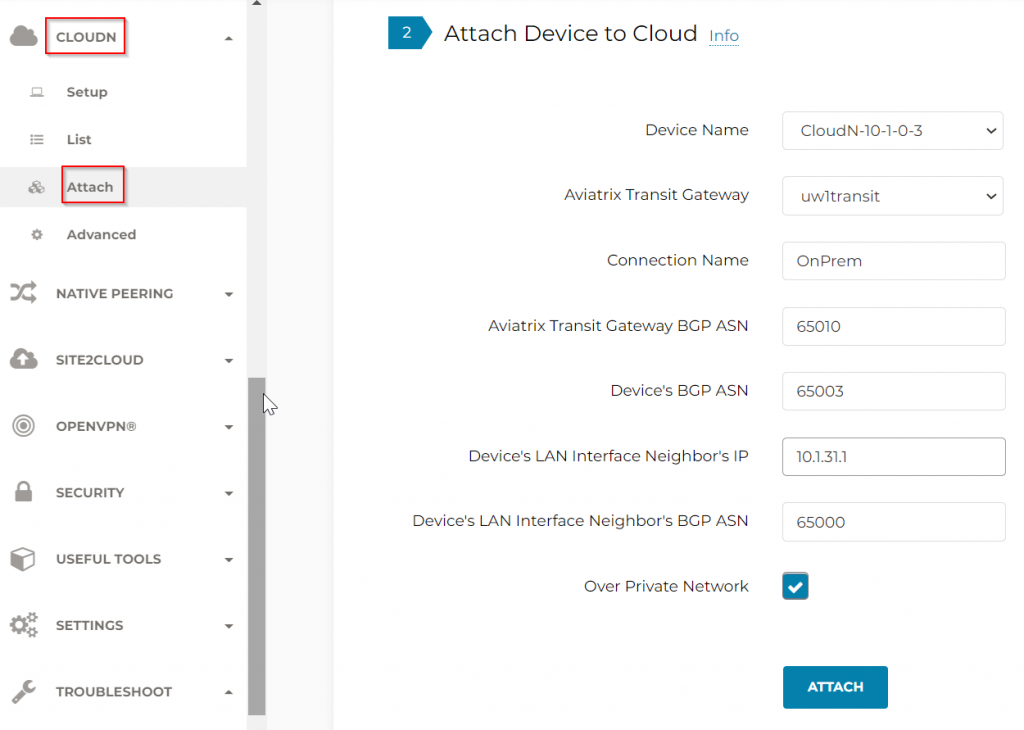

- Let’s see how Aviatrix handles this. Go to Aviatrix Controller -> CloudN -> Attach. Note that Over Private Network is selected, as we are using Direct Connect as the underlay.

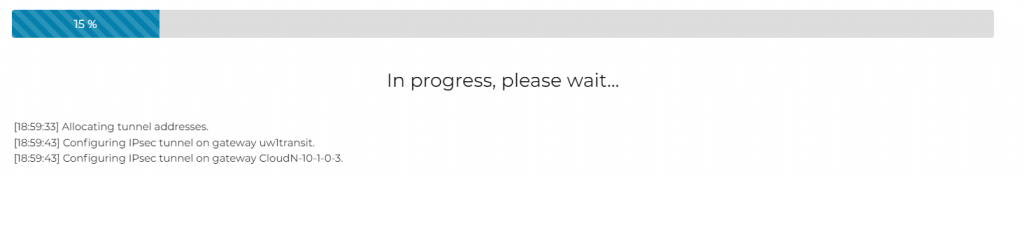

- Wait for the attachment to complete

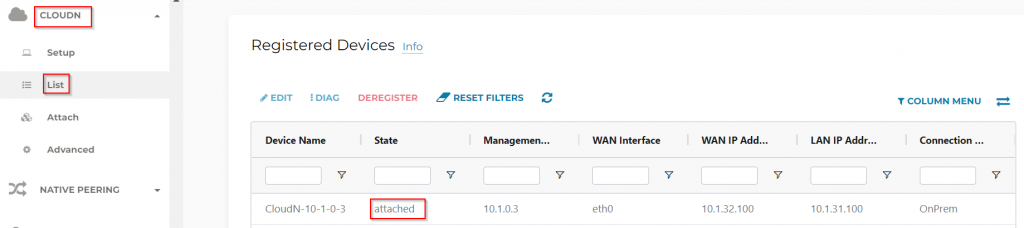

- In CloudN -> List -> Registered Devices, the CloudN status should now be attached

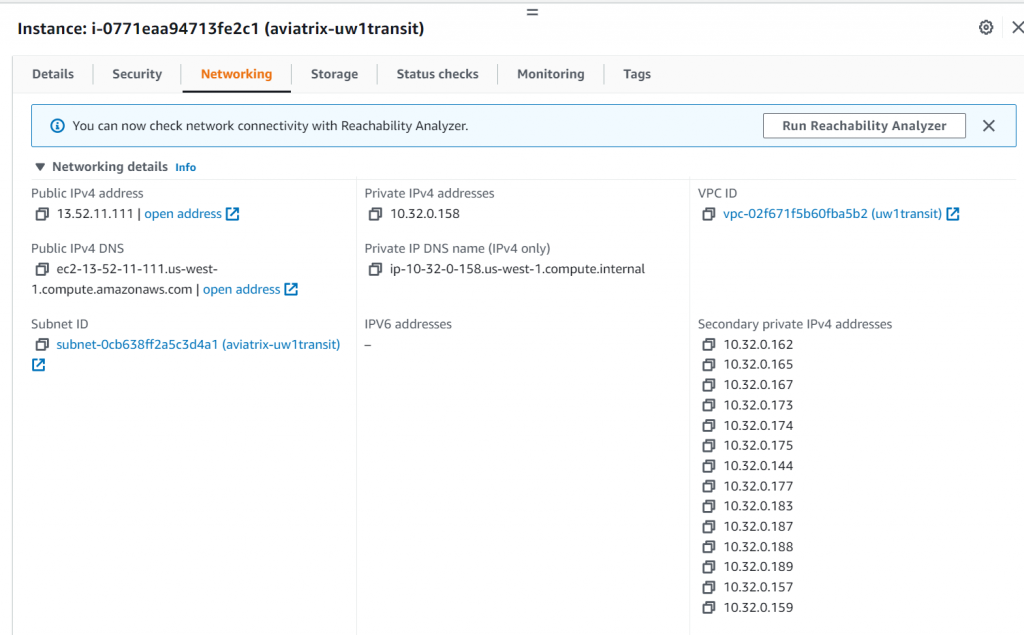

- Go to Controller -> CloudN -> List -> Registered Devices -> Select CloudN -> Diag -> Run. After it completes, follow the same path and click on Show. We can now see that many 10.32.0.x IPs have been added to the exclude_gateway route table

- These IPs are the Transit Gateways’ LAN-side primary private IPs plus secondary private IPs. The Aviatrix Controller knows these IPs because it manages the Aviatrix Transit Gateways, so it makes sense to include only these IPs in the exclude_gateway route table in order to build the required IPSec tunnels.

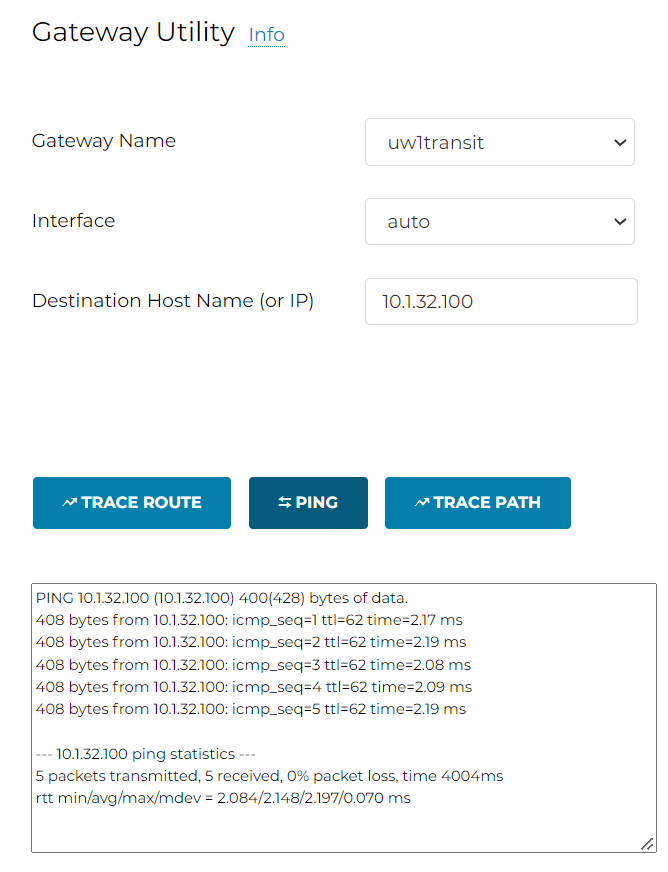
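The before-and-after behaviour of the exclude_gateway route table can be illustrated with a small Python sketch. The table contents below are hypothetical placeholders (only the 10.32.0.0/23 Transit VPC CIDR comes from my lab), and `has_route` is an illustrative helper, not an Aviatrix API.

```python
import ipaddress


def has_route(table, destination):
    """Return True if any prefix in table covers destination (hypothetical helper)."""
    dest = ipaddress.ip_address(destination)
    return any(dest in ipaddress.ip_network(prefix) for prefix in table)


# Hypothetical route table before attachment: nothing from 10.32.0.0/23.
before_attach = ["10.1.32.0/24"]

# Hypothetical /32 host routes for Transit Gateway LAN-side IPs,
# standing in for the entries the Controller adds during attachment.
after_attach = before_attach + ["10.32.0.10/32", "10.32.0.11/32"]

transit_gw_lan_ip = "10.32.0.10"  # assumed example address inside 10.32.0.0/23

if __name__ == "__main__":
    print("return route before attach:", has_route(before_attach, transit_gw_lan_ip))
    print("return route after attach: ", has_route(after_attach, transit_gw_lan_ip))
```

Without a matching entry, replies from CloudN to the Transit Gateway have no return path, which is why the ping only succeeds after attachment.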

- Now if we ping from the Aviatrix Transit Gateway to the CloudN WAN port, it works

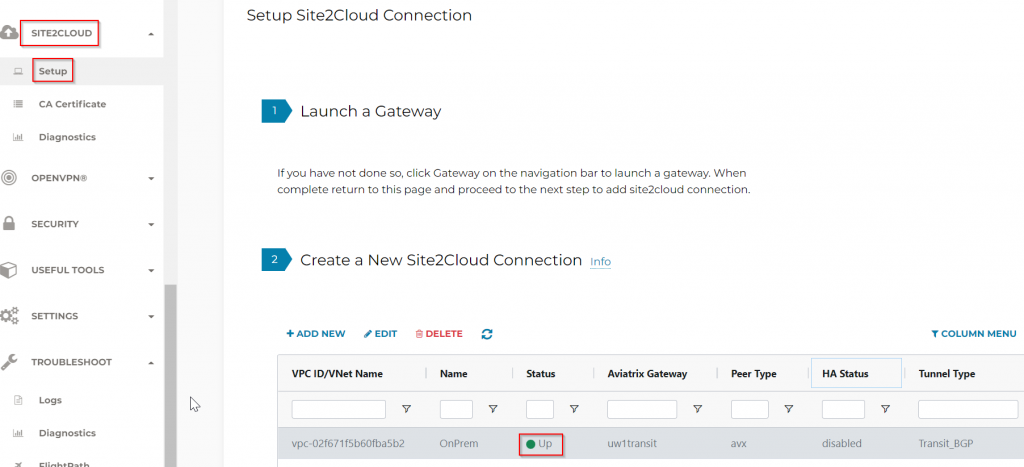

- The Site to Cloud connection should be up

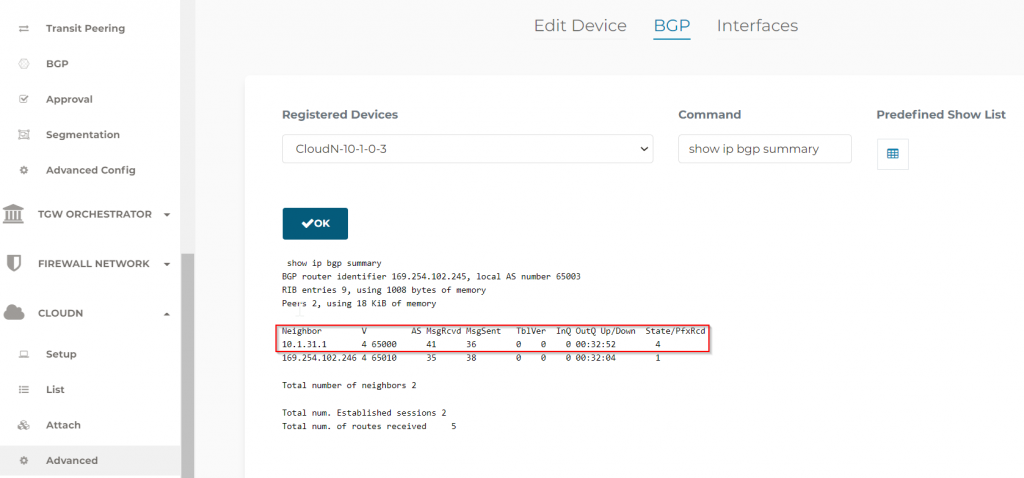

- In Controller -> CloudN -> Advanced -> BGP, we can confirm that the BGP session with the LAN-side router is up.

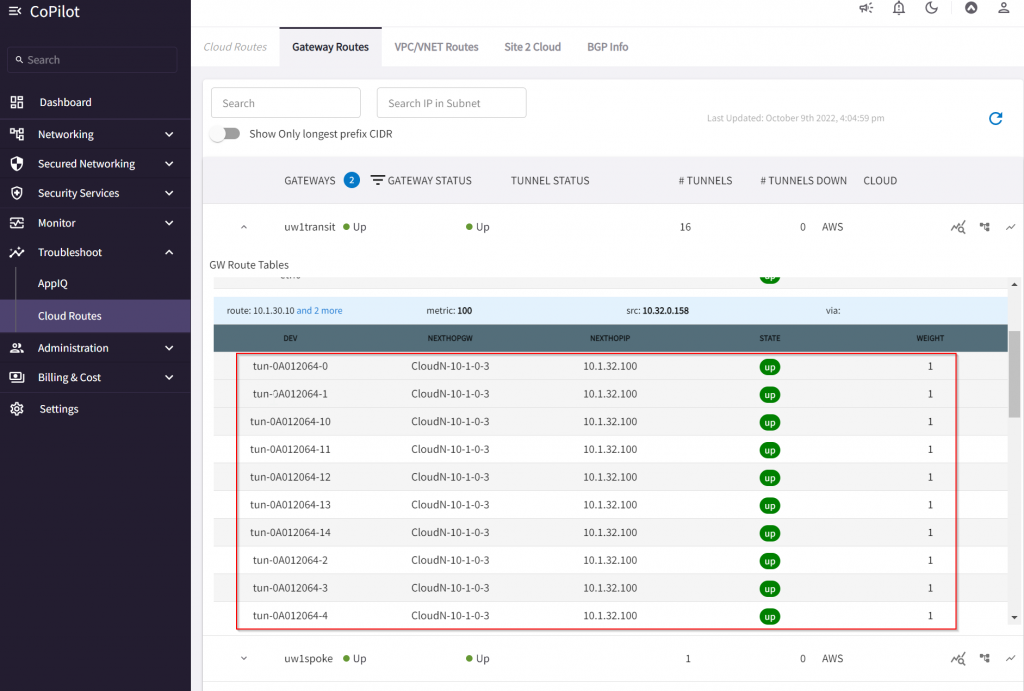

- In CoPilot -> Cloud Routes, we can see multiple tunnels created between CloudN and the Transit.

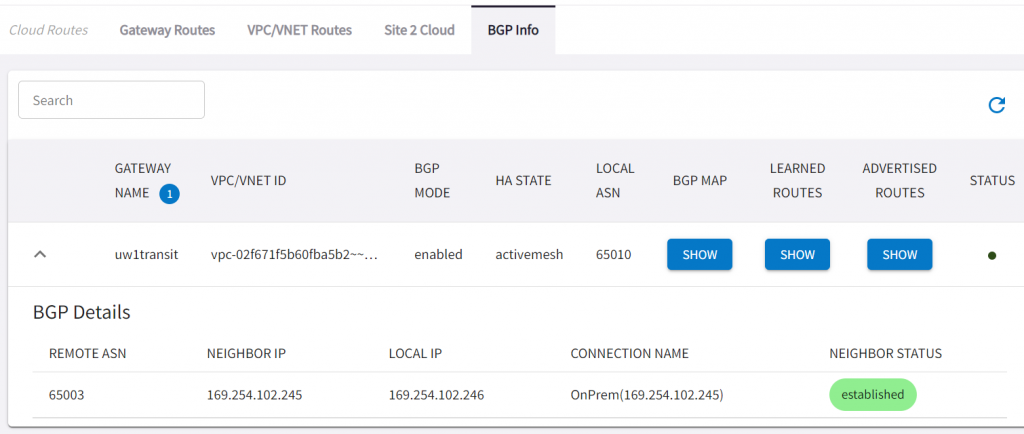

- In CoPilot -> Cloud Route -> BGP Info, we can see that the BGP session between CloudN and Aviatrix Transit is Established via the S2C connection created when CloudN was attached to the Aviatrix Transit.

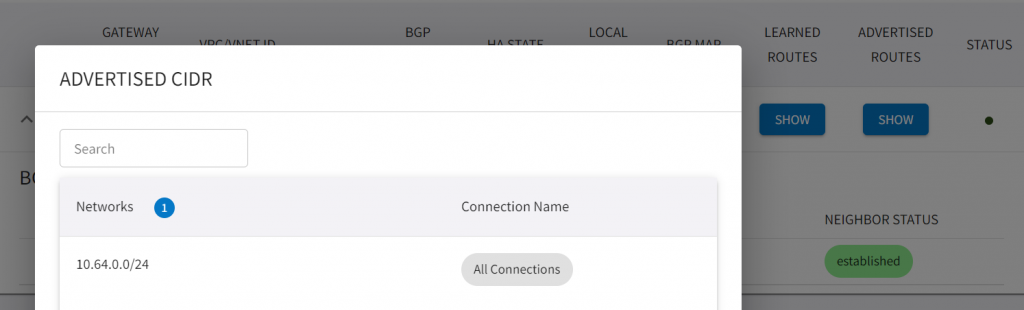

- In Advertised CIDR, we can see that the test spoke’s CIDR 10.64.0.0/24 has been advertised towards CloudN

- We can confirm this from the LAN-side router

#show ip bgp neighbors 10.1.31.100 received-routes

BGP table version is 9, local router ID is 192.168.77.1

Status codes: s suppressed, d damped, h history, * valid, > best, i - internal,

r RIB-failure, S Stale, m multipath, b backup-path, f RT-Filter,

x best-external, a additional-path, c RIB-compressed,

t secondary path, L long-lived-stale,

Origin codes: i - IGP, e - EGP, ? - incomplete

RPKI validation codes: V valid, I invalid, N Not found

Network Next Hop Metric LocPrf Weight Path

*> 10.64.0.0/24 10.1.31.100 0 65003 65010 i

Total number of prefixes 1

After thoughts

Aviatrix CloudN makes it really easy to:

- Automatically create and maintain multiple IPSec tunnels towards Aviatrix Transit Gateways over private connectivity.

- Provide point-to-point encryption at line rate from on-premises to the cloud.

- Overcome CSP route prefix limits

- Connect to multiple Aviatrix Transits via a single CloudN; traffic between CSPs can hairpin via CloudN.

Some considerations:

- CloudN is capable of pushing an aggregate throughput of 25Gbps per device. However, each IPSec tunnel is still limited to 1.25Gbps, so an application must be able to run multiple sessions to benefit from the aggregate throughput.

- CloudN still depends on a third-party router/firewall to provide underlay connectivity, and the connectivity is treated as an external Site to Cloud connection.

- Besides private connections such as Direct Connect, there are many other use cases, such as remote offices, branches and retail locations, that require secure connectivity to the cloud over the Internet

- Edge 1.0 is a virtual form factor of CloudN with zero-touch provisioning capability. It is still treated as an external Site to Cloud BGP device

- Edge 2.0 acts like an Aviatrix Spoke Gateway and only exchanges BGP with on-premises devices. It will have many of the features that an Aviatrix Spoke has. Think of it as pushing the cloud boundary towards on-premises, in either a virtual or a physical form factor. Stay tuned for my future blogs on these new developments.
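To make the first consideration concrete, here is a rough Python sketch of the throughput arithmetic. The 1.25Gbps and 25Gbps figures come from this post; the CRC-based flow hashing is purely an assumption for illustration and is not how Aviatrix actually distributes flows across tunnels.

```python
import math
import zlib

PER_TUNNEL_GBPS = 1.25  # per-IPSec-tunnel limit quoted in this post
DEVICE_GBPS = 25        # CloudN aggregate throughput quoted in this post


def tunnels_needed(target_gbps):
    """Minimum number of tunnels needed to carry target_gbps in aggregate."""
    return math.ceil(target_gbps / PER_TUNNEL_GBPS)


def pick_tunnel(flow, tunnel_count):
    """Pin a flow (any tuple of fields) to one tunnel.

    Assumption: a simple CRC32 hash, used only to show that one flow
    always lands on the same tunnel.
    """
    key = "|".join(str(field) for field in flow).encode()
    return zlib.crc32(key) % tunnel_count


def max_throughput_gbps(flows, tunnel_count):
    """Upper bound on throughput: distinct tunnels in use times the per-tunnel limit."""
    used = {pick_tunnel(flow, tunnel_count) for flow in flows}
    return len(used) * PER_TUNNEL_GBPS


if __name__ == "__main__":
    count = tunnels_needed(DEVICE_GBPS)
    print("tunnels needed for 25Gbps:", count)
    one_flow = [("10.1.31.50", "10.64.0.10", 6, 40000, 443)]
    print("ceiling for a single flow:", max_throughput_gbps(one_flow, count), "Gbps")
```

However many tunnels exist, a single session is capped by one tunnel, which is why the application needs multiple parallel sessions to approach the aggregate rate.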