When you connect a third-party Network Virtual Appliance (NVA), such as a firewall, SD-WAN appliance, load balancer, router, or proxy, into Azure, you need to redirect network traffic towards these NVAs for processing. In the past, this often meant creating and maintaining route table entries by hand: different entries had to be added in the source, the destination, the NVAs, and potentially at points in the middle of the data path.

In Azure, these static entries are called user-defined routes (UDRs), where you specify the destination IP range, the next hop type, and the next hop IP address. A simple UDR use case is shown below, where two vNets are connected via an NVA in a hub vNet. Now imagine you have hundreds of vNets and your workloads constantly change: these manual entries are error-prone, inflexible, and very difficult to troubleshoot. While the cloud promises agility and flexibility, these manual entries are counterintuitive and slow everything down.

Humans are not designed to process IP addresses, subnet masks, and destination addresses efficiently at large scale. We should leave these tasks to computer systems that handle routing entries automatically. If you have been following Aviatrix for a while, you will know that Aviatrix handles these tasks extremely well in multi-cloud environments. It’s very nice to see that Microsoft is also listening to customers’ needs and has developed Azure Route Server to help manage routes with NVAs.

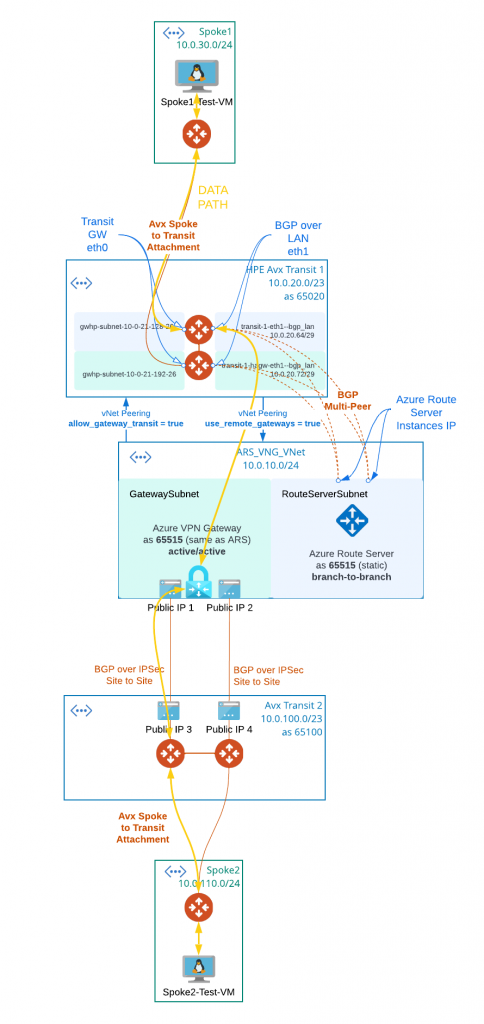
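The following minimal Python sketch puts a rough number on the manual UDR burden described above. It assumes a simple hub-and-spoke layout where every spoke’s route table needs one UDR per other spoke, pointing at the hub NVA. The addressing and the NVA IP 10.0.0.4 are hypothetical. Real designs often need extra entries as well, for example on the NVA subnet itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Udr:
    """One user-defined route: destination prefix, next hop type, next hop IP."""
    address_prefix: str
    next_hop_type: str
    next_hop_ip: str


def spoke_udrs(spoke_cidrs: list[str], nva_ip: str) -> dict[str, list[Udr]]:
    """Build one route table per spoke with a UDR to every *other* spoke via the NVA."""
    return {
        own: [Udr(other, "VirtualAppliance", nva_ip) for other in spoke_cidrs if other != own]
        for own in spoke_cidrs
    }


def total_udrs(tables: dict[str, list[Udr]]) -> int:
    """Count every UDR across all spoke route tables."""
    return sum(len(routes) for routes in tables.values())


if __name__ == "__main__":
    # Hypothetical addressing: spoke i uses 10.i.0.0/16, the hub NVA sits at 10.0.0.4.
    for n in (2, 10, 100):
        cidrs = [f"10.{i}.0.0/16" for i in range(1, n + 1)]
        print(f"{n} spokes -> {total_udrs(spoke_udrs(cidrs, '10.0.0.4'))} UDRs")
```

The count grows as n * (n - 1). Two spokes need 2 UDRs, 10 need 90, and 100 need 9,900, and each one has to be revisited by hand whenever a prefix changes.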

The introduction of Azure Route Server has enabled a lot of interesting new designs. In this blog post, I will walk you through a lab that simulates ExpressRoute connectivity from on-premises and integrates it with Aviatrix using Azure Route Server and Azure VPN Gateway.

Here is the Terraform module that creates the following lab environment:

Steps taken:

- Prepare Aviatrix Transit and Spoke in the cloud

- Created Aviatrix Transit1

- Transit1 must have the BGP over LAN feature enabled at creation time.

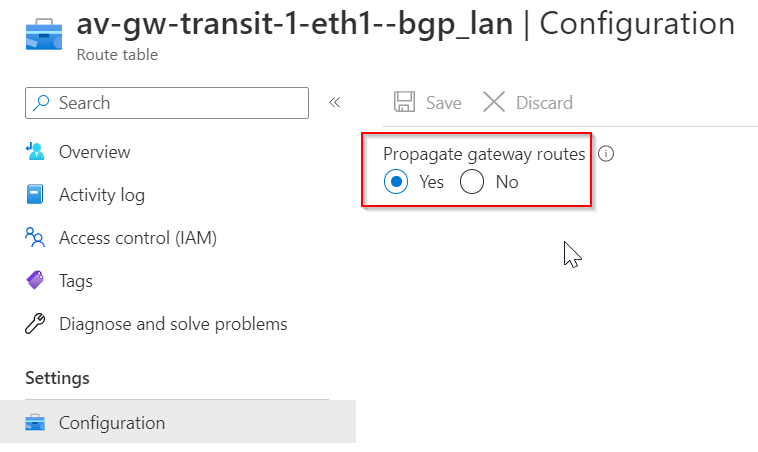

- Transit1 must have High Performance Encryption (HPE) enabled to support BGP multi-peer integration with Azure Route Server. The expectation is that ExpressRoute will be used with this type of integration. When HPE is enabled, Aviatrix enables gateway route propagation only on the route tables attached to the BGP over LAN subnets. All other route tables in the Transit1 vNet have gateway route propagation disabled.

- Created Aviatrix Spoke1 to simulate a workload in Azure

- A Linux VM is deployed in Spoke1

- Attached Spoke1 to Transit1


- Prepare Aviatrix Transit and Spoke to simulate the on-premises environment

- Created Aviatrix Transit2

- Transit2 simulates an on-premises network connecting to Azure via Azure VPN Gateway

- Created Aviatrix Spoke2 to simulate a workload in the on-premises environment

- A Linux VM is deployed in Spoke2

- Attached Spoke2 to Transit2


- Prepare Virtual Network ARS_VNG_VNet

- A RouteServerSubnet is created for Azure Route Server (min /27)

- Azure Route Server (ARS) is deployed in ARS_VNG_VNet/RouteServerSubnet

- Azure Route Server has a fixed ASN of 65515 (as of today, this cannot be changed)

- Azure Route Server has branch-to-branch enabled so that it exchanges routes with Azure VPN Gateway over iBGP

- A GatewaySubnet is created for Azure VPN Gateway (min /27)

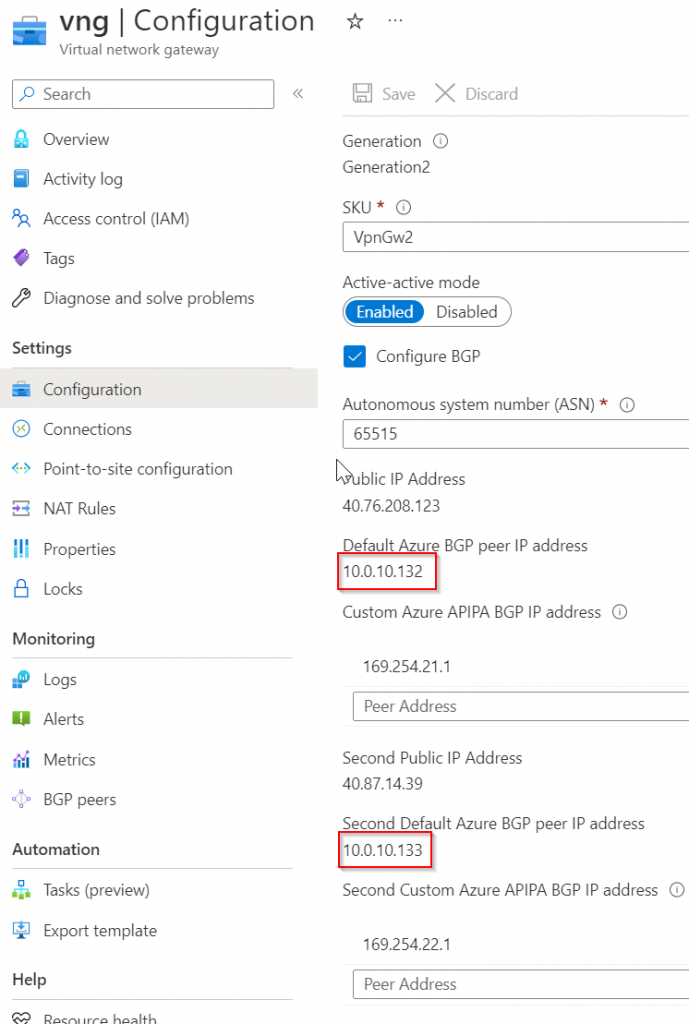
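Both special subnets above must be at least /27. The following minimal Python sketch checks a planned layout before deployment. The subnet names and the /27 minimum come from the steps above. The vNet range 10.0.10.0/24 and the sample subnets are hypothetical, and check_subnet is an illustrative helper rather than part of any Azure tooling.

```python
import ipaddress

# Minimum subnet sizes as stated in the lab steps: /27 or larger for both.
MIN_PREFIXLEN = {"RouteServerSubnet": 27, "GatewaySubnet": 27}


def check_subnet(vnet_cidr: str, name: str, subnet_cidr: str) -> list[str]:
    """Return a list of problems; an empty list means the subnet passes these checks."""
    problems = []
    vnet = ipaddress.ip_network(vnet_cidr)
    subnet = ipaddress.ip_network(subnet_cidr)
    if not subnet.subnet_of(vnet):
        problems.append(f"{name} {subnet} is outside vNet {vnet}")
    limit = MIN_PREFIXLEN.get(name)
    # A larger prefix length means a smaller subnet, e.g. /28 is smaller than /27.
    if limit is not None and subnet.prefixlen > limit:
        problems.append(f"{name} is /{subnet.prefixlen}; needs /{limit} or larger")
    return problems


if __name__ == "__main__":
    vnet = "10.0.10.0/24"  # hypothetical ARS_VNG_VNet address space
    print(check_subnet(vnet, "RouteServerSubnet", "10.0.10.0/27"))  # []
    print(check_subnet(vnet, "GatewaySubnet", "10.0.10.128/28"))  # ['GatewaySubnet is /28; needs /27 or larger']
```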

- Azure VPN Gateway (VNG) is deployed in ARS_VNG_VNet/GatewaySubnet with two public IPs attached. Azure VPN Gateway uses the same ASN, 65515, so it forms an iBGP session with Azure Route Server

- Azure VPN Gateway has the active-active feature enabled


- Prepare vNet peering between ARS_VNG_VNet and Transit1 vNet

- The vNet peering creates the underlay that the Aviatrix Transit Gateway needs to reach Azure Route Server

- From the Transit1 vNet, created a vNet peering to ARS_VNG_VNet with use_remote_gateways enabled. This lets VNG propagate its learned routes to the Transit1 vNet, so the Transit1 vNet uses VNG to reach the subnets connected via VNG

- From ARS_VNG_VNet, created a vNet peering to the Transit1 vNet with allow_gateway_transit enabled.

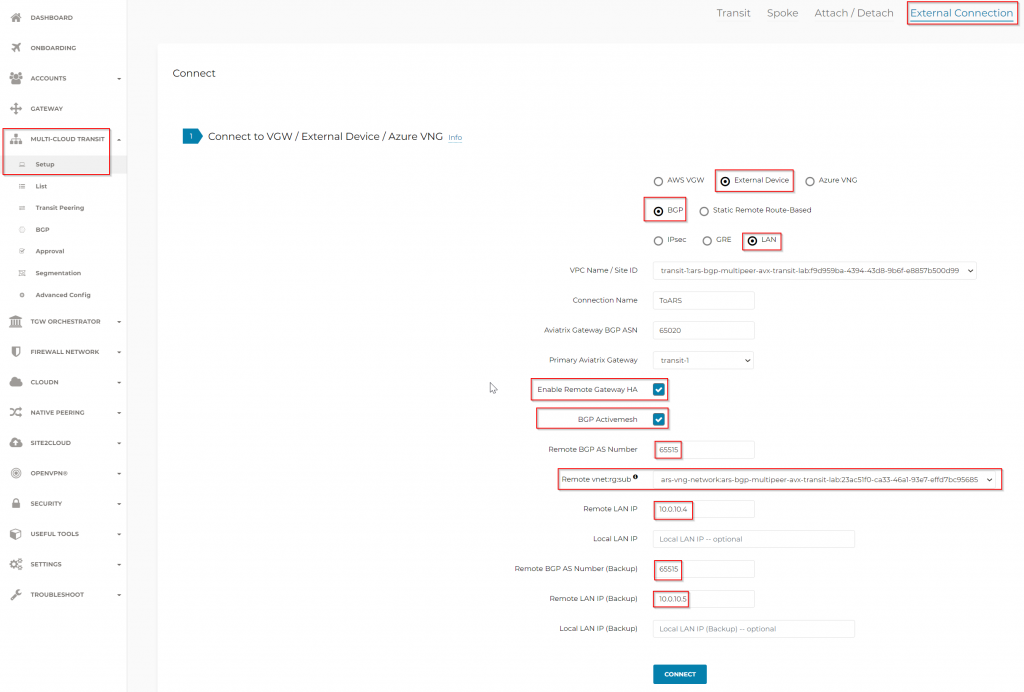
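The two peering flags only work as a pair. The following Python sketch is a simplified model, not Azure’s actual logic. It reuses the flag names from the steps above, which match the Terraform azurerm_virtual_network_peering arguments. It treats each peering direction as its own object, because Azure creates each direction as a separate resource, and answers whether the local vNet would learn routes from the remote gateway.

```python
from dataclasses import dataclass


@dataclass
class PeeringSide:
    """One direction of an Azure vNet peering (each direction is a separate resource)."""
    local_vnet: str
    remote_vnet: str
    allow_gateway_transit: bool = False
    use_remote_gateways: bool = False


def uses_remote_gateway(local_side: PeeringSide, remote_side: PeeringSide,
                        remote_has_gateway: bool) -> bool:
    """True if local_vnet would learn routes from the gateway in remote_vnet (simplified)."""
    paired = (local_side.local_vnet == remote_side.remote_vnet
              and local_side.remote_vnet == remote_side.local_vnet)
    return (paired and remote_has_gateway
            and local_side.use_remote_gateways
            and remote_side.allow_gateway_transit)


if __name__ == "__main__":
    transit_to_ars = PeeringSide("Transit1", "ARS_VNG_VNet", use_remote_gateways=True)
    ars_to_transit = PeeringSide("ARS_VNG_VNet", "Transit1", allow_gateway_transit=True)
    print(uses_remote_gateway(transit_to_ars, ars_to_transit, remote_has_gateway=True))  # True
```

If either flag is missing, or ARS_VNG_VNet has no gateway, the model returns False. In that case, the Transit1 vNet would not learn the VNG routes.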

- Create a BGP over LAN multi-peer connection between Aviatrix Transit1 and ARS

- Enable Remote Gateway HA must be selected

- BGP Activemesh must be selected

- Remote vnet:rg:sub is only available after the vNet peering is completed

- Specify the Azure Route Server ASN, 65515, and the two ARS IP addresses.

- Note: Azure Route Server acts as a route reflector. It doesn’t process any data traffic and is not in the data path.

- When branch-to-branch is enabled, Azure Route Server takes the routes learned from its BGP peering with Aviatrix Transit1 and sends them to VNG.

- When branch-to-branch is enabled, Azure Route Server takes the routes learned from VNG and sends them to Aviatrix Transit1.

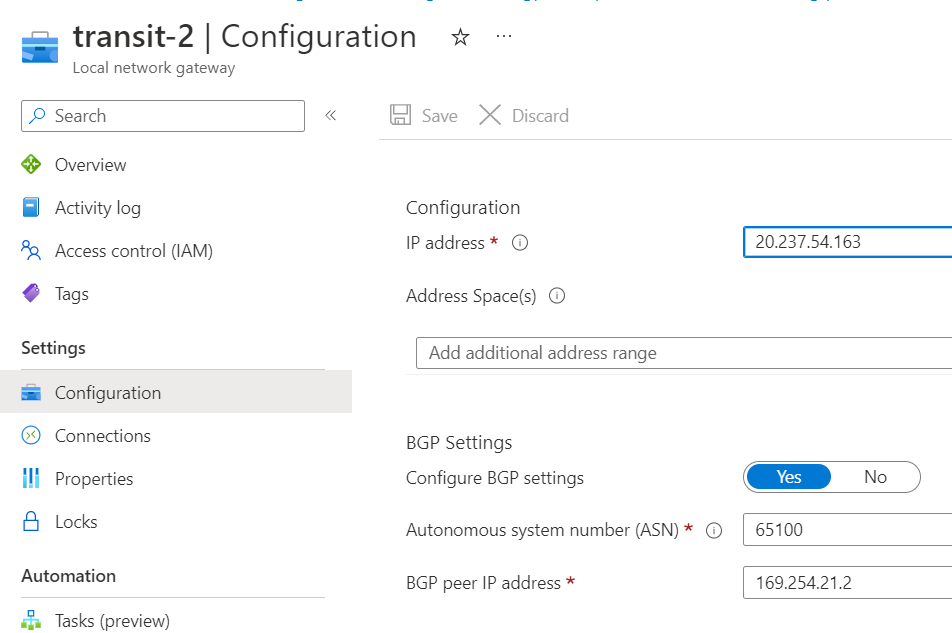
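The control-plane behavior described in the notes above can be summarized in a few lines of Python. This is a deliberately simplified model. It assumes only one NVA peer and the gateway, and it ignores the vNet address space that ARS also advertises. The ASN 65001 for Transit1 and the prefix 10.0.30.0/24 for Spoke1 are hypothetical values. The prefix is inferred from the test VM address.

```python
ARS_ASN = 65515  # Azure Route Server's fixed ASN, as stated in the lab steps


def session_type(peer_asn: int) -> str:
    """iBGP when the peer shares ARS's ASN (like the VPN gateway), otherwise eBGP."""
    return "iBGP" if peer_asn == ARS_ASN else "eBGP"


def ars_exchange(nva_routes: set[str], gateway_routes: set[str],
                 branch_to_branch: bool) -> tuple[set[str], set[str]]:
    """Simplified model of ARS relaying learned routes between an NVA and the gateway.

    Returns (routes sent to the gateway, routes sent to the NVA). Only control-plane
    routes move here; ARS never forwards data packets itself.
    """
    if not branch_to_branch:
        # Without branch-to-branch, routes learned on one side are not relayed.
        return set(), set()
    return set(nva_routes), set(gateway_routes)


if __name__ == "__main__":
    print(session_type(65515))  # iBGP: the VPN gateway uses 65515 too
    print(session_type(65001))  # eBGP: 65001 is a hypothetical Transit1 ASN
    to_vng, to_transit1 = ars_exchange({"10.0.30.0/24"}, {"10.0.110.0/24"}, True)
    print(to_vng, to_transit1)  # {'10.0.30.0/24'} {'10.0.110.0/24'}
```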

- Create BGP over IPSec between Aviatrix Transit2 and VNG

- See my previous blog post, BGP over IPSec between Aviatrix Transit and Azure VPN Gateway, for details

Validate connectivity

Terraform outputs the following:

```
spoke1-test-vm = {
  "instance_id" = "/subscriptions/<guid>/resourceGroups/ars-bgp-multipeer-avx-transit-lab/providers/Microsoft.Compute/virtualMachines/spoke1-test-vm"
  "private_ip" = "10.0.30.4"
  "public_ip" = "20.168.201.13"
  "ssh" = "ssh -i az-key-pair.pem <username>@20.168.201.13"
}
spoke2-test-vm = {
  "instance_id" = "/subscriptions/<guid>/resourceGroups/ars-bgp-multipeer-avx-transit-lab/providers/Microsoft.Compute/virtualMachines/spoke2-test-vm"
  "private_ip" = "10.0.110.4"
  "public_ip" = "40.121.159.109"
  "ssh" = "ssh -i az-key-pair.pem <username>@40.121.159.109"
}
```

After connecting to spoke1-test-vm, try ping and curl against spoke2-test-vm.
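To script these checks, run terraform output -json, which prints the same outputs as JSON. Each output name maps to an object whose "value" field holds the data. The helper below is not part of the lab module. It extracts the target VM’s private IP and builds the ping, curl, and mtr commands to run from spoke1-test-vm. The function names are hypothetical, and the curl line assumes something is listening on HTTP port 80 on spoke2-test-vm.

```python
import json
import subprocess


def read_terraform_outputs(workdir: str = ".") -> dict:
    """Run `terraform output -json` in workdir and return {output_name: value}."""
    raw = subprocess.run(
        ["terraform", "output", "-json"],
        cwd=workdir, check=True, capture_output=True, text=True,
    ).stdout
    return parse_outputs(raw)


def parse_outputs(raw: str) -> dict:
    """Keep only the "value" of each output; sensitivity and type info are dropped."""
    return {name: meta["value"] for name, meta in json.loads(raw).items()}


def connectivity_commands(outputs: dict, target_vm: str = "spoke2-test-vm") -> list[str]:
    """Commands to run on spoke1-test-vm against the target VM's private IP."""
    ip = outputs[target_vm]["private_ip"]
    return [f"ping -c 4 {ip}", f"curl -s http://{ip}", f"mtr --report {ip}"]


if __name__ == "__main__":
    # Trimmed sample shaped like `terraform output -json`, using the lab's private IPs.
    sample = json.dumps({
        "spoke1-test-vm": {"sensitive": False, "value": {"private_ip": "10.0.30.4"}},
        "spoke2-test-vm": {"sensitive": False, "value": {"private_ip": "10.0.110.4"}},
    })
    for cmd in connectivity_commands(parse_outputs(sample)):
        print(cmd)
```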

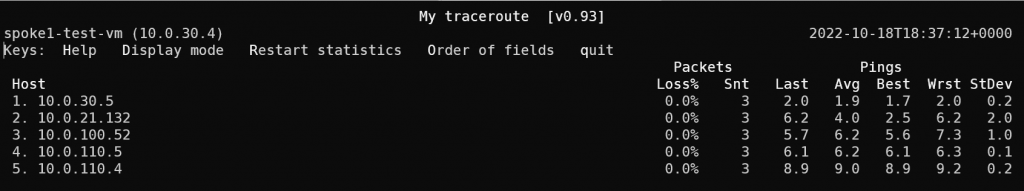

Running mtr (My Traceroute) against spoke2-test-vm:

It shows a very clear path:

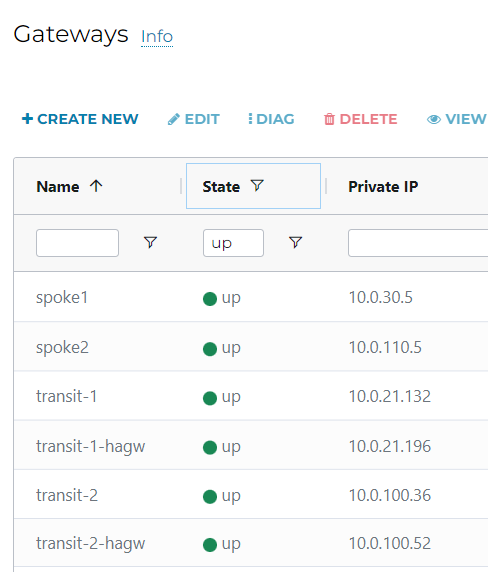

10.0.30.5 is spoke1

10.0.21.132 is transit-1

10.0.100.52 is transit-2-hagw

10.0.110.5 is spoke2

10.0.110.4 is spoke2-test-vm

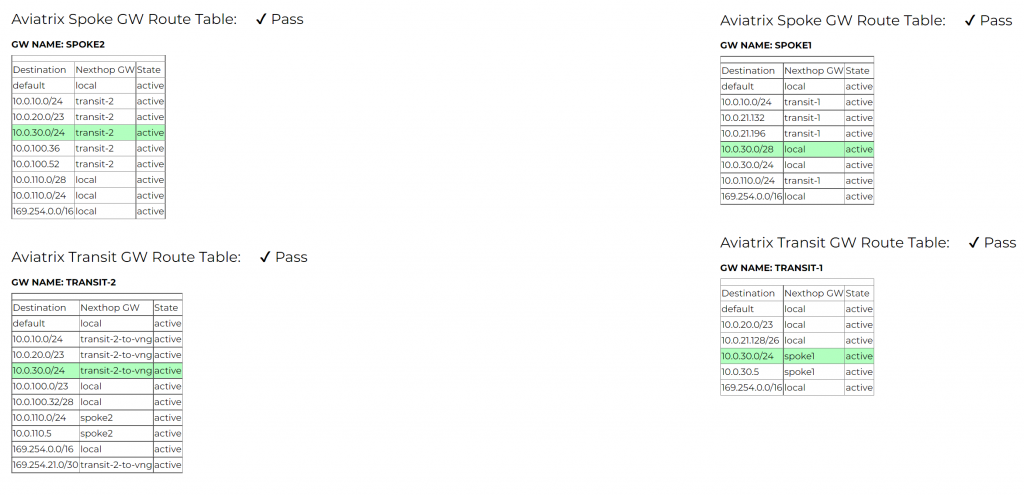

Deeper look at route tables

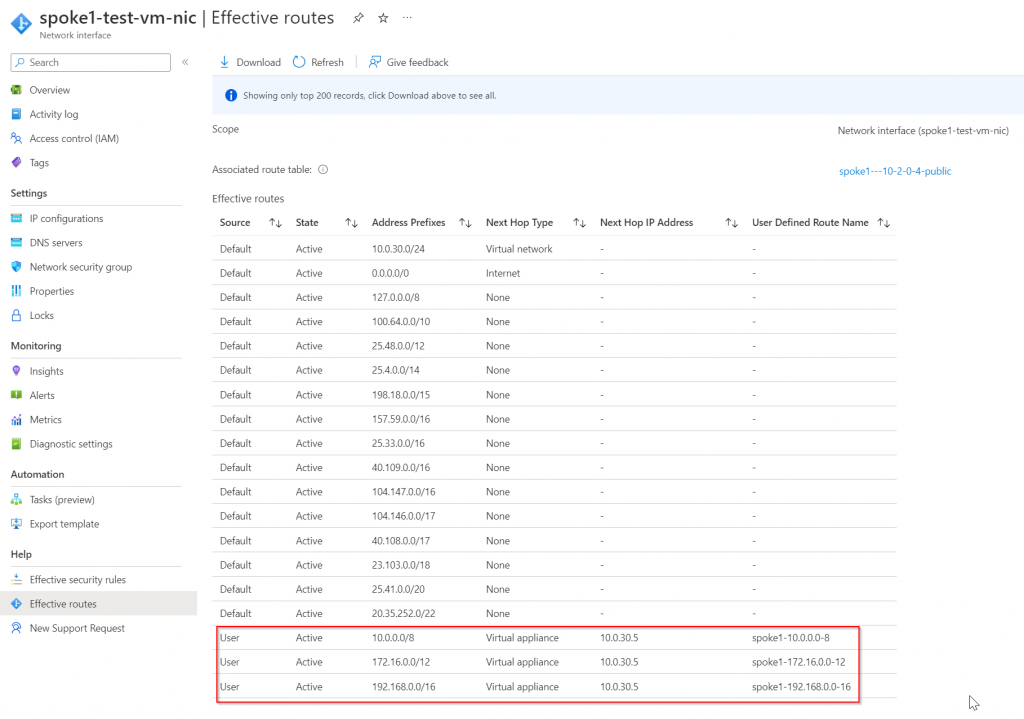

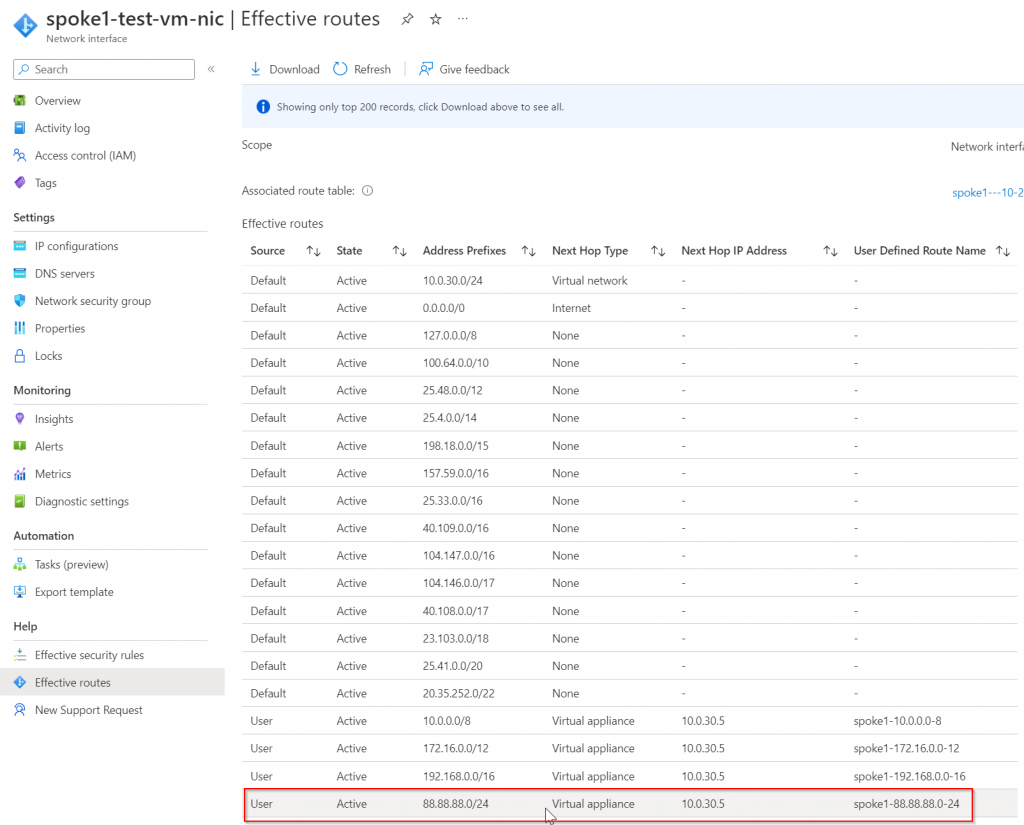

Effective routes of spoke1-test-vm-nic. Note that Aviatrix manages and inserts the RFC1918 routes (10.0.0.0/8, 172.16.0.0/12, and 192.168.0.0/16 by default) to attract traffic towards the Spoke1 gateway.
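These broad RFC1918 routes attract traffic for 10.0.110.4 because Azure selects the effective route by longest-prefix match. A more specific route, such as the vNet’s own range, still wins for local traffic. The following minimal Python sketch of that lookup uses a hypothetical effective route table. The Spoke1 vNet range 10.0.30.0/24 is a guess based on the VM addresses, and 10.0.30.5 is the Spoke1 gateway address from the mtr output above. Azure’s tie-breaking between route sources for identical prefixes is not modeled.

```python
import ipaddress

# Hypothetical effective routes for spoke1-test-vm-nic: (prefix, next hop type, next hop IP).
ROUTES = [
    ("10.0.30.0/24", "VnetLocal", None),  # assumed Spoke1 vNet range
    ("10.0.0.0/8", "VirtualAppliance", "10.0.30.5"),  # RFC1918 routes -> Spoke1 gateway
    ("172.16.0.0/12", "VirtualAppliance", "10.0.30.5"),
    ("192.168.0.0/16", "VirtualAppliance", "10.0.30.5"),
    ("0.0.0.0/0", "Internet", None),
]


def lookup(routes, destination: str):
    """Longest-prefix match: the most specific prefix containing the destination wins."""
    addr = ipaddress.ip_address(destination)
    matches = [r for r in routes if addr in ipaddress.ip_network(r[0])]
    if not matches:
        return None
    return max(matches, key=lambda r: ipaddress.ip_network(r[0]).prefixlen)


if __name__ == "__main__":
    for dst in ("10.0.110.4", "10.0.30.9", "88.88.88.1"):
        prefix, hop_type, hop_ip = lookup(ROUTES, dst)
        print(f"{dst:<12} via {prefix:<14} {hop_type} {hop_ip or ''}")
```

In this table, a public prefix such as 88.88.88.0/24 falls through to 0.0.0.0/0. It needs its own, more specific entry to reach the Spoke1 gateway.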

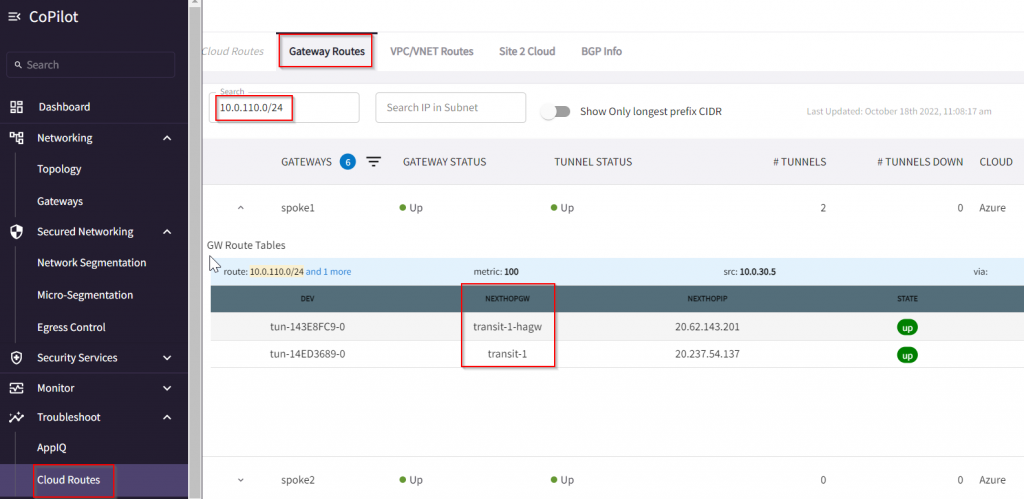

Once the traffic reaches the Spoke1 gateway, we can search Spoke1’s route table for 10.0.110.0/24. Its next hops are transit-1 and transit-1-hagw, via the IPSec tunnels between spoke1 and transit-1/transit-1-hagw.

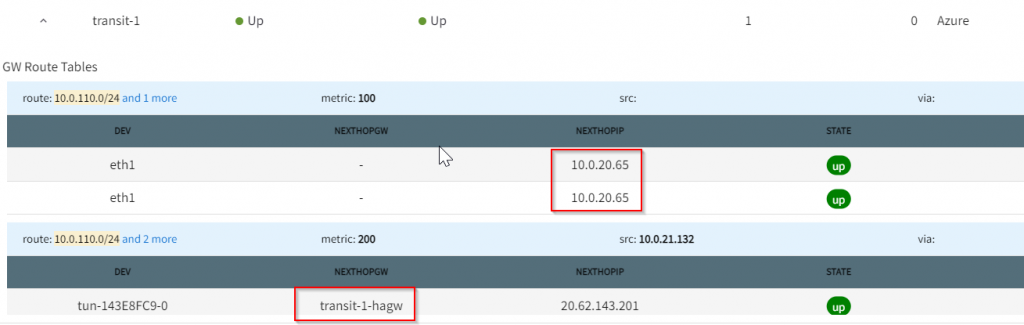

In the Transit-1 gateway routes, you can see that 10.0.110.0/24 has next hops of 10.0.20.65 and transit-1-hagw. The latter is the high-availability link between the Aviatrix primary and HA gateways. I will explain this in a future blog post about ActiveMesh and High Performance Encryption.

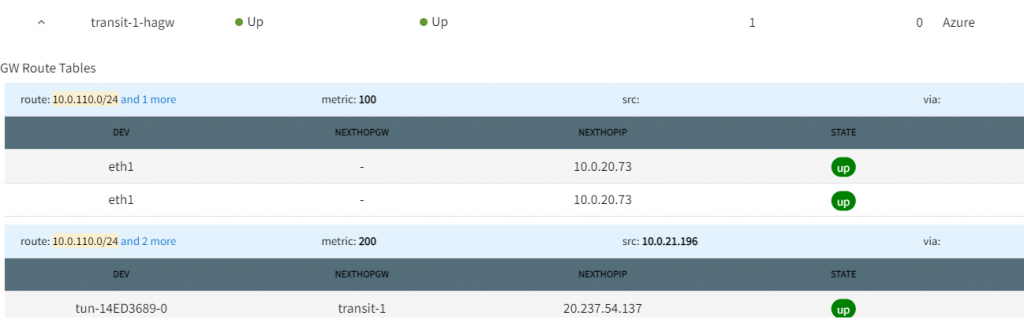

In the Transit-1-hagw gateway routes, you can see that 10.0.110.0/24 has next hops of 10.0.20.73 and transit-1. The latter is the high-availability link between the Aviatrix primary and HA gateways.

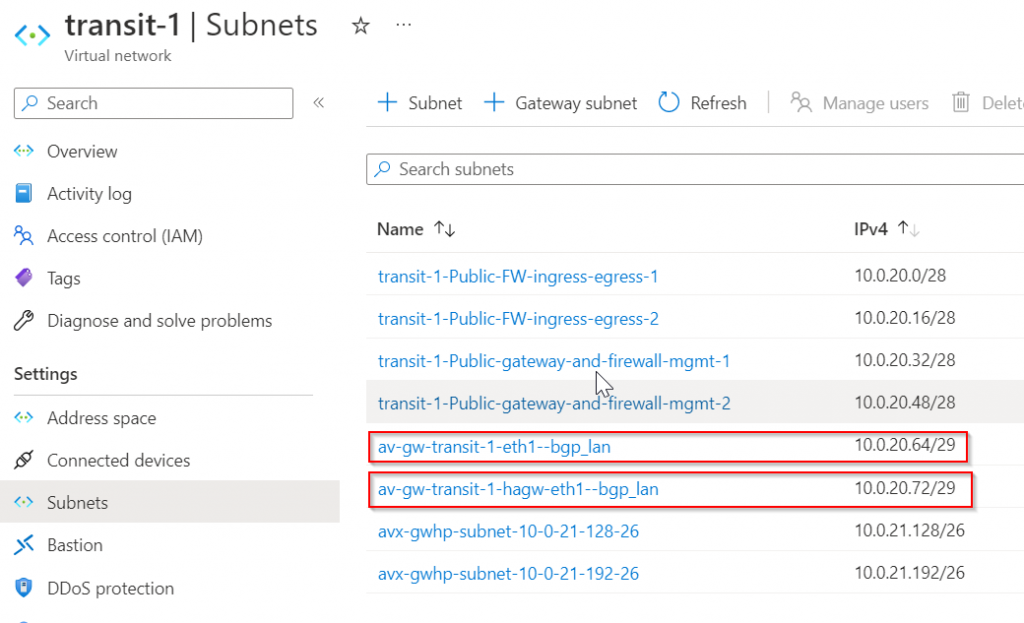

In the picture below, you can see two subnets:

- av-gw-transit-1-eth1-bgp_lan 10.0.20.64/29

- this subnet’s default gateway is 10.0.20.65

- transit-1’s BGP over LAN interface eth1 is connected to this subnet.

- av-gw-transit-1-hagw-eth1-bgp_lan 10.0.20.72/29

- this subnet’s default gateway is 10.0.20.73

- transit-1-hagw’s BGP over LAN interface eth1 is connected to this subnet.
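The .65 and .73 addresses follow from how Azure carves up every subnet. Azure reserves five addresses in each subnet:

- the network address
- the default gateway (network address + 1)
- two addresses mapped to Azure DNS (+2 and +3)
- the broadcast address

The following Python sketch uses only the standard ipaddress module. It reproduces the default gateways of the two /29 BGP over LAN subnets and shows that each subnet leaves only three usable addresses. The helper name azure_subnet_info is hypothetical.

```python
import ipaddress


def azure_subnet_info(cidr: str) -> dict:
    """Azure reserves the first four and the last address of every subnet:
    network, default gateway (+1), two DNS-mapped addresses (+2, +3), broadcast."""
    net = ipaddress.ip_network(cidr)
    return {
        "default_gateway": str(net.network_address + 1),
        "first_usable": str(net.network_address + 4),
        "last_usable": str(net.broadcast_address - 1),
        "usable_count": net.num_addresses - 5,
    }


if __name__ == "__main__":
    for cidr in ("10.0.20.64/29", "10.0.20.72/29"):
        print(cidr, azure_subnet_info(cidr))
```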

Look at the route tables of these two BGP over LAN subnets. Both are managed by Aviatrix. Aviatrix enables Propagate gateway routes *only* on the route tables connected to the BGP over LAN subnets.

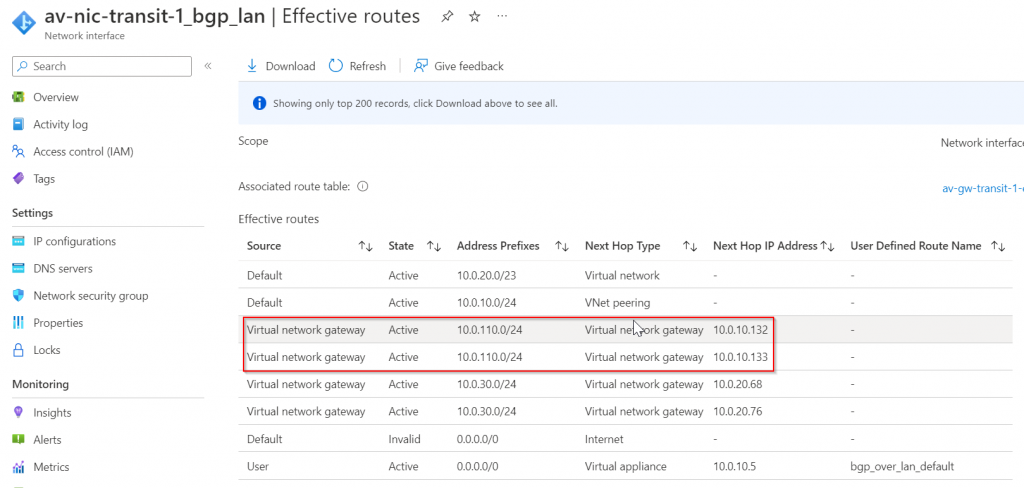

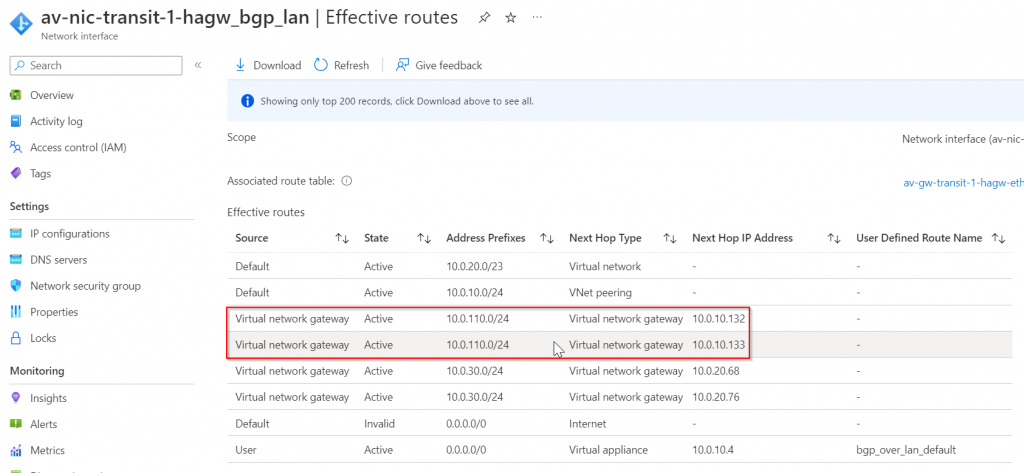

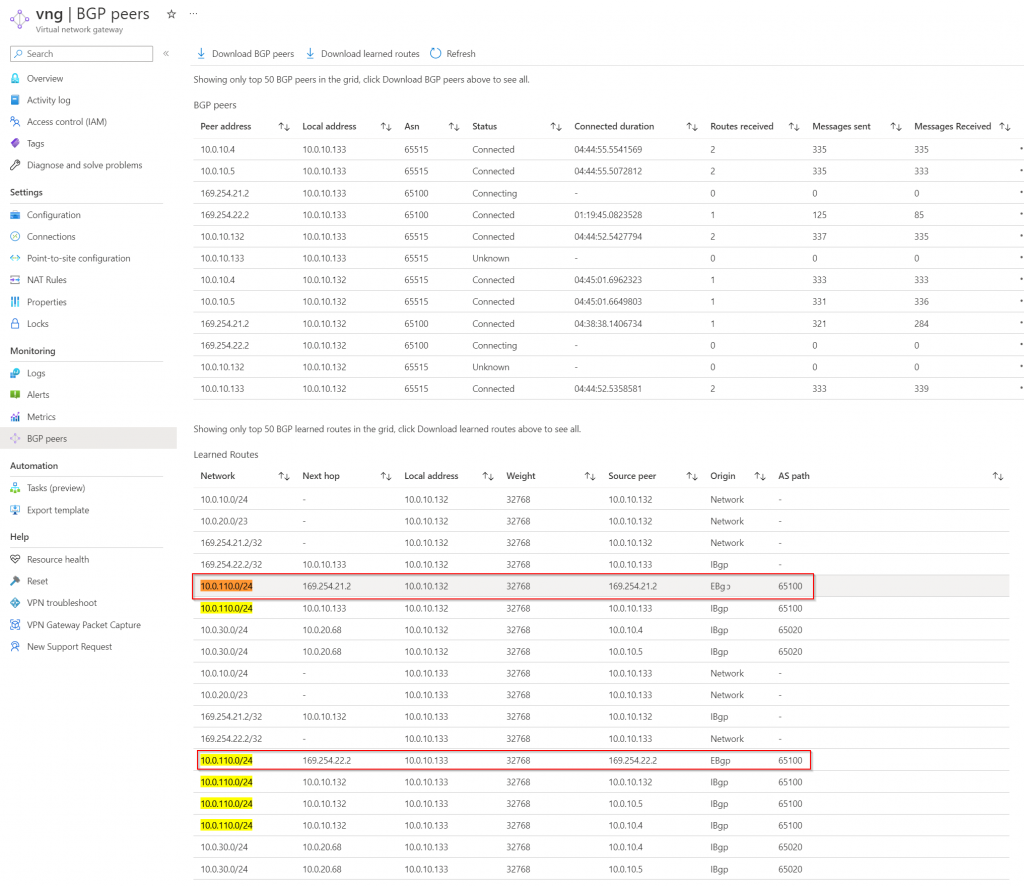

The route tables have two entries where 10.0.110.0/24 points to 10.0.10.132 and 10.0.10.133.

10.0.10.132 and 10.0.10.133 belong to Azure VPN Gateway.

In VNG, we can see that 10.0.110.0/24, learned via eBGP, points to the tunnel interfaces.

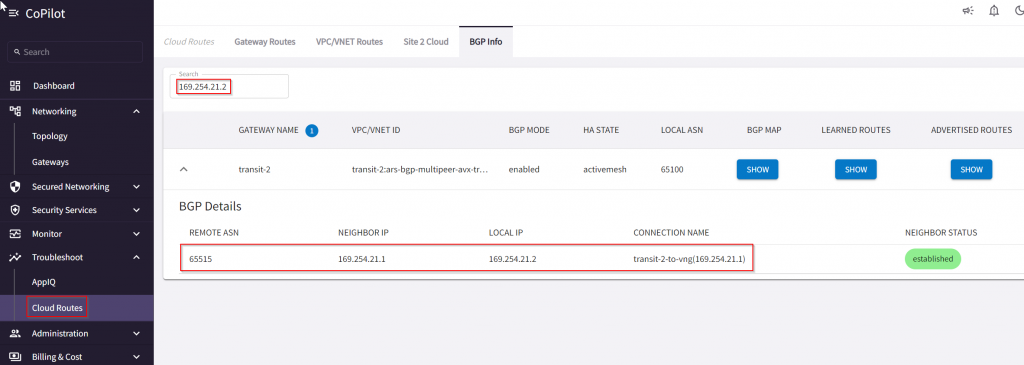

Finding out which peer 169.254.21.2 and 169.254.22.2 belong to requires a bit of drilling down on the Azure side: VNG -> Connections -> Local Network Gateway.
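The 169.254.x.x peers are APIPA BGP addresses. According to Microsoft’s VPN Gateway BGP documentation at the time of writing, Azure VPN Gateway only accepts APIPA BGP addresses from 169.254.21.0 to 169.254.22.255. A quick sanity check on the values typed into the Local Network Gateway or the Aviatrix side can therefore catch typos early. The following minimal sketch uses the standard ipaddress module. The function name is hypothetical.

```python
import ipaddress

# Azure VPN Gateway's documented APIPA range for BGP peer addresses.
APIPA_LOW = ipaddress.ip_address("169.254.21.0")
APIPA_HIGH = ipaddress.ip_address("169.254.22.255")


def valid_apipa_bgp_ip(text: str) -> bool:
    """True if text is an IPv4 address inside Azure's APIPA BGP range."""
    try:
        addr = ipaddress.ip_address(text)
    except ValueError:
        return False  # not an IP address at all, e.g. an octet above 255
    # Check the type first: comparing an IPv6Address to an IPv4Address raises TypeError.
    return isinstance(addr, ipaddress.IPv4Address) and APIPA_LOW <= addr <= APIPA_HIGH


if __name__ == "__main__":
    for candidate in ("169.254.21.2", "169.254.22.2", "169.254.20.1", "169.254.256.1"):
        print(candidate, valid_apipa_bgp_ip(candidate))
```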

It’s easy in Aviatrix CoPilot:

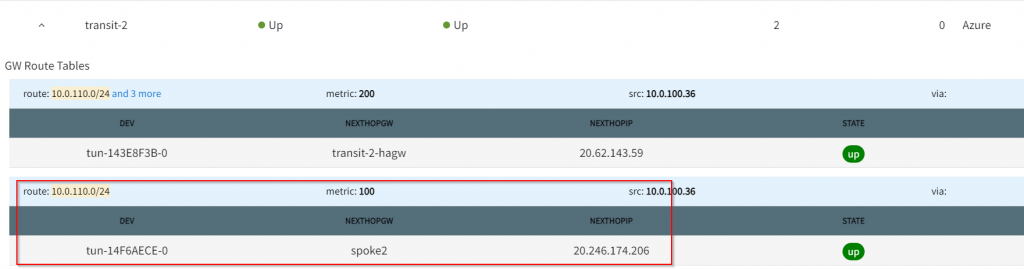

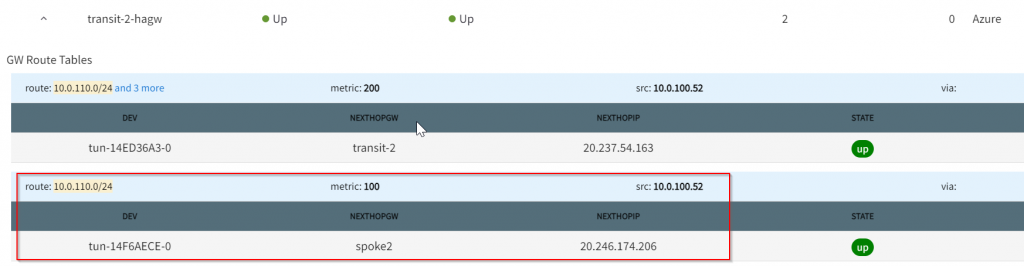

Now that we know the next hop is Transit2, look at its gateway route table: 10.0.110.0/24 points to spoke2.

Smarter troubleshooting?

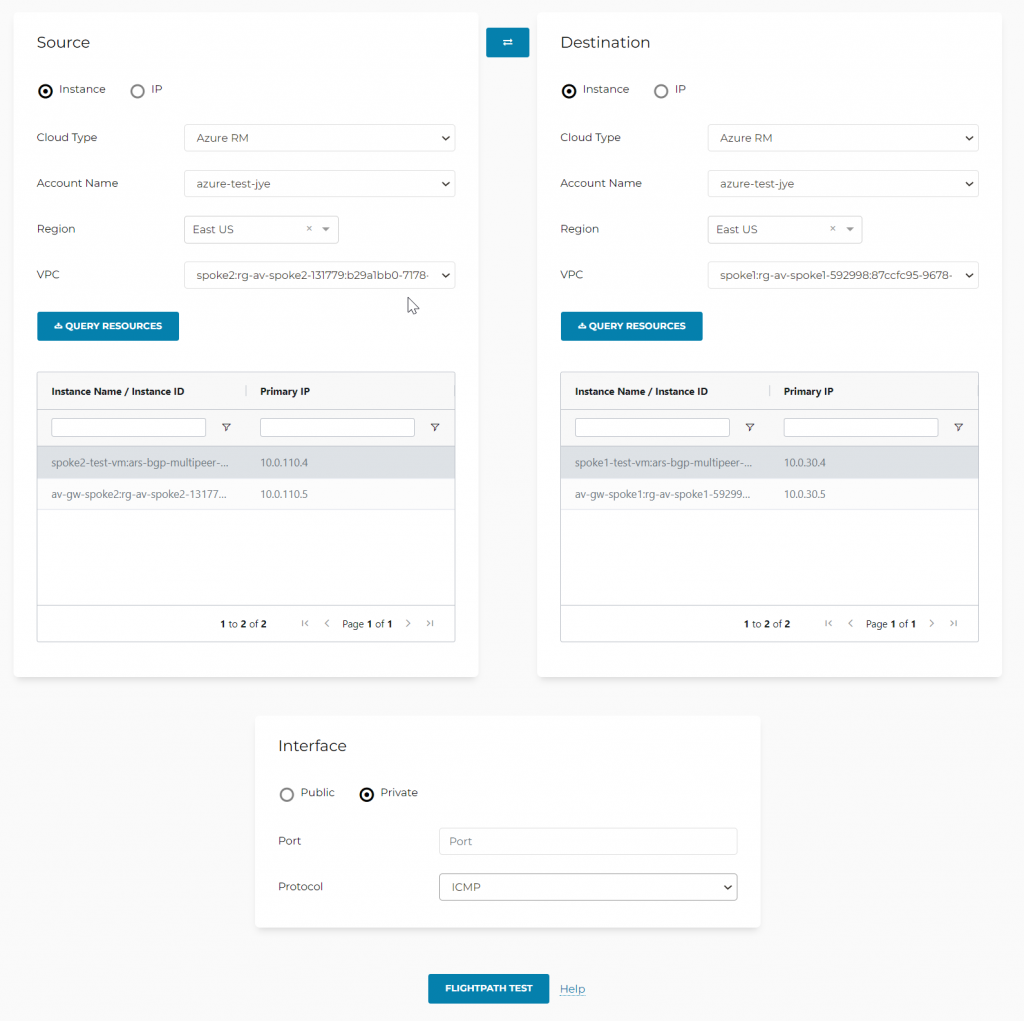

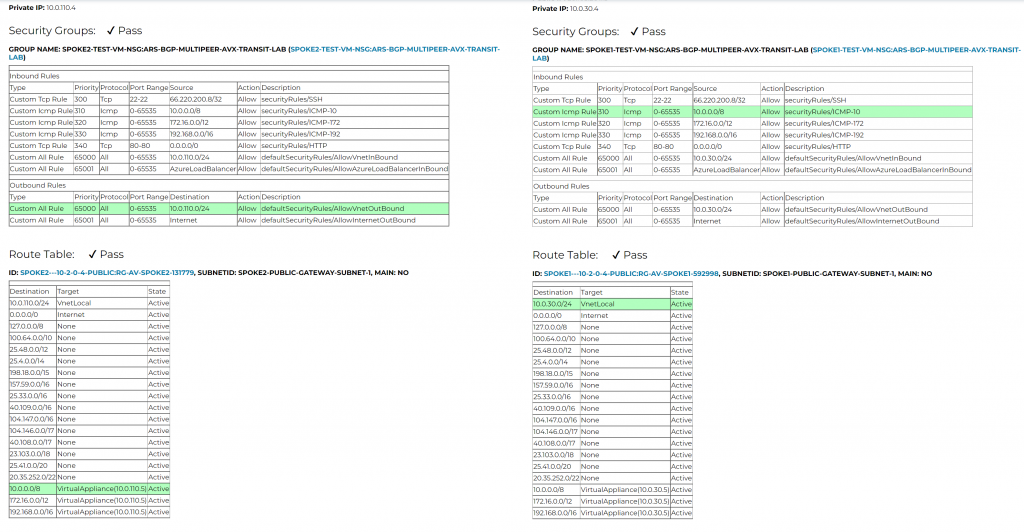

Let’s check the reverse path. This time we try out Aviatrix Flight Path.

Oh yeah, route tables aren’t the only source of networking issues! Have we checked the Network Security Groups as well? It’s nice that Aviatrix checks all of that and highlights the matching rules for you.

What happens if the on-premises route table changes?

Let’s change the subnet prefixes advertised from on-prem and see what happens. We can create another spoke, spoke3, with its own vNet CIDR and attach it to Transit2. Transit2 then forwards spoke3’s CIDR to VNG, which sends it to Azure Route Server and then on to Transit1.

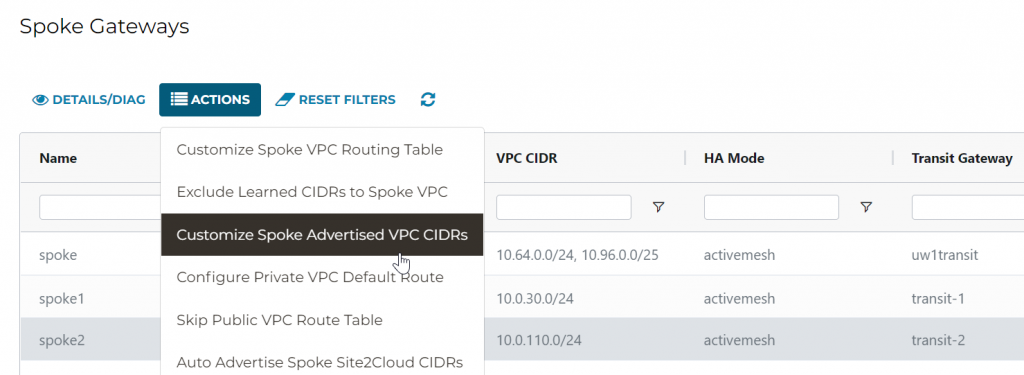

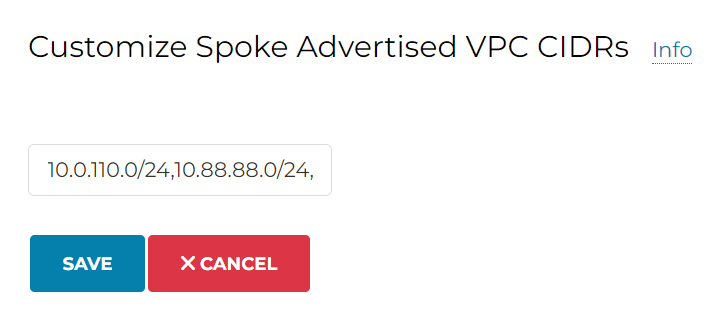

Aviatrix Spoke has a feature, Customize Spoke Advertised VPC CIDRs, that lets us advertise customized CIDRs into Aviatrix Transit.

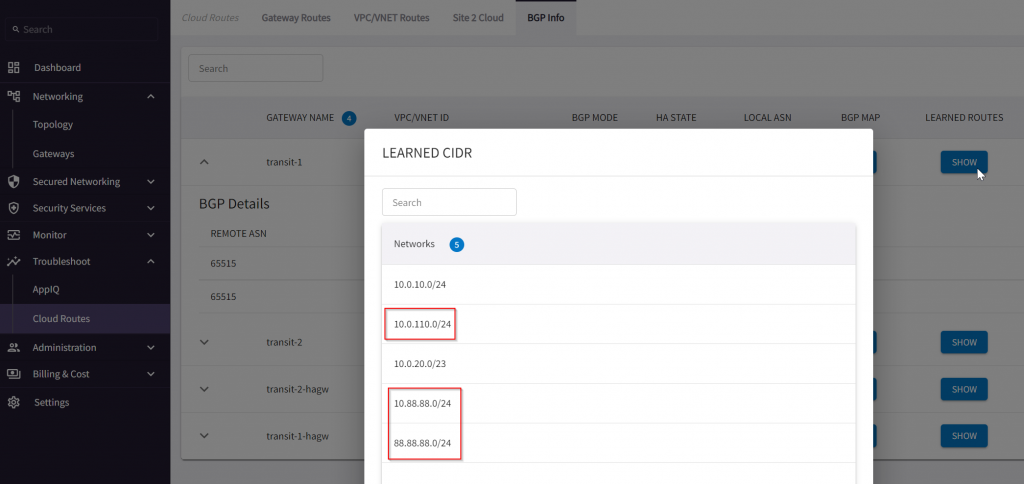

Enter 10.0.110.0/24,10.88.88.0/24,88.88.88.0/24. Note that the last one isn’t part of the RFC1918 ranges.
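The non-RFC1918 prefix matters because the default RFC1918 routes that Aviatrix programs into the spoke route tables already cover the first two prefixes. The 88.88.88.0/24 prefix, however, presumably needs an entry of its own. The following minimal Python sketch splits the comma-separated list entered above and flags the prefixes that fall outside the RFC1918 ranges. The helper names are hypothetical and are not an Aviatrix API.

```python
import ipaddress

# The default RFC1918 ranges: 10.0.0.0/8, 172.16.0.0/12, 192.168.0.0/16.
RFC1918 = [ipaddress.ip_network(c) for c in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]


def parse_advertised(text: str) -> list:
    """Split a comma-separated CIDR list into network objects, skipping empty items."""
    return [ipaddress.ip_network(c.strip()) for c in text.split(",") if c.strip()]


def outside_rfc1918(cidrs) -> list[str]:
    """Prefixes not covered by any default RFC1918 route; these need their own entries."""
    return [str(c) for c in cidrs if not any(c.subnet_of(r) for r in RFC1918)]


if __name__ == "__main__":
    advertised = parse_advertised("10.0.110.0/24,10.88.88.0/24,88.88.88.0/24")
    print(outside_rfc1918(advertised))  # ['88.88.88.0/24']
```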

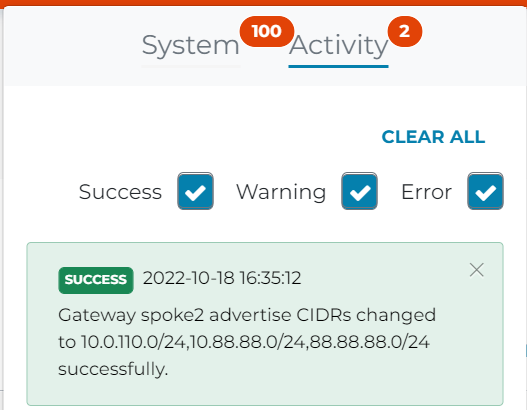

The activity log shows that the advertised CIDRs were changed successfully.

Let’s check the CIDRs learned by Transit1.

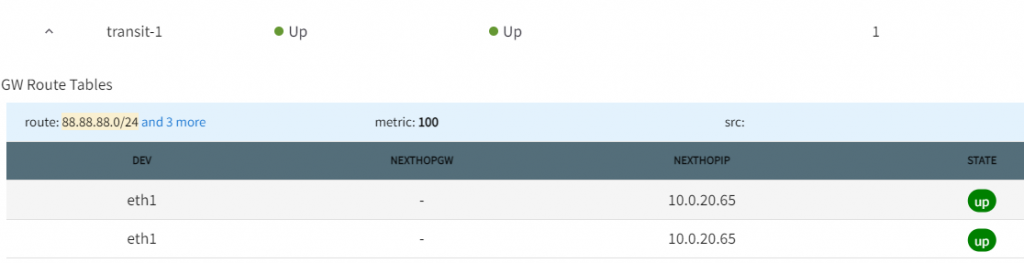

Searching the Transit1 gateway routes for 88.88.88.0/24 shows that it points to 10.0.20.65. If you recall, this is the default gateway of the subnet attached to the Aviatrix Transit1 BGP over LAN interface.

Searching the Spoke1 gateway routes for 88.88.88.0/24 shows that it points to transit-1 and transit-1-hagw.

In the effective routes of spoke1-test-vm-nic, we can see that Aviatrix automatically programs 88.88.88.0/24 to point to the Spoke1 gateway.

Sweet 🙂

Afterthoughts

- Having a system that automates routing intelligently is a necessity in enterprise networking.

- Don’t build critical workloads running in the cloud without insight. It’s like running blindfolded with a knife.

- Azure Route Server is a step forward in enabling enterprises to use dynamic routing, but there are some constraints:

- Number of routes each BGP peer can advertise to Azure Route Server: 1000

- Number of routes that Azure Route Server can advertise to ExpressRoute or VPN gateway: 200

- Number of BGP peers supported: 8 Reference Link

- Only one Azure Route Server can be deployed per vNet. Reference Link

- When you create or delete an Azure Route Server in a virtual network that contains a Virtual Network Gateway (ExpressRoute or VPN), expect downtime until the operation completes. Reference Link

- Cost: USD 0.45/hour, or about USD 324 per month (720 hours). For a service that’s not in the data path, it’s not cheap.

- Stay tuned for my future blog posts, which will cover how to connect into Aviatrix Transit using ExpressRoute.
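The limits and the price listed above can serve as a simple pre-deployment sanity check. This Python sketch hard-codes the numbers exactly as they appear in this post. Azure revises them over time, so check the current documentation. The per-peer route counts in the example are hypothetical. The sketch also makes the cost arithmetic explicit: USD 0.45 per hour × 720 hours = USD 324.

```python
# Azure Route Server numbers exactly as listed in this post; Azure revises them over time.
LIMITS = {
    "routes_per_peer_to_ars": 1000,
    "routes_ars_to_gateway": 200,
    "bgp_peers": 8,
}
HOURLY_USD = 0.45


def monthly_cost(hourly: float = HOURLY_USD, hours: int = 720) -> float:
    """Monthly cost at a flat hourly rate; 720 hours matches the USD 324 figure."""
    return round(hourly * hours, 2)


def check_design(peer_route_counts: dict[str, int], routes_to_gateway: int) -> list[str]:
    """Compare a planned design against the limits; an empty list means no violations."""
    issues = []
    if len(peer_route_counts) > LIMITS["bgp_peers"]:
        issues.append(f"{len(peer_route_counts)} BGP peers exceeds the limit of {LIMITS['bgp_peers']}")
    for peer, count in peer_route_counts.items():
        if count > LIMITS["routes_per_peer_to_ars"]:
            issues.append(f"{peer} advertises {count} routes; limit is {LIMITS['routes_per_peer_to_ars']}")
    if routes_to_gateway > LIMITS["routes_ars_to_gateway"]:
        issues.append(f"ARS would advertise {routes_to_gateway} routes to the gateway; "
                      f"limit is {LIMITS['routes_ars_to_gateway']}")
    return issues


if __name__ == "__main__":
    print(monthly_cost())  # 324.0
    # Hypothetical plan: transit-1 and transit-1-hagw each advertise 250 routes.
    print(check_design({"transit-1": 250, "transit-1-hagw": 250}, routes_to_gateway=250))
```

In the hypothetical plan, each Aviatrix gateway stays well under the per-peer limit. The gateway-facing limit of 200 is the one that trips first.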