In my last blog post, Learning GCP Interconnect: Step-by-Step Guide for Configuring BGP with ISR and Cloud Router, I showed how to create an Interconnect VLAN attachment in an existing VPC, and how to configure Cloud Router and VPC peering to establish connectivity from on-prem to a GCP spoke VPC. You may have noticed a few feature differences among AWS Direct Connect, Azure ExpressRoute and GCP Interconnect, which lead to different architectures.

In this blog post, I will show you how to connect Aviatrix Edge 2.0 to Aviatrix Transit in GCP, using Interconnect as the underlay.

Interconnect as underlay

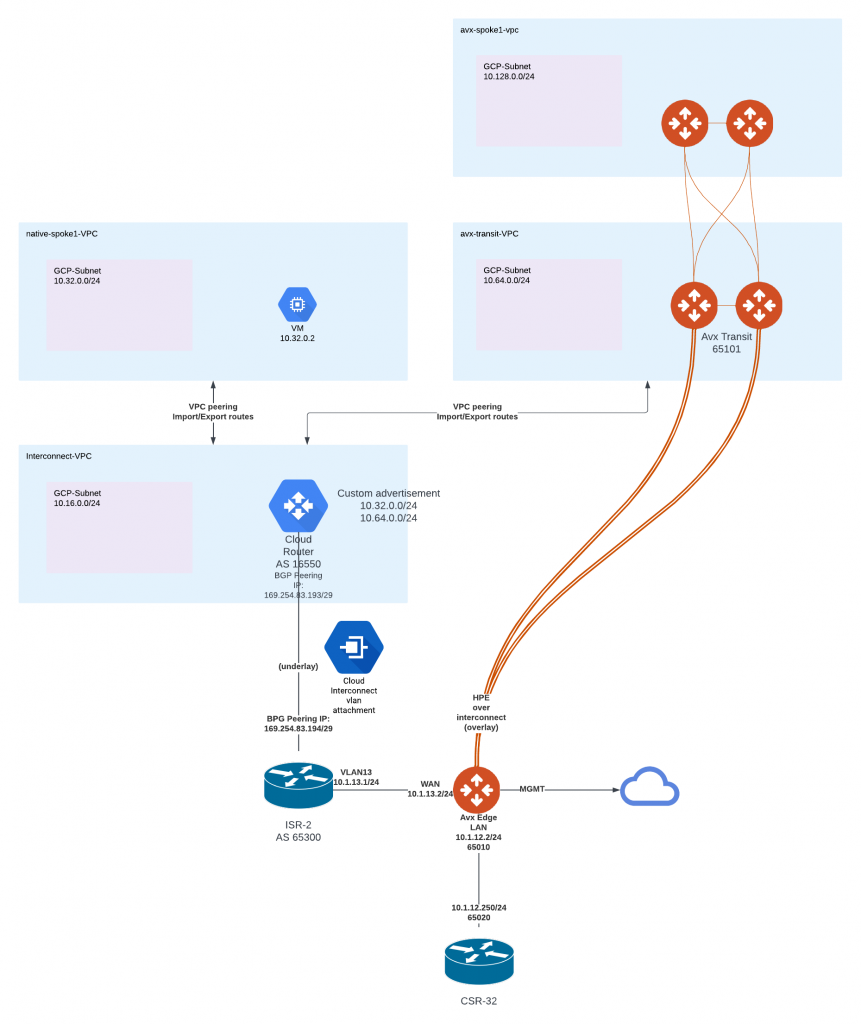

- In the diagram above, the Interconnect VLAN attachment and the Cloud Router are associated with Interconnect-VPC.

- In this architecture, Interconnect-VPC doesn't require a subnet. The 10.16.0.0/24 subnet was only used to validate the Cloud Router advertisement towards on-prem, and it can be deleted.

- Cloud Router forms a BGP peering with ISR-2, learns 10.1.13.0/24, and then programs this dynamic route into Interconnect-VPC.

- native-spoke1-VPC is peered with Interconnect-VPC, and Interconnect-VPC exports the dynamic route 10.1.13.0/24 to native-spoke1-VPC.

- Interconnect-VPC can import the native-spoke1-VPC CIDR 10.32.0.0/24, but Cloud Router won't advertise 10.32.0.0/24 to on-prem ISR-2. To advertise 10.32.0.0/24 to ISR-2, you must use the custom advertisement option in Cloud Router and add either 10.32.0.0/24 or a supernet of it.

- When Aviatrix Transit is launched in avx-transit-VPC, we can perform exactly the same steps:

  - Export the dynamic route from Interconnect-VPC to avx-transit-VPC

  - Custom-advertise the avx-transit-VPC CIDR 10.64.0.0/24 on Cloud Router towards on-prem ISR-2

- This builds private underlay connectivity between avx-transit-VPC 10.64.0.0/24 and ISR-2 10.1.13.0/24, which lets the Aviatrix Edge WAN interface reach the Aviatrix Transit Gateways.
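If the Cloud Router is managed with the Terraform google provider, the custom advertisement described above could look roughly like the sketch below. The router name `cr-interconnect` and the ASN `65030` are hypothetical placeholders, not values from this lab; the project `jye-01`, the network `vpc-interconnect` and the CIDRs come from this post. For a router that already exists, you would import it into state first rather than let Terraform create a new one.

```hcl
# Sketch: custom advertisement on the Interconnect Cloud Router.
# Router name and ASN are hypothetical; use your existing router's values.
resource "google_compute_router" "interconnect" {
  name    = "cr-interconnect" # hypothetical
  project = "jye-01"
  region  = "us-central1"
  network = "vpc-interconnect"

  bgp {
    asn            = 65030 # hypothetical; must match the remote-as configured on ISR-2
    advertise_mode = "CUSTOM"

    # Custom mode replaces the default advertisement, so keep advertising
    # the Interconnect-VPC's own subnets explicitly.
    advertised_groups = ["ALL_SUBNETS"]

    # CIDRs of peered VPCs are not advertised automatically; list them here
    # (a single supernet covering both would also work).
    advertised_ip_ranges {
      range       = "10.32.0.0/24"
      description = "native-spoke1-VPC"
    }

    advertised_ip_ranges {
      range       = "10.64.0.0/24"
      description = "avx-transit-VPC"
    }
  }
}
```

Advertising the ranges at the router level applies them to every BGP peer on that router; per-peer advertisement is the alternative if only some sessions should see them.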

Aviatrix overlay routing

- Aviatrix Edge can then build multiple High Performance Encryption (HPE) tunnels to the Aviatrix Transit Gateways and achieve up to 10 Gbps of combined throughput.

- From on-premises towards avx-spoke1-vpc, traffic first reaches the LAN-side router CSR-32 and then the Aviatrix Edge Gateway LAN interface. The Edge Gateway forwards it through encrypted tunnels to the Aviatrix Transit Gateways, which in turn forward it through encrypted tunnels to the Aviatrix Spoke Gateways in avx-spoke1-vpc; from there the GCP fabric delivers it to the destination.

- From avx-spoke1-vpc towards on-premises, traffic first enters the GCP fabric, where Aviatrix programs RFC1918 routes pointing to the Aviatrix Spoke Gateways. The Spoke Gateway forwards the traffic through encrypted tunnels to the Aviatrix Transit Gateways, which forward it again through encrypted tunnels to Aviatrix Edge, and from there it reaches the LAN-side router.

- This forms a dedicated, encrypted data path from GCP to your on-premises network, which protects traffic crossing the Interconnect underlay against man-in-the-middle attacks.

- The dedicated encrypted data path is owned by the customer, which gives the customer full visibility, troubleshooting capability, enterprise-grade intelligent routing, and advanced security features such as IDP and Distributed Firewalling, with deep packet inspection planned for future releases.

Sample Terraform code for Aviatrix Transit, Spoke, VPC peering and Edge Gateway

Here is sample Terraform code that does the following:

- Create Aviatrix Transit VPC and Transit Gateways

- Create Aviatrix Spoke VPC and Spoke Gateways, and attach to Aviatrix Transit

- Create VPC peering between Aviatrix Transit VPC and Interconnect VPC

- Create the Edge Gateway, form a BGP peering with the LAN-side router, and attach it to Aviatrix Transit

- The following code doesn't manage Cloud Router; you need to make sure Cloud Router advertises the Aviatrix Transit VPC CIDR towards the on-prem WAN-side router
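The sample uses both the Aviatrix and Google providers but does not show their configuration. A minimal sketch of that setup might look like the following; the three `controller_*` variable names are hypothetical, and the project `jye-01` is taken from the Interconnect VPC self-link in the sample. Aviatrix provider releases are tied to Controller versions, so in practice you would pin the provider version that matches your Controller.

```hcl
# Sketch: provider setup assumed by the sample code.
# Variable names are hypothetical; supply values via tfvars or environment variables.
terraform {
  required_providers {
    aviatrix = {
      source = "AviatrixSystems/aviatrix"
    }
    google = {
      source = "hashicorp/google"
    }
  }
}

variable "controller_ip" {}

variable "controller_username" {}

variable "controller_password" {
  sensitive = true # keep the Controller password out of plan output
}

provider "aviatrix" {
  controller_ip = var.controller_ip
  username      = var.controller_username
  password      = var.controller_password
}

# Used by the google_compute_network_peering resources
provider "google" {
  project = "jye-01"
  region  = var.region
}
```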

```hcl
variable "region" {
  default = "us-central1"
}

variable "account" {
  default = "gcp-lab-jye"
}

variable "transit_gateway_name" {
  default = "avx-mtt-hpe-transit-gw"
}

variable "transit_vpc_name" {
  default = "avx-transit-firenet"
}

variable "transit_vpc_cidr" {
  default = "10.64.0.0/24"
}

variable "transit_firenet_lan_cidr" {
  default = "10.64.1.0/24"
}

variable "transit_gateway_asn" {
  default = 65101
}

variable "spoke_gateway_name" {
  default = "avx-spoke1-gw"
}

variable "spoke_vpc_name" {
  default = "avx-spoke1"
}

variable "spoke_vpc_cidr" {
  default = "10.128.0.0/24"
}

variable "interconnect_vpc_selflink" {
  default = "https://www.googleapis.com/compute/v1/projects/jye-01/global/networks/vpc-interconnect"
}

variable "interconnect_vpc_name" {
  default = "vpc-interconnect"
}

# Create Transit VPC and Transit Gateway
module "mc-transit" {
  source  = "terraform-aviatrix-modules/mc-transit/aviatrix"
  version = "2.3.3"

  cloud                     = "GCP"
  region                    = var.region
  cidr                      = var.transit_vpc_cidr
  account                   = var.account
  enable_bgp_over_lan       = false
  enable_transit_firenet    = true
  name                      = var.transit_vpc_name
  gw_name                   = var.transit_gateway_name
  lan_cidr                  = var.transit_firenet_lan_cidr
  insane_mode               = true # High Performance Encryption
  instance_size             = "n1-highcpu-4"
  local_as_number           = var.transit_gateway_asn
  enable_multi_tier_transit = true
}

# Create Aviatrix Spoke VPC and Spoke Gateway, and attach it to the transit
module "mc-spoke" {
  source  = "terraform-aviatrix-modules/mc-spoke/aviatrix"
  version = "1.4.2"

  cloud      = "GCP"
  region     = var.region
  cidr       = var.spoke_vpc_cidr
  account    = var.account
  name       = var.spoke_vpc_name
  gw_name    = var.spoke_gateway_name
  transit_gw = module.mc-transit.transit_gateway.gw_name
}

locals {
  # Rebuild the transit VPC self-link from the Aviatrix vpc_id; the split
  # indices assume the form "<vpc name>~-~<project id>".
  transit_vpc_parts    = split("~-~", module.mc-transit.vpc.vpc_id)
  transit_vpc_selflink = "https://www.googleapis.com/compute/v1/projects/${local.transit_vpc_parts[1]}/global/networks/${local.transit_vpc_parts[0]}"
}

# Create VPC peering from the Avx MTT HPE Transit FireNet VPC to the Interconnect VPC.
# GCP peering is configured on both sides, exchanging custom (dynamic) routes both ways.
resource "google_compute_network_peering" "mtt_transit_firenet_to_interconnect" {
  name                 = "${module.mc-transit.vpc.name}-to-${var.interconnect_vpc_name}"
  network              = local.transit_vpc_selflink
  peer_network         = var.interconnect_vpc_selflink
  export_custom_routes = true
  import_custom_routes = true
}

resource "google_compute_network_peering" "interconnect_to_mtt_transit_firenet" {
  name                 = "${var.interconnect_vpc_name}-to-${module.mc-transit.vpc.name}"
  network              = var.interconnect_vpc_selflink
  peer_network         = local.transit_vpc_selflink
  export_custom_routes = true
  import_custom_routes = true
}

# WARNING: this code does not manage the Cloud Router.
# MAKE SURE THE CLOUD ROUTER ADVERTISES THE ADDITIONAL TRANSIT VPC CIDR TOWARDS ON-PREM.
module "interconnect_edge" {
  source  = "terraform-aviatrix-modules/mc-edge/aviatrix"
  version = "v1.1.2"

  site_id = "interconnect"

  edge_gws = {
    gw1 = {
      # ZTP configuration
      ztp_file_download_path = "/mnt/c/gitrepos/terraform-aviatrix-gcp-spoke-and-transit"
      ztp_file_type          = "iso"
      gw_name                = "interconnectgw1"

      # Management interface
      management_interface_config = "DHCP"
      # management_interface_ip_prefix = "172.16.1.10/24"
      # management_default_gateway_ip  = "172.16.1.1"

      # DNS
      # dns_server_ip           = "8.8.8.8"
      # secondary_dns_server_ip = "8.8.4.4"

      # WAN interface
      wan_interface_ip_prefix = "10.1.13.2/24"
      wan_default_gateway_ip  = "10.1.13.1"
      wan_public_ip           = "149.97.222.34" # Required for peering over internet

      # Management over private network or internet
      enable_management_over_private_network = false
      management_egress_ip_prefix            = "149.97.222.34/32"

      # LAN interface configuration
      lan_interface_ip_prefix = "10.1.12.2/24"
      local_as_number         = 65010
      # prepend_as_path                  = [65010, 65010, 65010]
      # spoke_bgp_manual_advertise_cidrs = ["192.168.1.0/24"]

      # Only enable this once the Edge Gateway status shows up, after loading the ZTP ISO/CloudInit
      bgp_peers = {
        peer1 = {
          connection_name   = "interconnect-peer"
          remote_lan_ip     = "10.1.12.250"
          bgp_remote_as_num = 65020
        }
      }

      # Attach to transit GWs.
      # Change attached to true once the Edge Gateway status shows up, after loading the ZTP ISO/CloudInit
      transit_gws = {
        transit1 = {
          name                        = module.mc-transit.transit_gateway.gw_name
          attached                    = false
          enable_jumbo_frame          = false
          enable_insane_mode          = true
          enable_over_private_network = true
          # spoke_prepend_as_path   = [65010, 65010, 65010]
          # transit_prepend_as_path = [65001, 65001, 65001]
        }
      }
    }
  }
}
```
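The Edge attachment is a two-phase process: the first apply generates the ZTP ISO, and `attached` is flipped to `true` only after the Edge Gateway shows up. One possible way to avoid editing the module block by hand is to drive that flag from a variable; the `edge_attached` variable below is a hypothetical addition, not part of the original sample.

```hcl
# Hypothetical variable that drives the second phase of the Edge attachment.
variable "edge_attached" {
  description = "Set to true once the Edge Gateway status shows up after loading the ZTP ISO/CloudInit"
  type        = bool
  default     = false
}

# In module "interconnect_edge", under edge_gws.gw1.transit_gws.transit1, replace
#   attached = false
# with
#   attached = var.edge_attached
```

With that change, the first `terraform apply` runs with the default `false`; after booting the Edge appliance from the generated ISO and seeing it in the Controller, `terraform apply -var="edge_attached=true"` performs the attachment.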

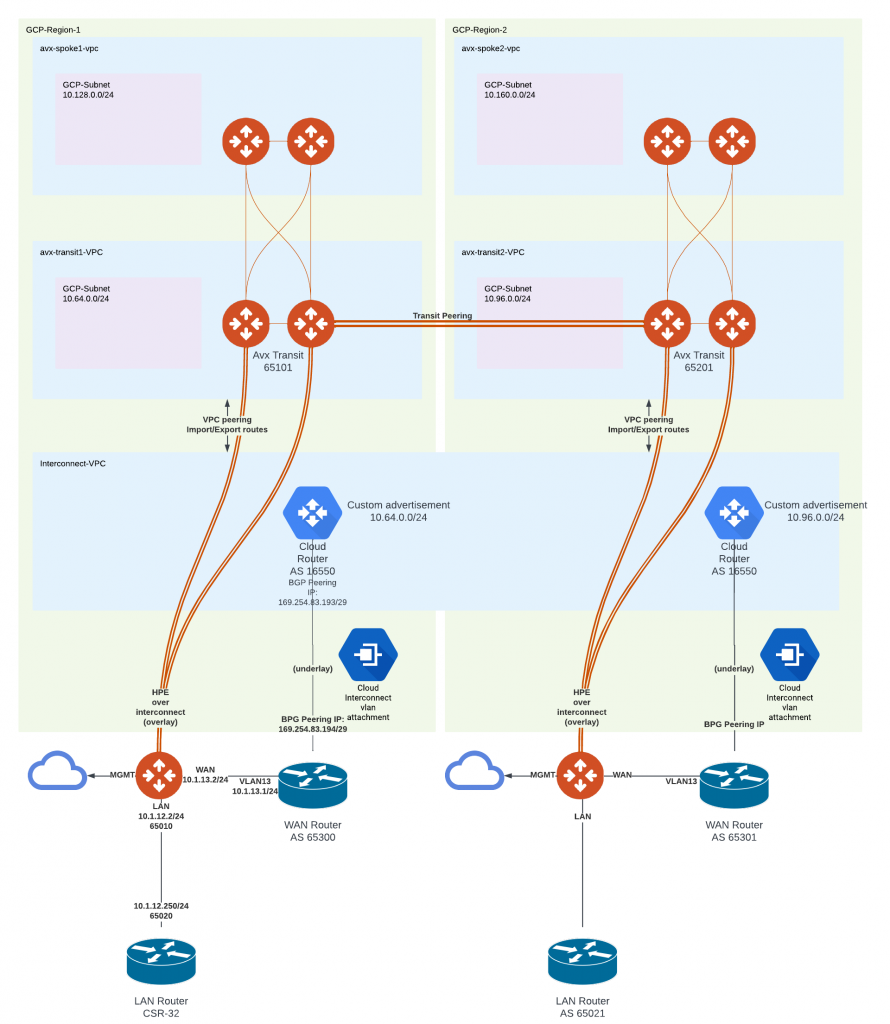

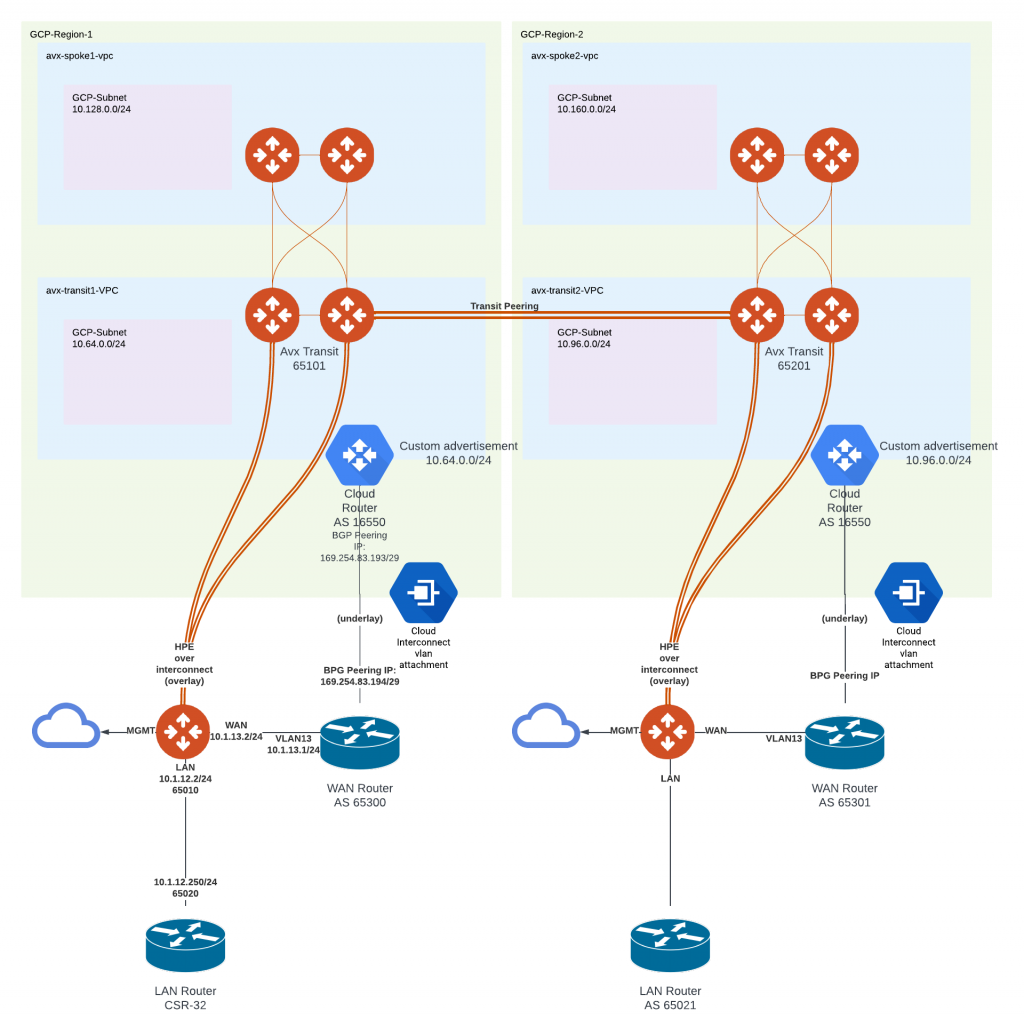

Multi-region deployment architecture

- Brownfield scenario, where the customer already has Interconnect deployed.

- They may have one or more VPCs with which the VLAN attachments are associated.

- The multi-region architecture is similar to what was discussed above: each transit VPC peers with the Interconnect VPC in its region.

- Where a single Interconnect VPC terminates VLAN attachments from multiple regions, the transit VPCs all peer with that same Interconnect VPC.

- Notice that there's no need for a subnet in the Interconnect VPC.

- An Aviatrix Transit Peering connection can be established to provide east-west traffic flow within GCP.

- In a greenfield deployment, we can have the Interconnect VLAN attachment land directly in the Aviatrix Transit VPC in the corresponding region.

- You can think of the Aviatrix Transit VPC as the former Interconnect VPC; however, in this architecture it does require a subnet in which the Aviatrix Transit Gateway places its eth0.

- In this architecture, when Aviatrix Spoke uses High Performance Encryption to attach to Aviatrix Transit, make sure the VPC peering connections are not configured to import/export custom routes, so that the underlay dynamic routes learned from Cloud Router don't get programmed into the spoke VPC route table.
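For the brownfield case where one Interconnect VPC terminates VLAN attachments from several regions, the per-region peerings could be expressed with `for_each` instead of one hand-written pair per region. This is a sketch only: the `regional_transit_vpcs` variable, the second region `us-east4` and the transit VPC names in its self-links are hypothetical, while `var.interconnect_vpc_name` and `var.interconnect_vpc_selflink` come from the sample code. In a real deployment the map would more likely be built from the outputs of one `mc-transit` module per region.

```hcl
# Hypothetical map of region => transit VPC self-link; names are illustrative.
variable "regional_transit_vpcs" {
  type = map(string)
  default = {
    us-central1 = "https://www.googleapis.com/compute/v1/projects/jye-01/global/networks/avx-transit-us-central1"
    us-east4    = "https://www.googleapis.com/compute/v1/projects/jye-01/global/networks/avx-transit-us-east4"
  }
}

# Transit side of each peering: import the on-prem dynamic routes, export nothing extra.
resource "google_compute_network_peering" "transit_to_interconnect" {
  for_each             = var.regional_transit_vpcs
  name                 = "transit-${each.key}-to-${var.interconnect_vpc_name}"
  network              = each.value
  peer_network         = var.interconnect_vpc_selflink
  export_custom_routes = true
  import_custom_routes = true
}

# Interconnect side of each peering: export the Cloud Router-learned routes.
resource "google_compute_network_peering" "interconnect_to_transit" {
  for_each             = var.regional_transit_vpcs
  name                 = "${var.interconnect_vpc_name}-to-transit-${each.key}"
  network              = var.interconnect_vpc_selflink
  peer_network         = each.value
  export_custom_routes = true
  import_custom_routes = true
}
```

Each transit VPC CIDR still has to be added to the Cloud Router custom advertisement, as in the single-region case.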

If there is no Aviatrix Edge, how can we connect to the on-premises router via an overlay? The Interconnects are connected, and I understand that GCP does not support GRE.

Is there any way to connect on-premises without using Edge? GCP supports GRE, but Aviatrix doesn't seem to support GCP's GRE yet. Should an IPsec VPN be used as the overlay?

I haven't had the chance to write up or validate some other potential architectures. One would be using Cloud Router + Cloud Interconnect to create connectivity to on-prem, then creating a BGP over LAN full mesh between Aviatrix Transit and Cloud Router. Another would be some form of IPsec/BGP connectivity from the on-prem device directly to Aviatrix Transit, or using Cloud VPN/Network Connectivity Center/Cloud Router to achieve a similar result.